- - -

-

-

-### Supported adapters

-

-

-

-

-### Supported adapters

-

- +

+

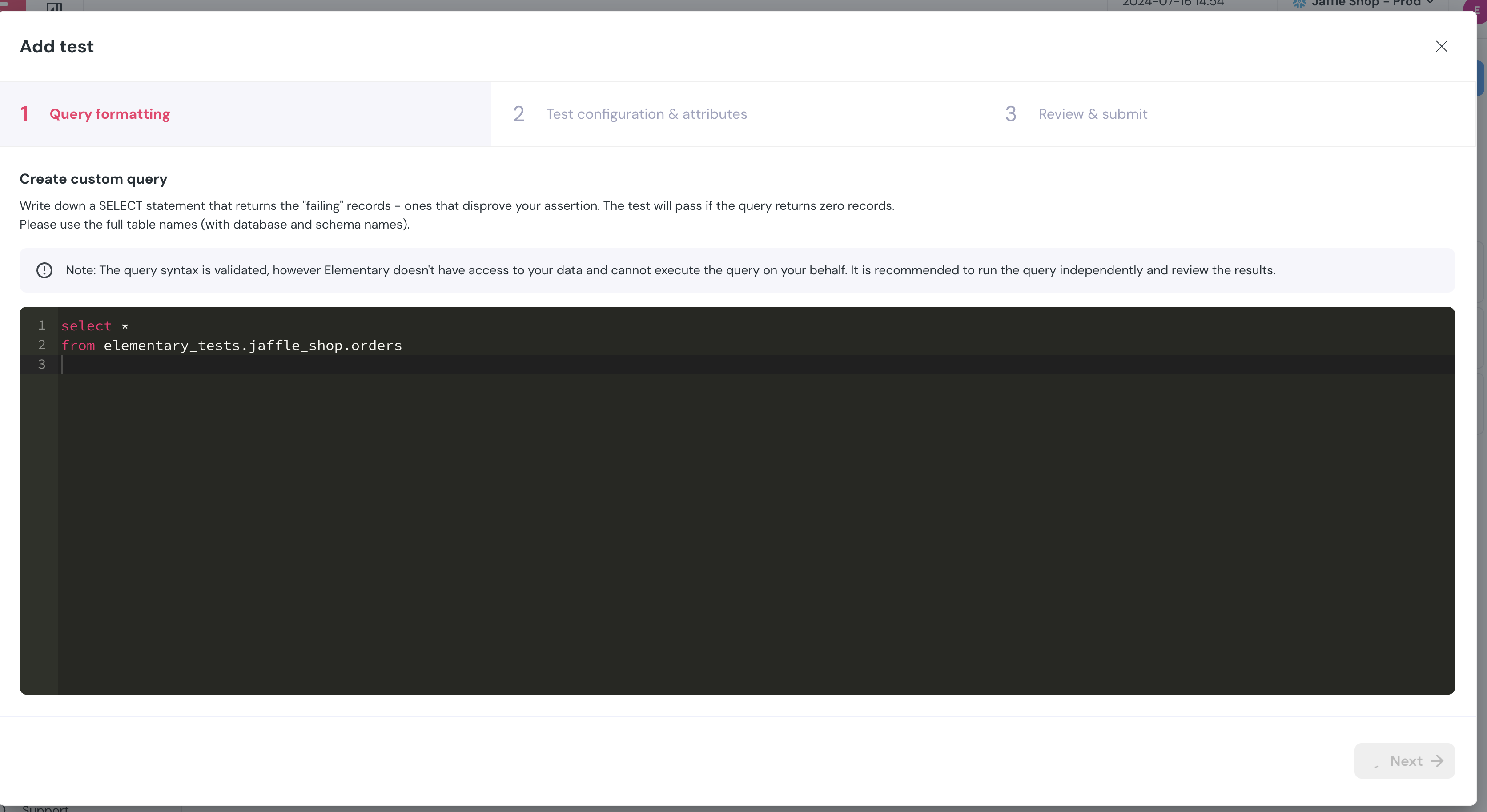

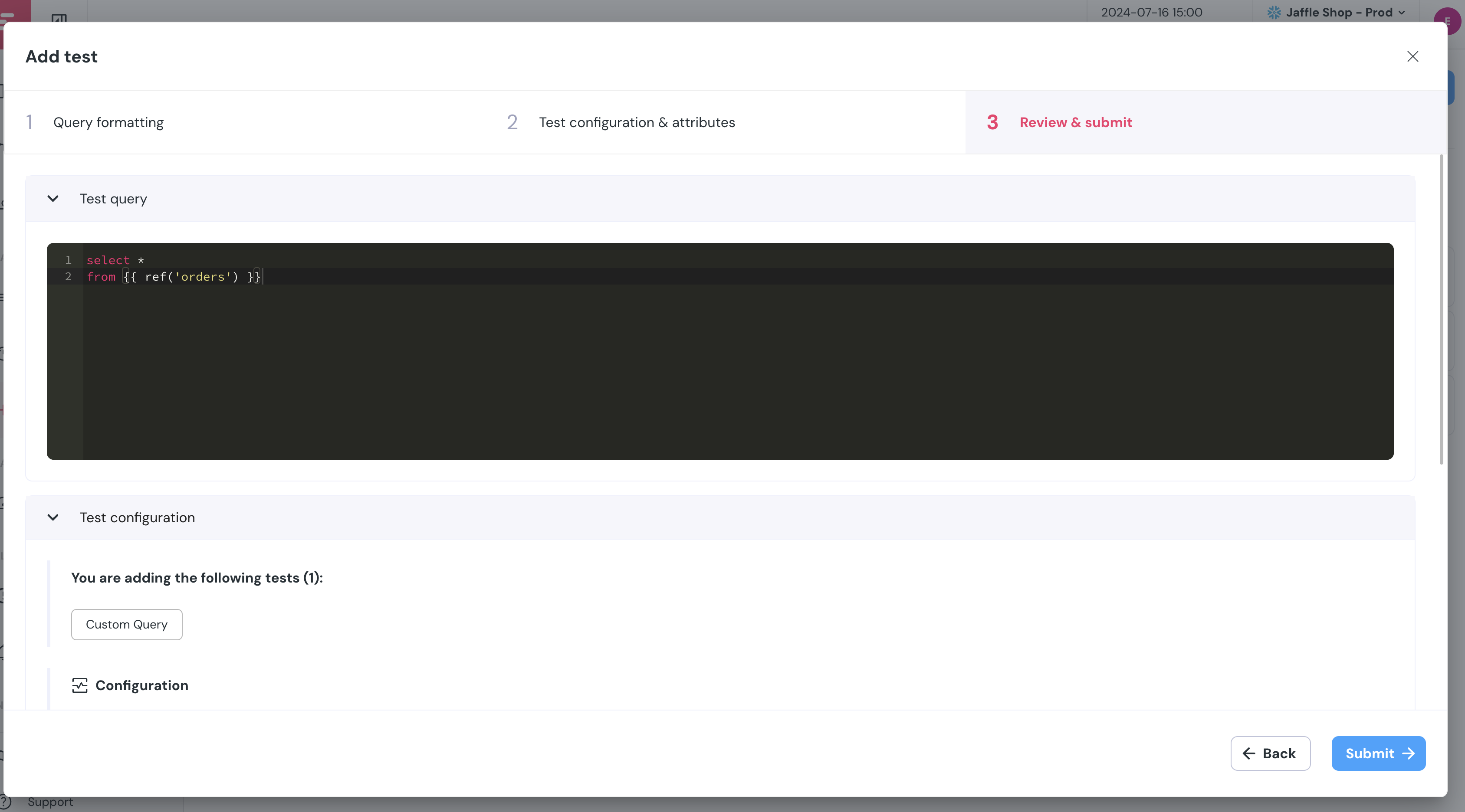

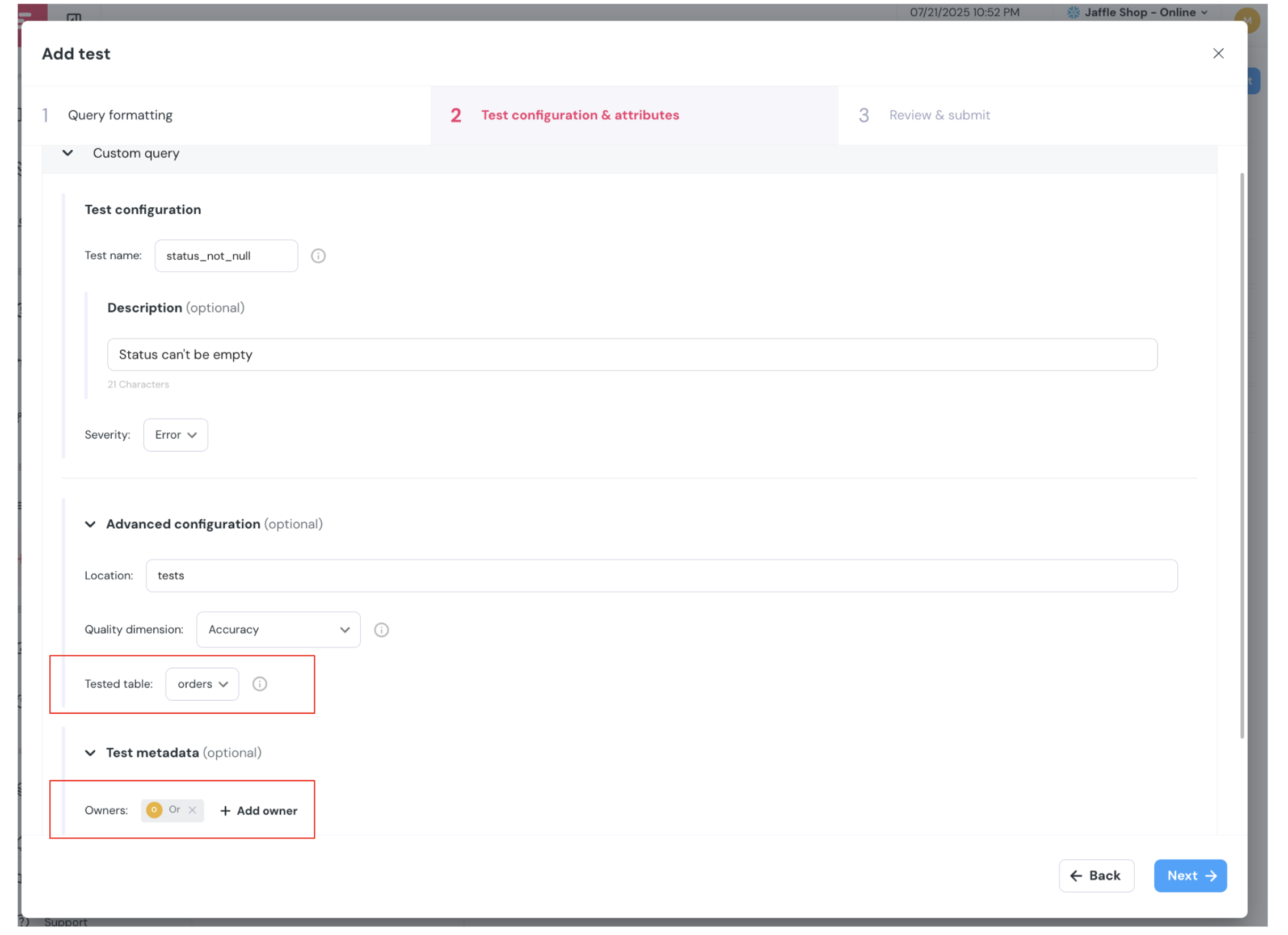

+## Custom SQL tests in UI

+

+

+

+

+## Custom SQL tests in UI

+

+ +

+

+## Model runs in dashboard

+

+

+

+

+## Model runs in dashboard

+

+ +

+

+## DAG subset in Lineage

+

+

+

+

+## DAG subset in Lineage

+

+ +

+

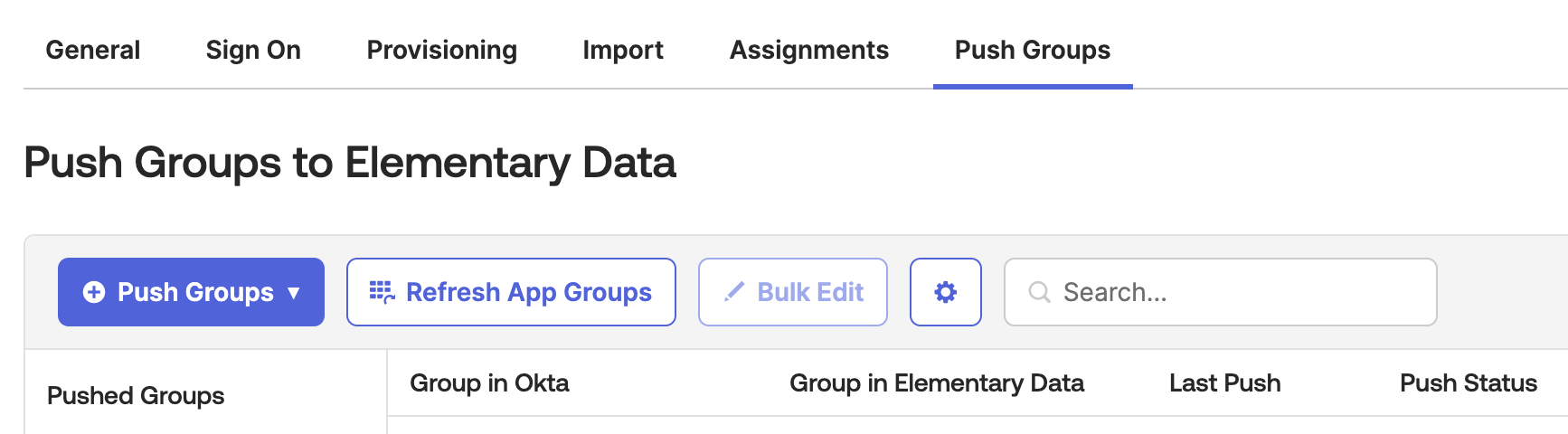

+## Role-based access control

+

+

+

+

+## Role-based access control

+

+ +

diff --git a/docs/cloud/ai-agents/catalog-agent.mdx b/docs/cloud/ai-agents/catalog-agent.mdx

new file mode 100644

index 000000000..98d1275c8

--- /dev/null

+++ b/docs/cloud/ai-agents/catalog-agent.mdx

@@ -0,0 +1,54 @@

+---

+title: "Catalog AI Agent"

+sidebarTitle: "Catalog agent"

+icon: "book-open"

+---

+

+

diff --git a/docs/cloud/ai-agents/catalog-agent.mdx b/docs/cloud/ai-agents/catalog-agent.mdx

new file mode 100644

index 000000000..98d1275c8

--- /dev/null

+++ b/docs/cloud/ai-agents/catalog-agent.mdx

@@ -0,0 +1,54 @@

+---

+title: "Catalog AI Agent"

+sidebarTitle: "Catalog agent"

+icon: "book-open"

+---

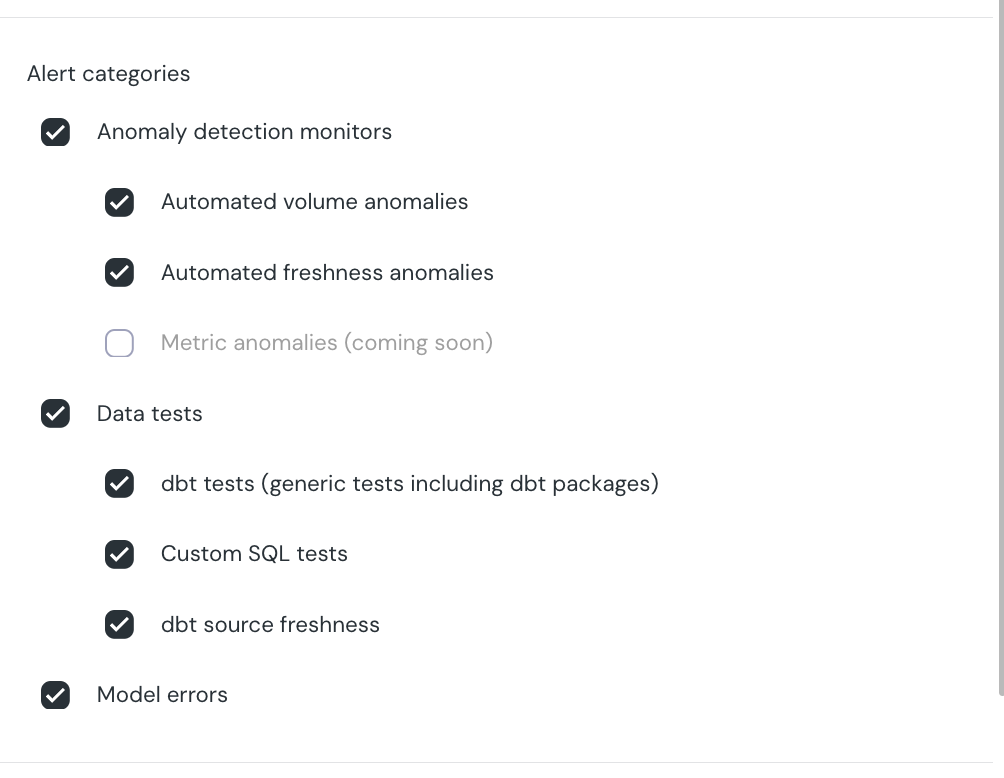

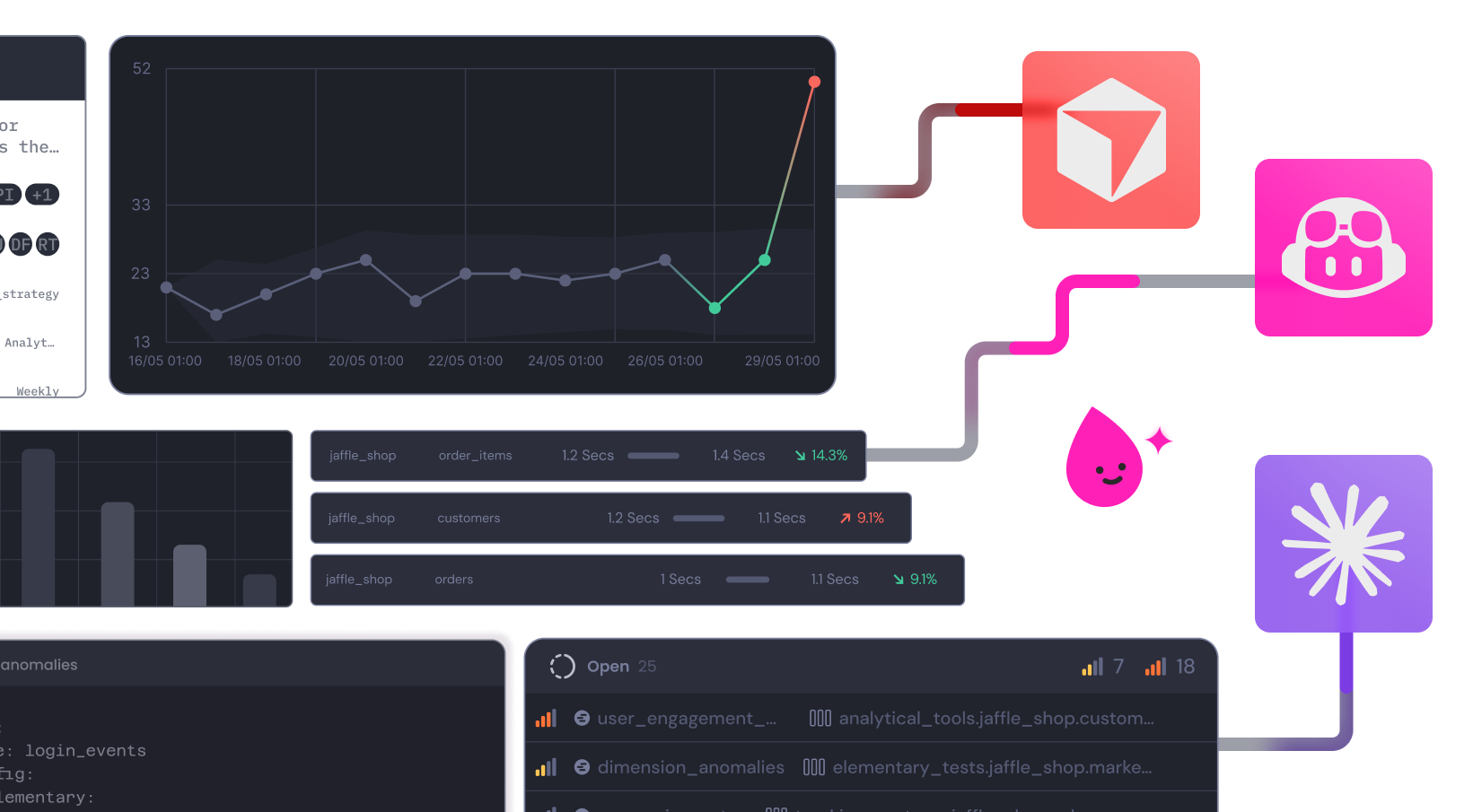

+- Anomaly detection and dbt tests

- Automated freshness & volume monitors

- ML-powered anomaly detection

- dbt and cloud tests

- Bulk add/edit for tests

- Basic alerts

- Table-level lineage

- Interactive alerts

- Column-level lineage up to BI

- BI integrations

- Test results history

- Incident management

- Model and test performance

- Model and test performance

- Performance alerts

- No-code test editor

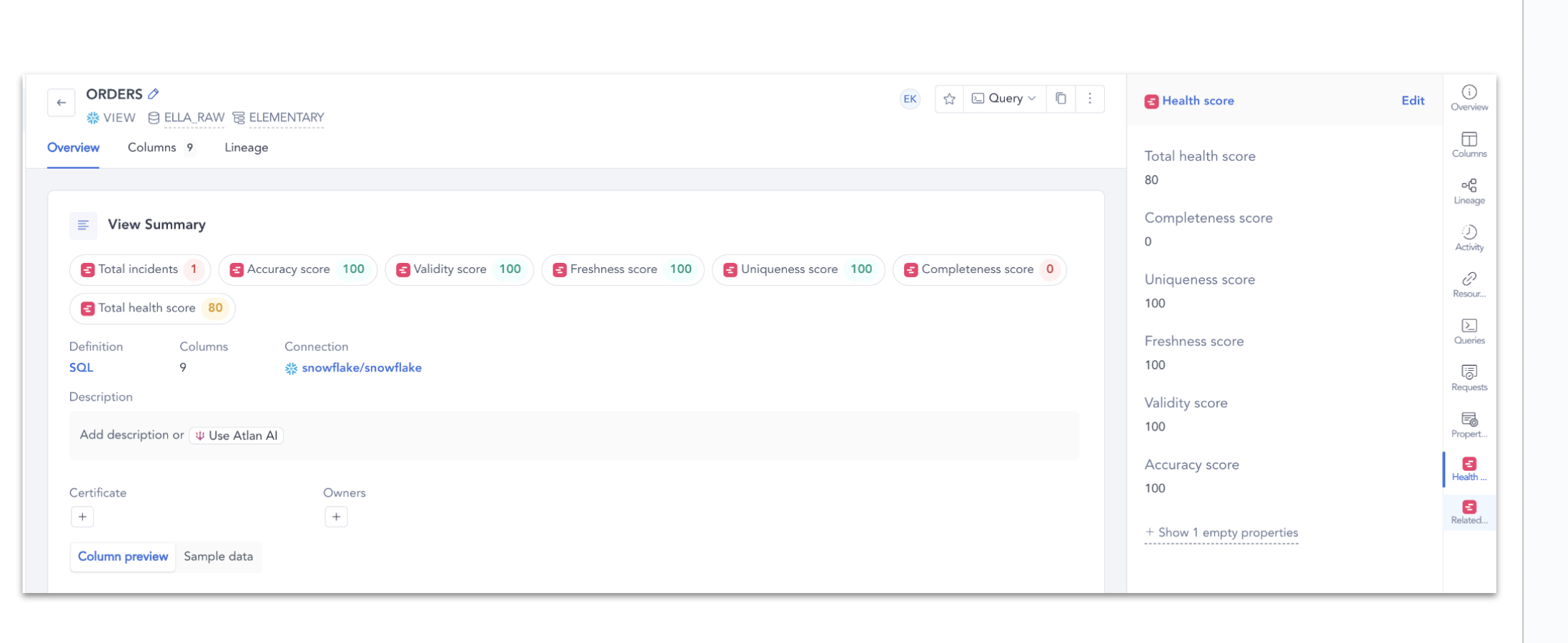

- Data health scores

- External catalog integrations

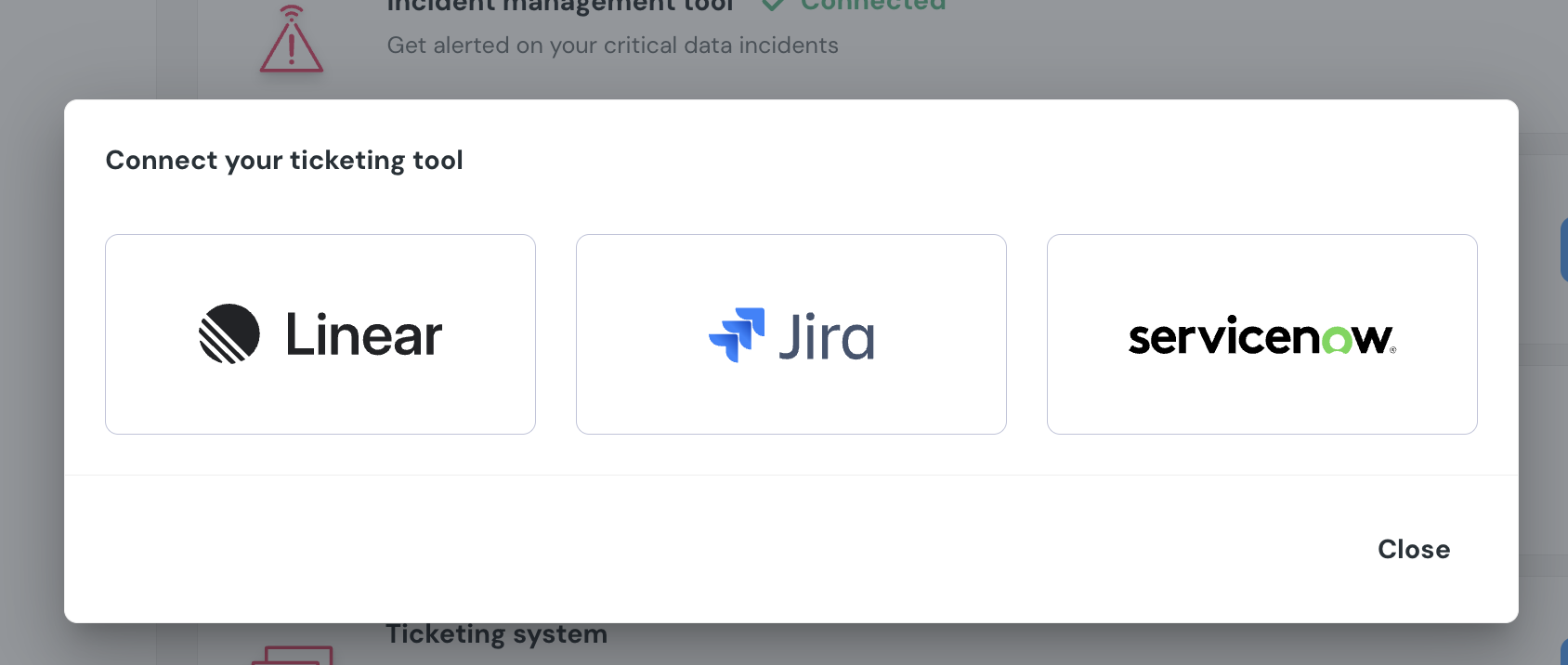

- Ticketing system integrations

- Catalog

- Metadata in code and UI

+

+  +

+  +

+

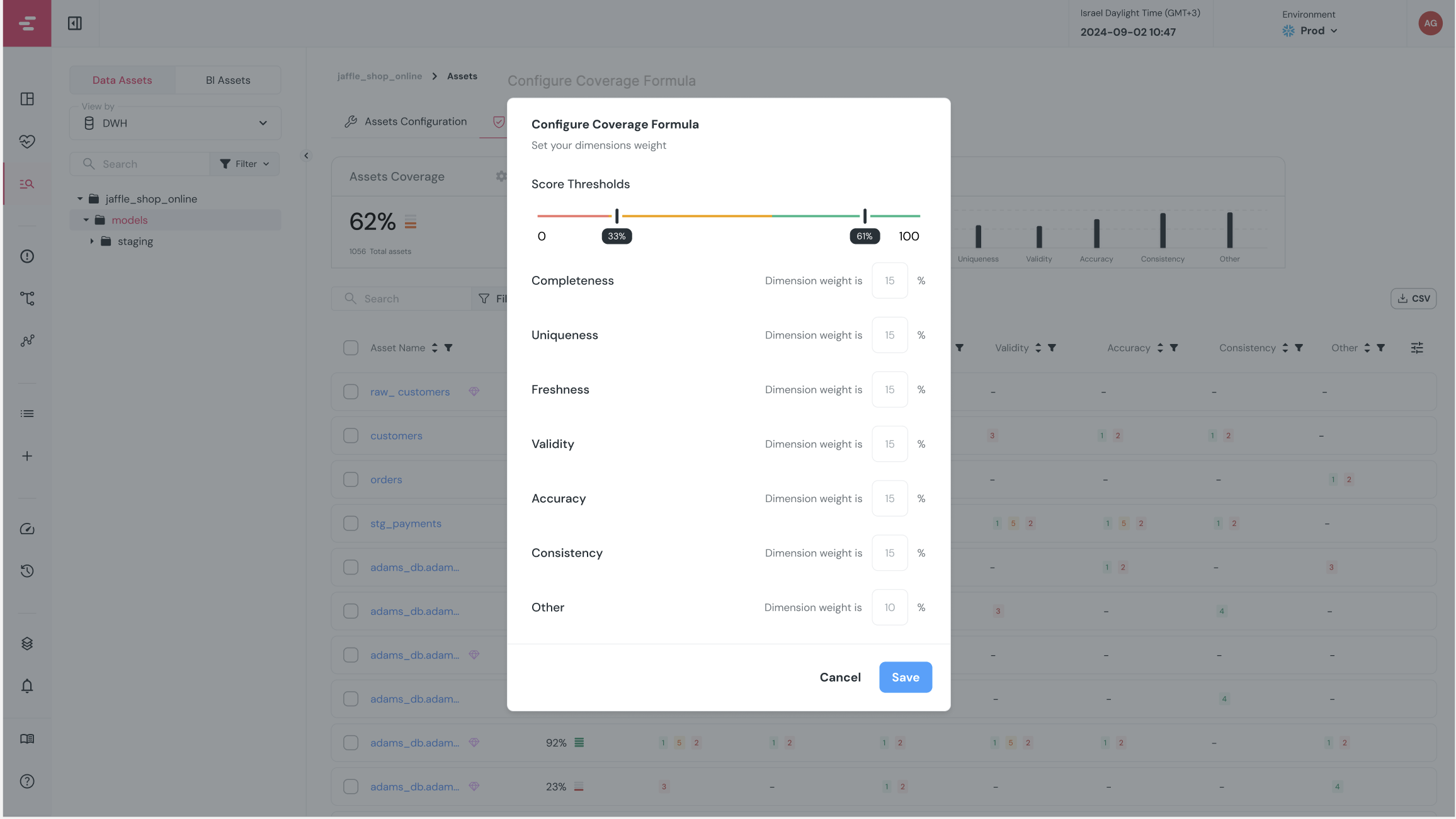

+## How it works

+

+Digest rules are configured under **Alert Rules → Incidents Digest** tab. Each rule defines:

+

+- **When** to send the digest (cadence: daily or weekly, at a specific hour)

+- **What** incidents to include (filters by tag, owner, status, model, or category)

+- **Where** to send it (one or more destinations such as a Slack channel or email)

+

+At the scheduled time, Elementary collects all incidents that match the rule's filters since the last digest was sent, and delivers them as a single message.

+

+

+

+

+

+## How it works

+

+Digest rules are configured under **Alert Rules → Incidents Digest** tab. Each rule defines:

+

+- **When** to send the digest (cadence: daily or weekly, at a specific hour)

+- **What** incidents to include (filters by tag, owner, status, model, or category)

+- **Where** to send it (one or more destinations such as a Slack channel or email)

+

+At the scheduled time, Elementary collects all incidents that match the rule's filters since the last digest was sent, and delivers them as a single message.

+

+

+  +

+

+## Creating a digest rule

+

+Navigate to **Alert Rules** in the left sidebar and select the **Incidents Digest** tab. Click **Create digest rule** to open the configuration drawer.

+

+The filters, categories, and destinations are configured the same way as in [Alert Rules](/cloud/features/alerts-and-incidents/alert-rules). The one addition unique to digest rules is **cadence**.

+

+### Cadence

+

+Choose how often the digest should be sent:

+

+- **Daily** — sent once a day at the hour you choose.

+- **Weekly** — sent once a week on the day and at the hour you choose.

+

+The time is in UTC.

+

+

+

+

+

+## Creating a digest rule

+

+Navigate to **Alert Rules** in the left sidebar and select the **Incidents Digest** tab. Click **Create digest rule** to open the configuration drawer.

+

+The filters, categories, and destinations are configured the same way as in [Alert Rules](/cloud/features/alerts-and-incidents/alert-rules). The one addition unique to digest rules is **cadence**.

+

+### Cadence

+

+Choose how often the digest should be sent:

+

+- **Daily** — sent once a day at the hour you choose.

+- **Weekly** — sent once a week on the day and at the hour you choose.

+

+The time is in UTC.

+

+

+  +

+

+## Managing digest rules

+

+Once created, digest rules appear as cards in the **Incidents Digest** tab. From each card you can:

+

+- **Edit** the rule to change any configuration.

+- **Activate / Deactivate** the rule without deleting it.

+- **Delete** the rule.

+

+

+

+

+

+## Managing digest rules

+

+Once created, digest rules appear as cards in the **Incidents Digest** tab. From each card you can:

+

+- **Edit** the rule to change any configuration.

+- **Activate / Deactivate** the rule without deleting it.

+- **Delete** the rule.

+

+

+  +

+

+## Relationship with alert rules

+

+Digest rules are independent of [Alert Rules](/cloud/features/alerts-and-incidents/alert-rules). You can have both real-time alert rules and digest rules active at the same time — they are evaluated separately. A common pattern is to use real-time alert rules for critical on-call channels and digest rules for broader team summaries.

diff --git a/docs/cloud/features/alerts-and-incidents/incident-management.mdx b/docs/cloud/features/alerts-and-incidents/incident-management.mdx

new file mode 100644

index 000000000..85607407e

--- /dev/null

+++ b/docs/cloud/features/alerts-and-incidents/incident-management.mdx

@@ -0,0 +1,54 @@

+---

+title: Incident Management

+sidebarTitle: Incident management

+---

+

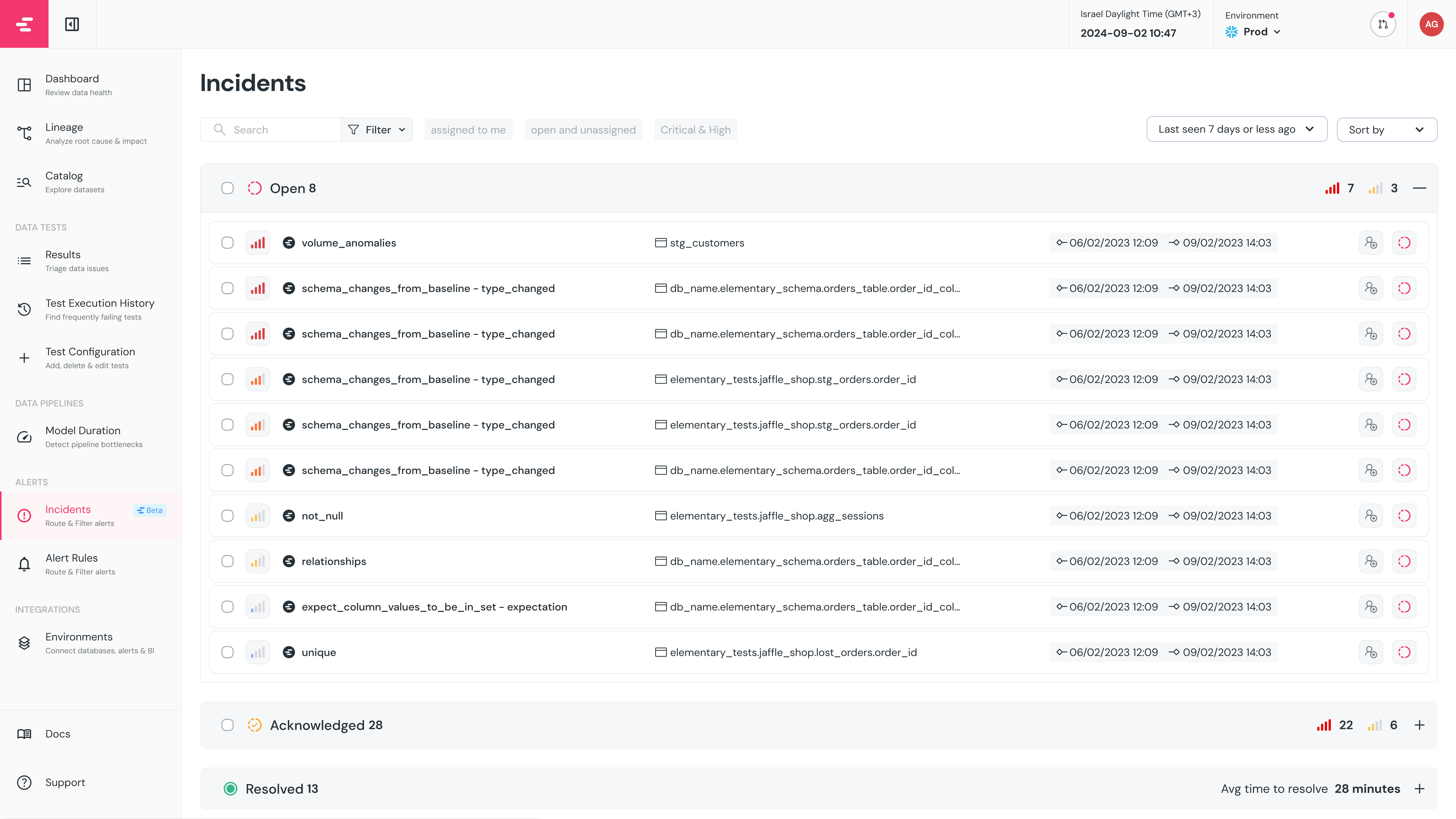

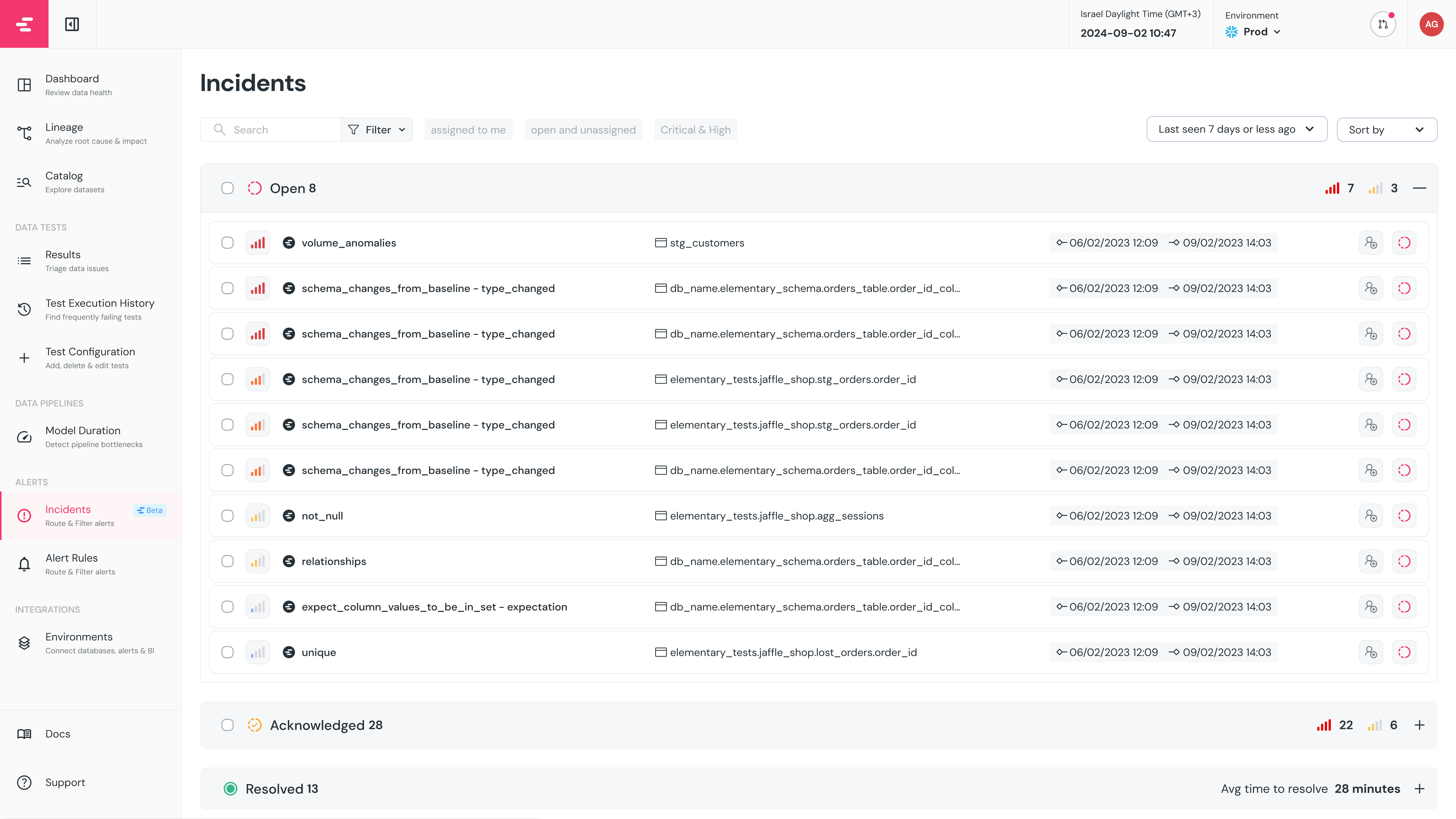

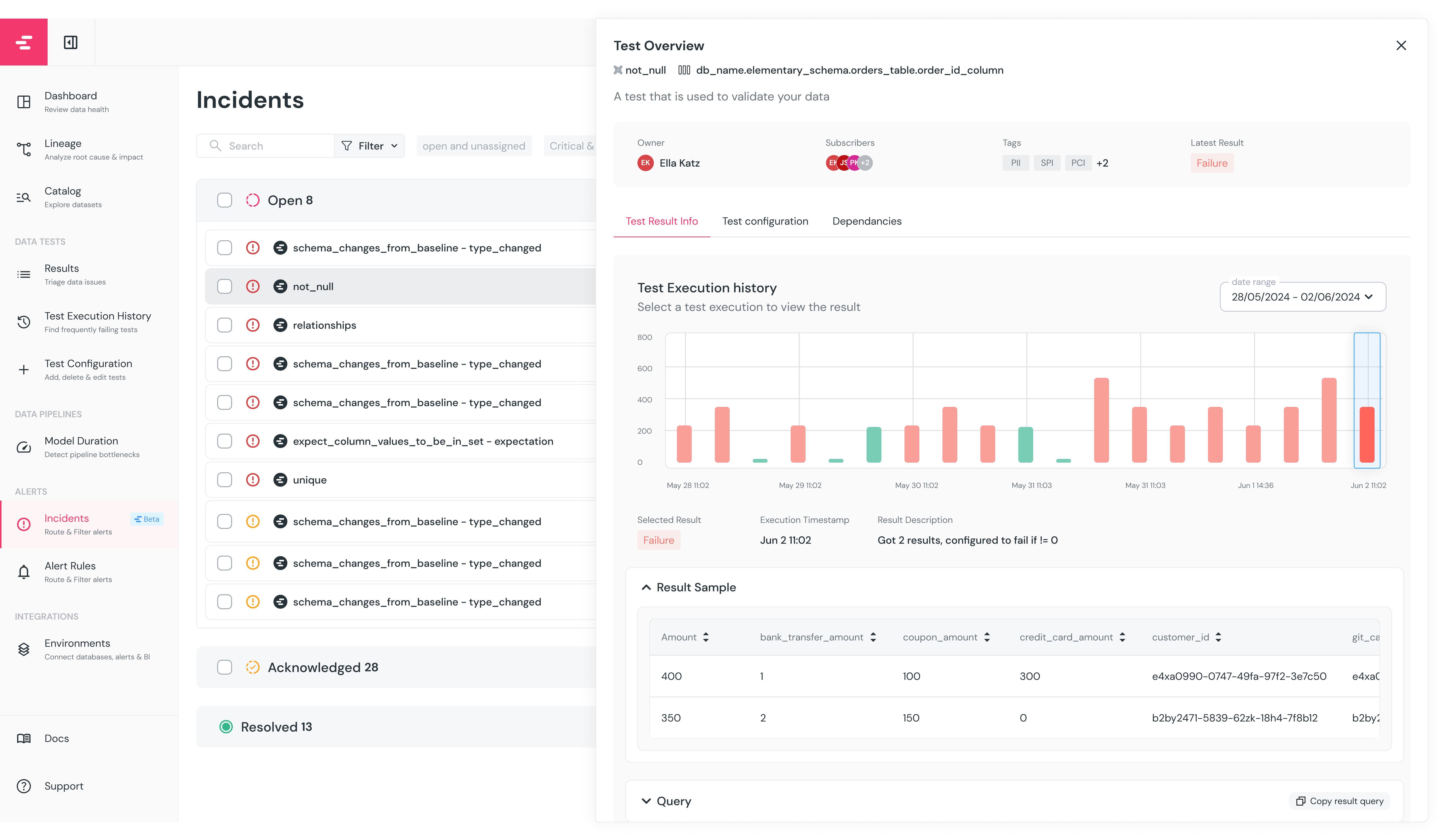

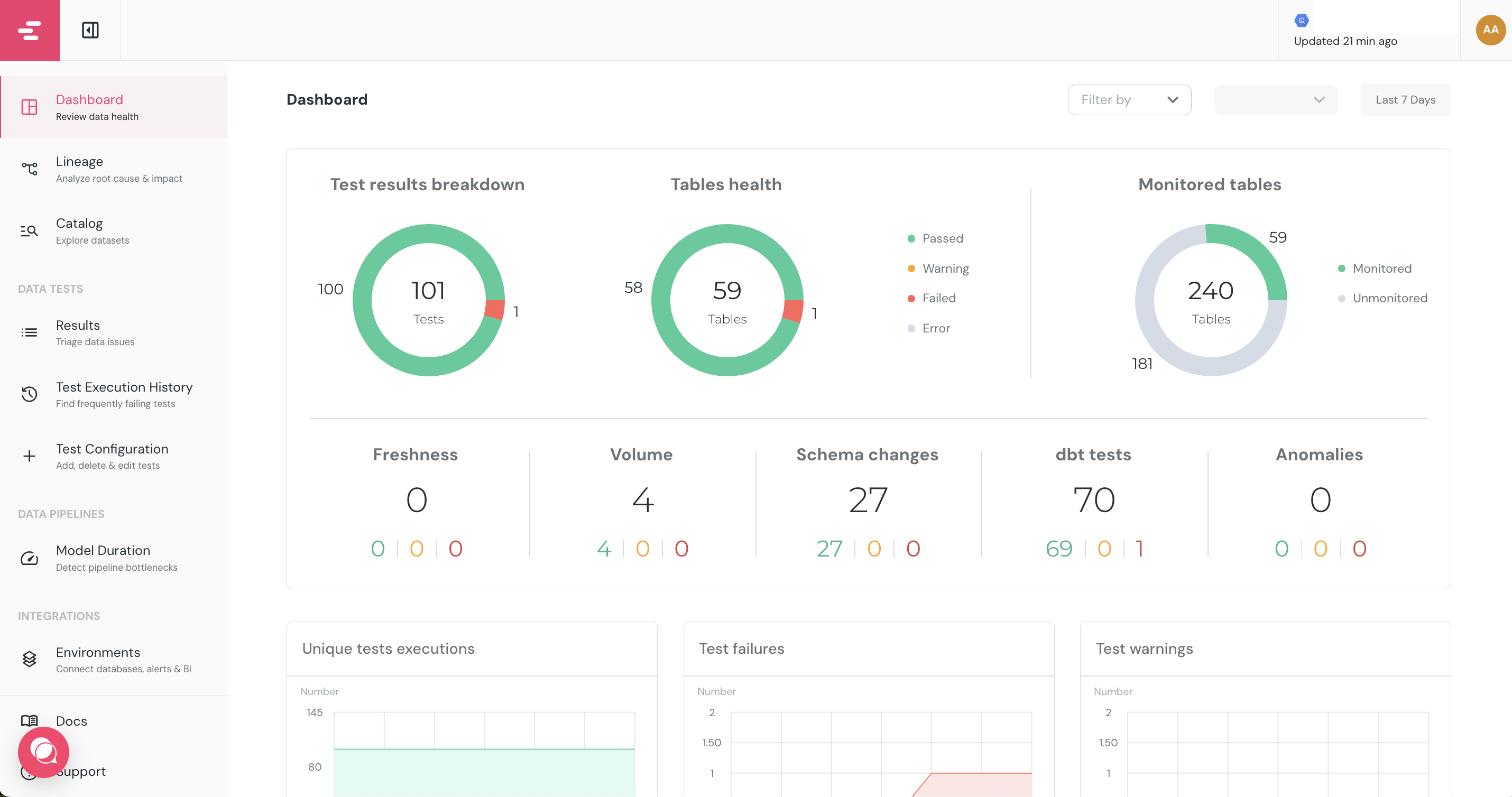

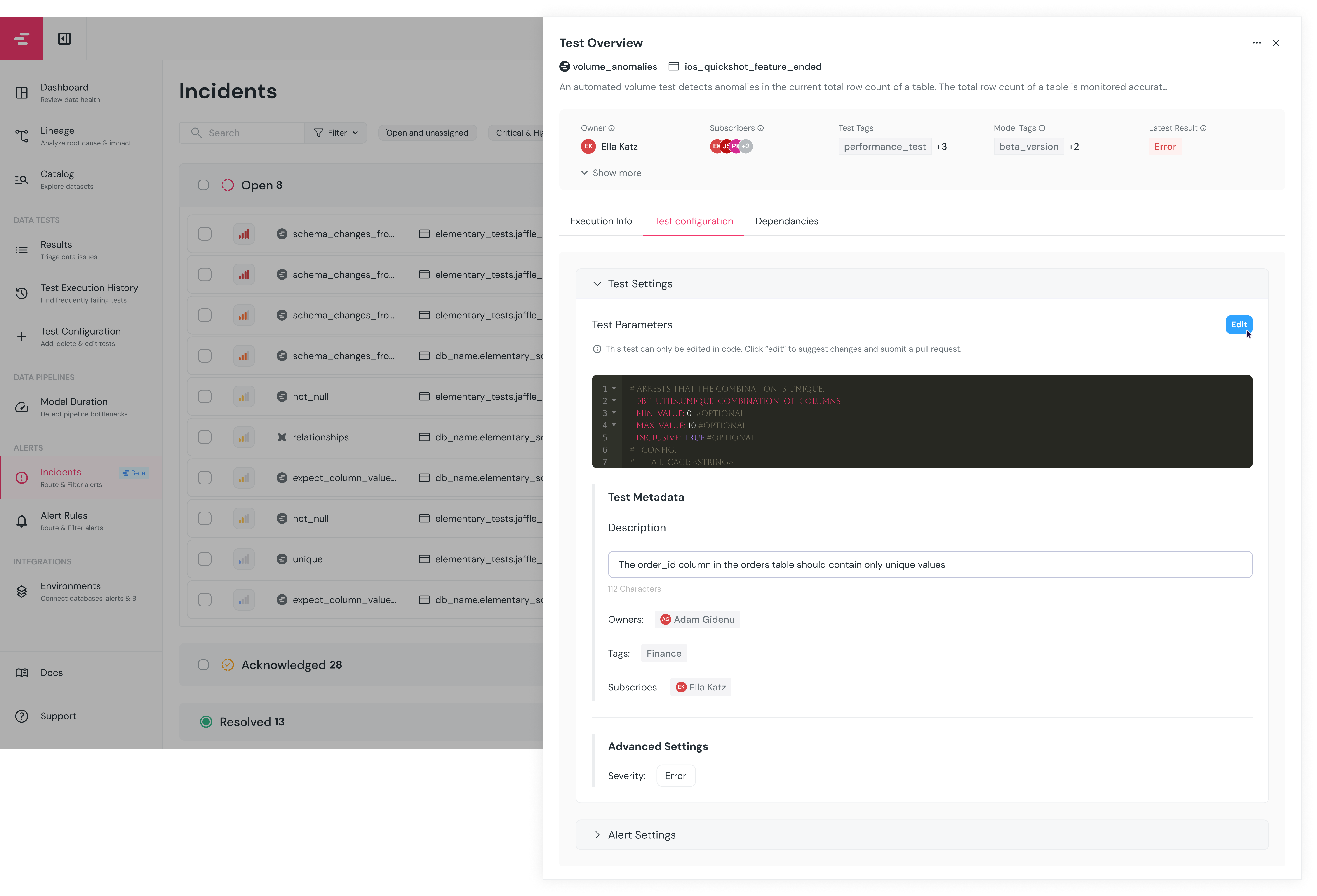

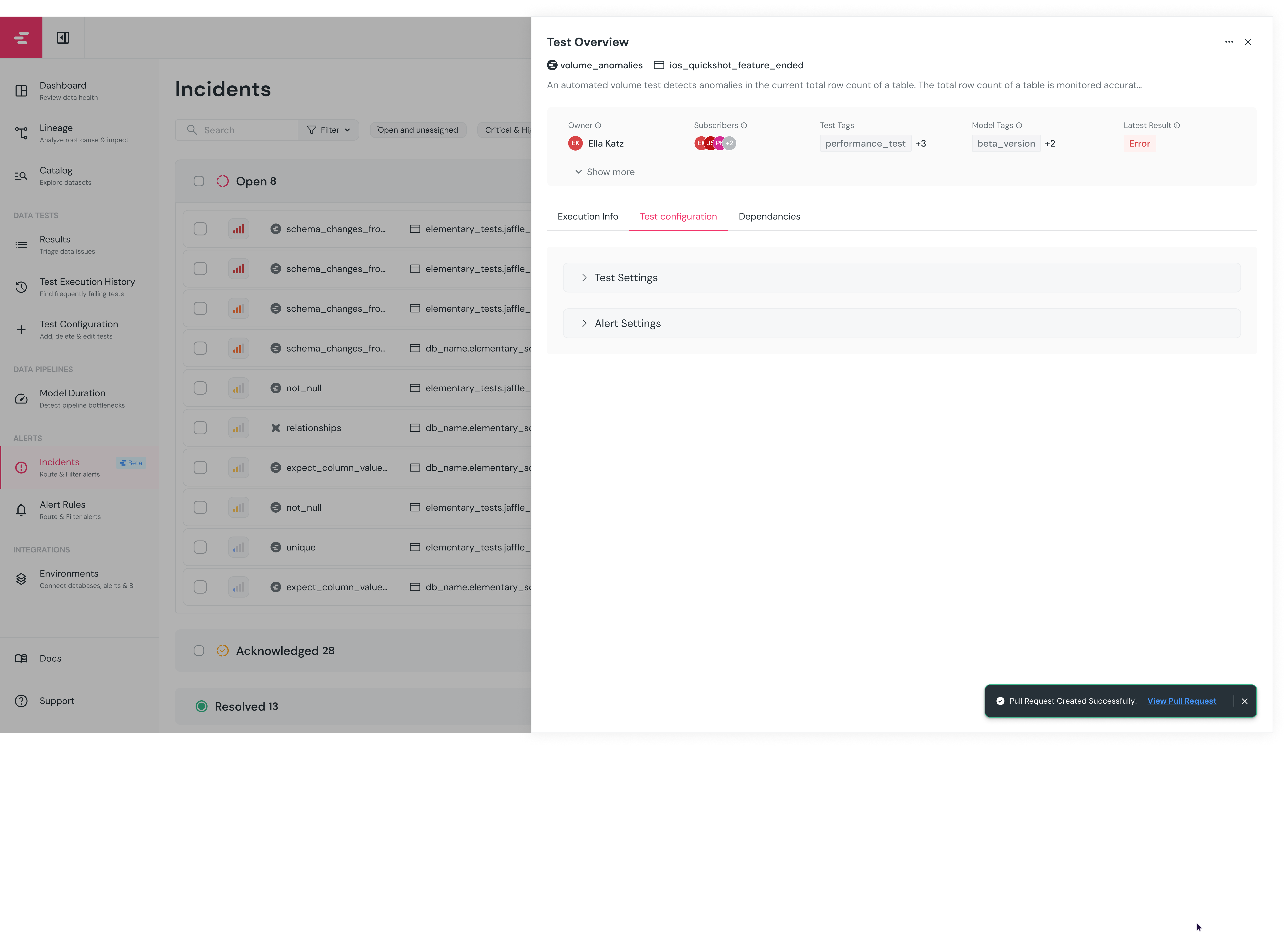

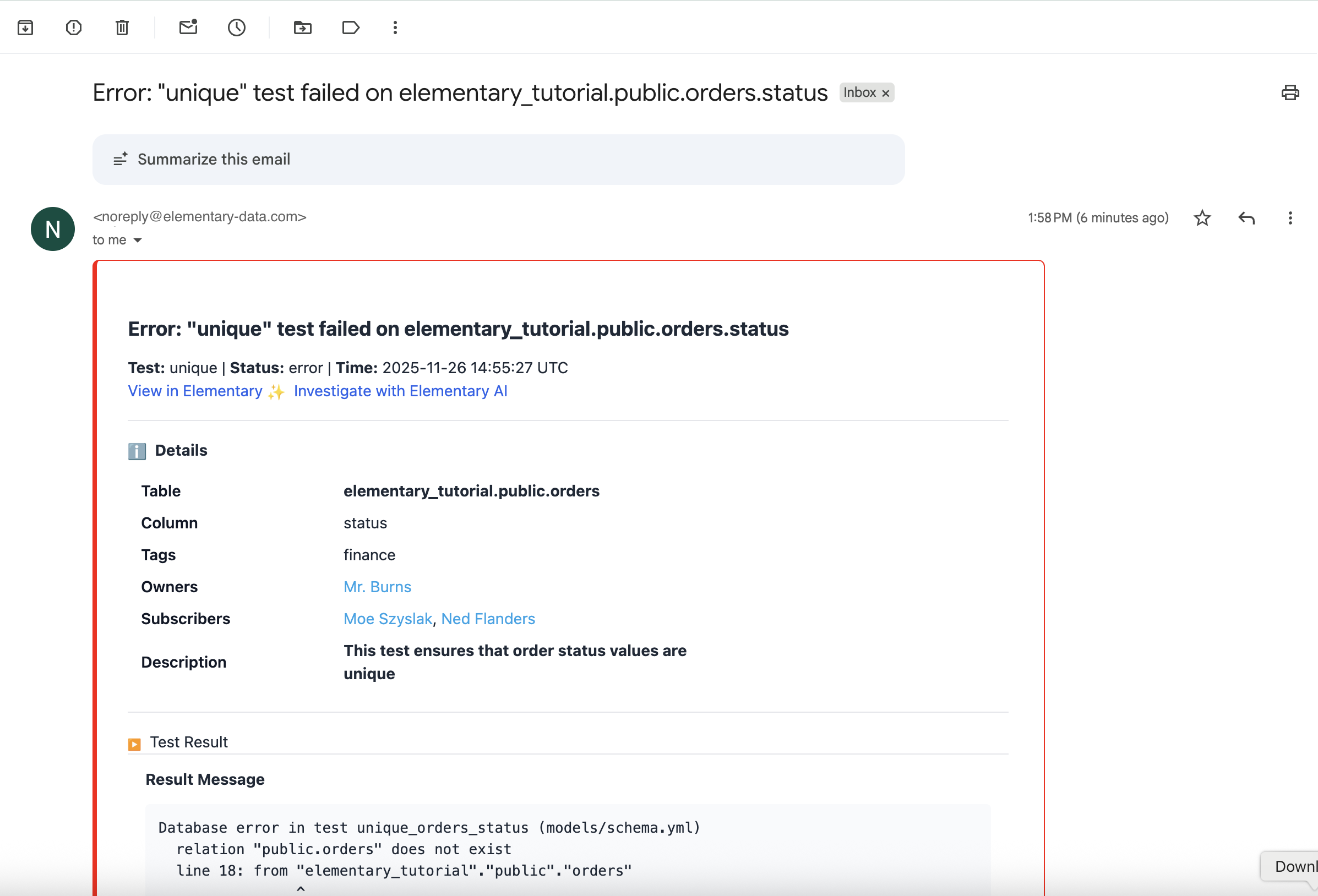

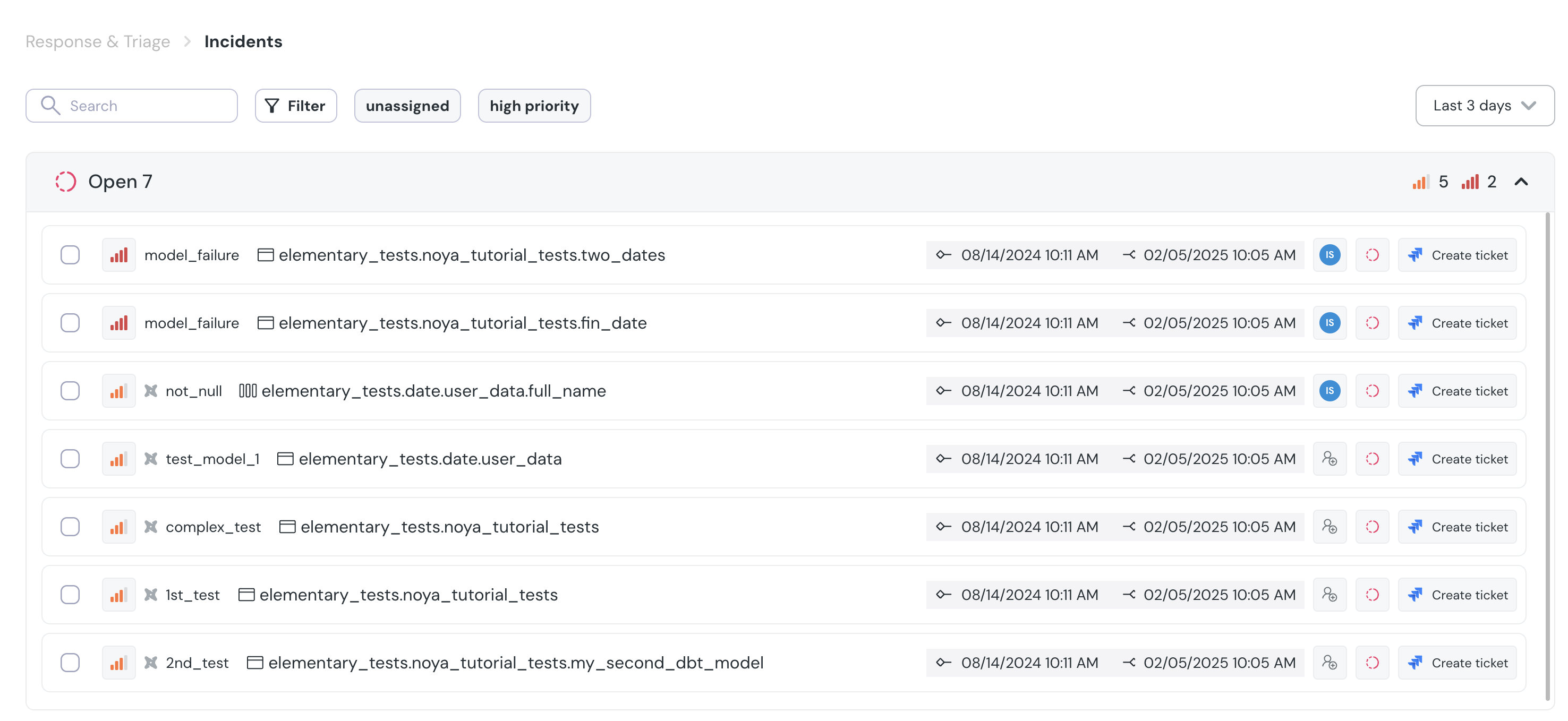

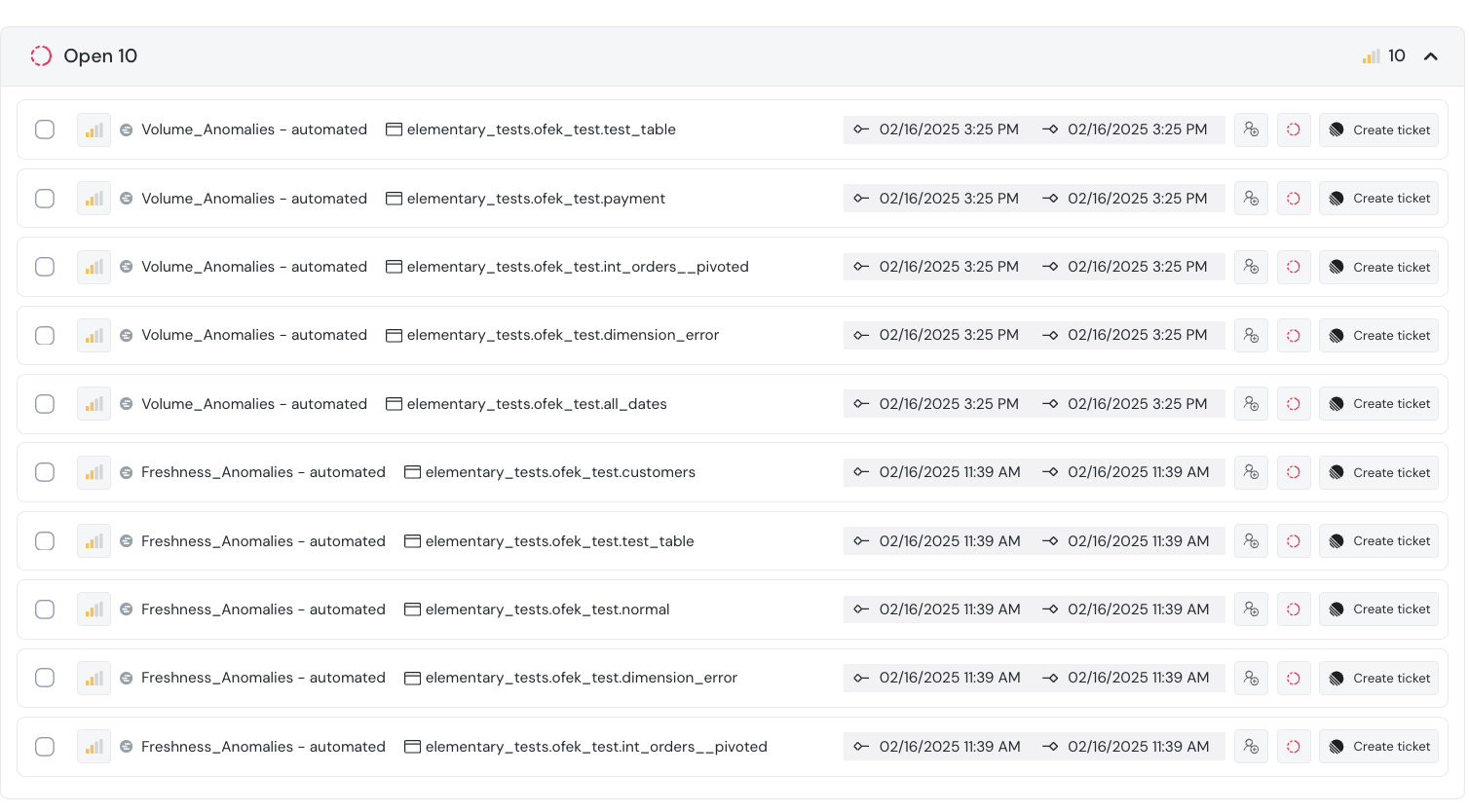

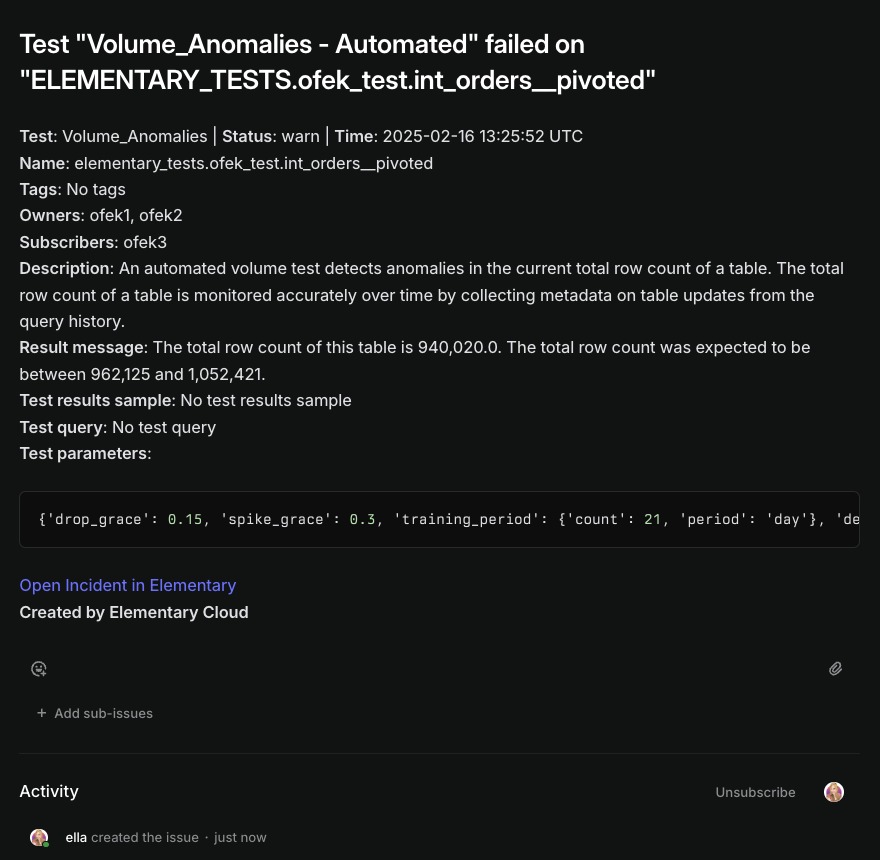

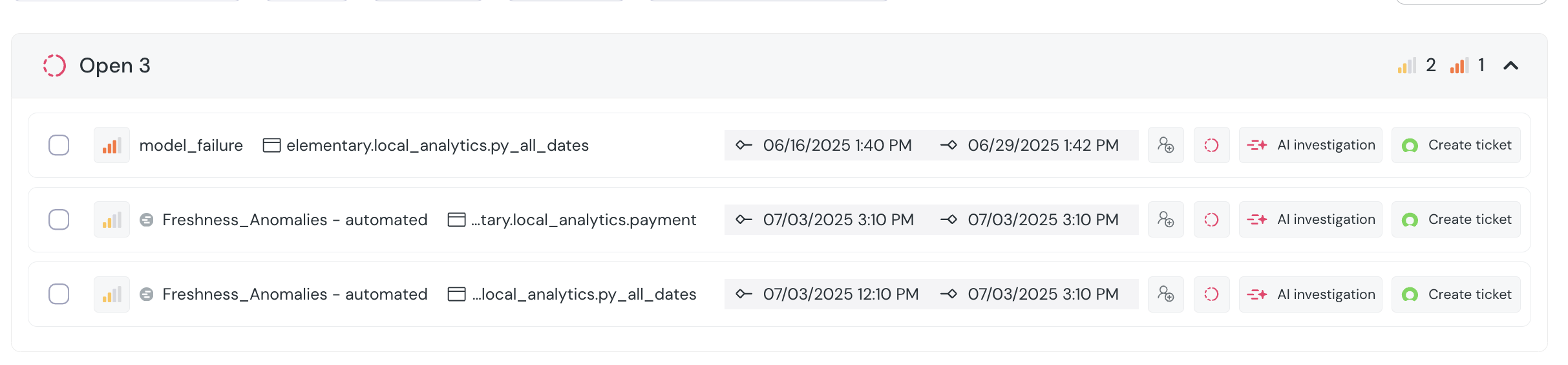

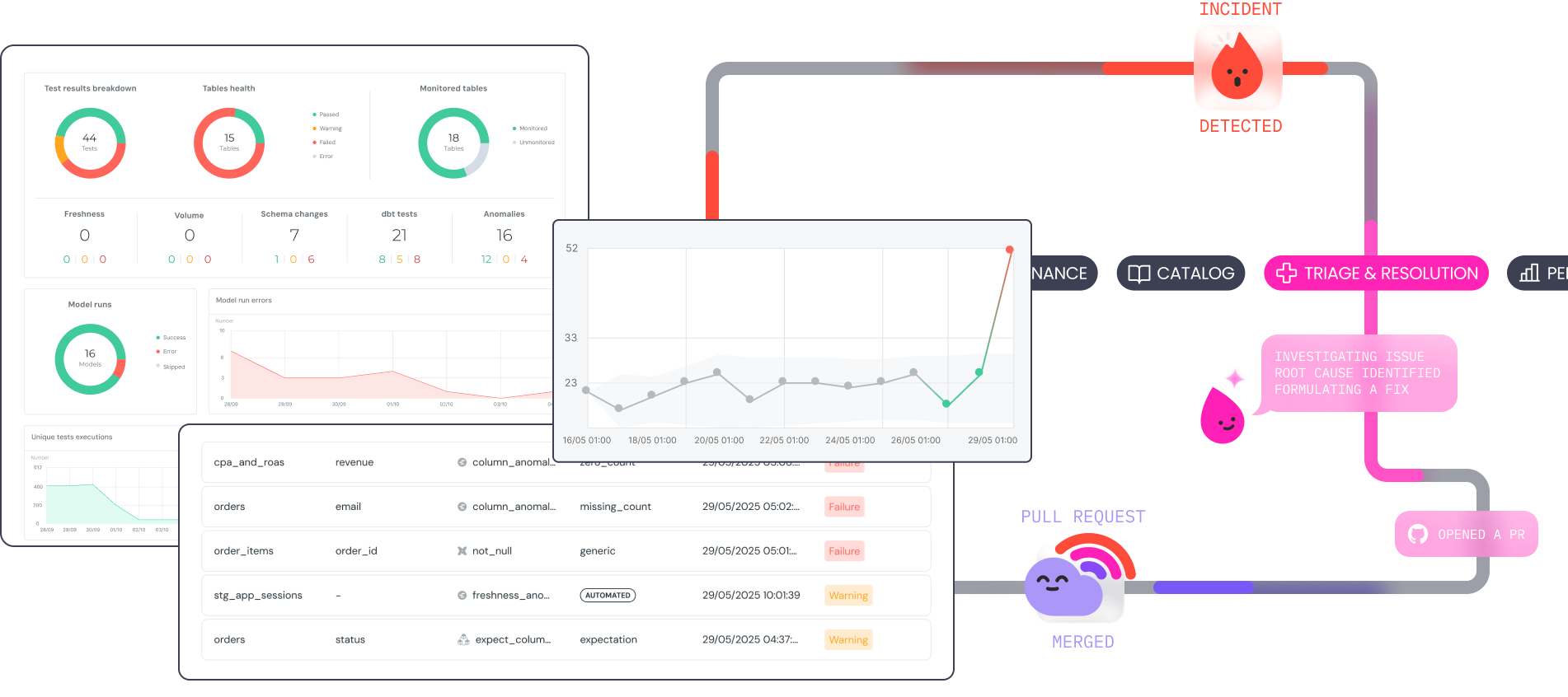

+The `Incidents` page is designed to enable your team to stay on top of open incidents and collaborate on resolving them.

+The page gives a comprehensive overview of all current and previous incidents, where users can view the status, prioritize, assign and resolve incidents.

+

+## Incidents view and filters

+

+The page provides a view of all incidents, and useful filters:

+

+- **Quick Filters:** Preset quick filters for all, unresolved and “open and unassigned” incidents.

+- **Filter:** Allows users to filter incidents based on various criteria such as status, severity, model name and assignee.

+- **Time frame:** Filter incidents which were open in a certain timeframe.

+

+

+

+

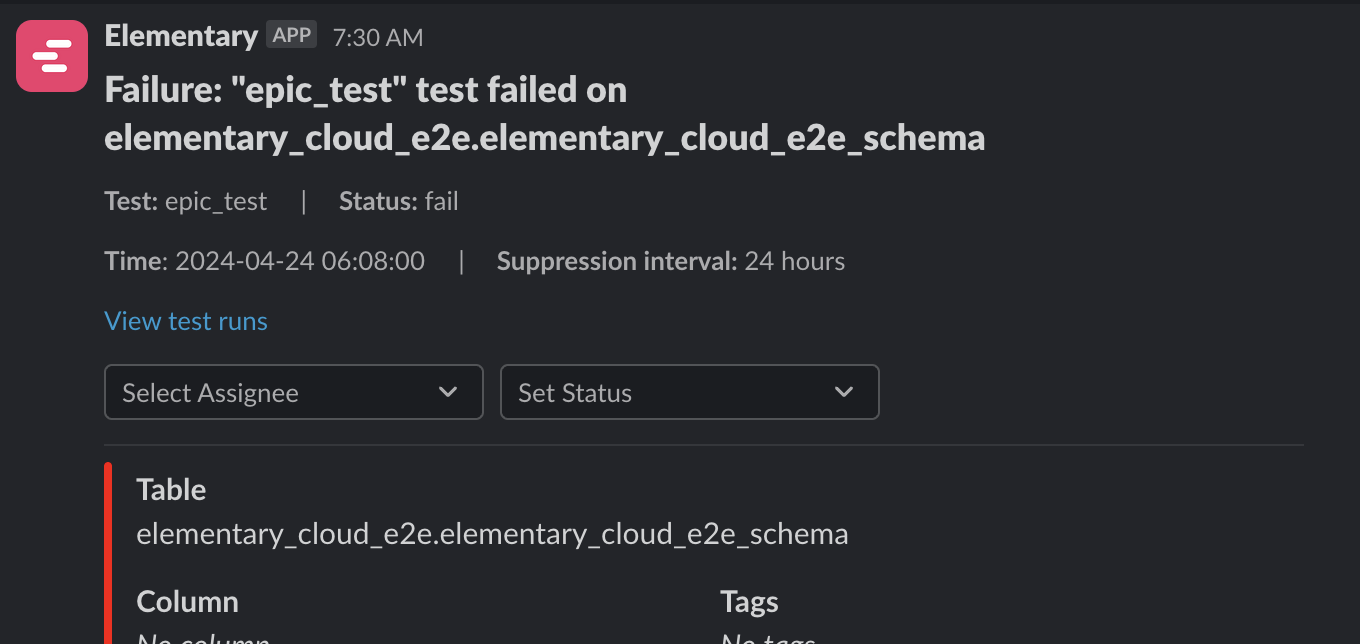

+## Interacting with Incidents

+

+An incident has a status, assignee and severity.

+These can be set in the Incidents page, or from an alert in integrations that support alert actions.

+

+- **Incident status**: Will be set to `open` by default, and can be changed to `Acknowledged` and back to `Open`. When an alert is manually or automatically set as `Resolved`, it will close and will no longer be modified.

+- **Incident assignee**: An incident can be assigned to any user on the team, and they will be notified.

+ - If you assign an incident to a user, it is recommended to leave the incident `Open` until the user changes status to `Acknowledged`.

+- **Incident severity**: Severity of an incident can be Low, Normal, High or Critical. By default, model errors are set to Critical, test failures set to High and warnings are marked as Normal, but the severity can be changed manually. _Coming soon_ : Severity will be automated by an analysis of the impacted assets.

+

+

+

+

+## Relationship with alert rules

+

+Digest rules are independent of [Alert Rules](/cloud/features/alerts-and-incidents/alert-rules). You can have both real-time alert rules and digest rules active at the same time — they are evaluated separately. A common pattern is to use real-time alert rules for critical on-call channels and digest rules for broader team summaries.

diff --git a/docs/cloud/features/alerts-and-incidents/incident-management.mdx b/docs/cloud/features/alerts-and-incidents/incident-management.mdx

new file mode 100644

index 000000000..85607407e

--- /dev/null

+++ b/docs/cloud/features/alerts-and-incidents/incident-management.mdx

@@ -0,0 +1,54 @@

+---

+title: Incident Management

+sidebarTitle: Incident management

+---

+

+The `Incidents` page is designed to enable your team to stay on top of open incidents and collaborate on resolving them.

+The page gives a comprehensive overview of all current and previous incidents, where users can view the status, prioritize, assign and resolve incidents.

+

+## Incidents view and filters

+

+The page provides a view of all incidents, and useful filters:

+

+- **Quick Filters:** Preset quick filters for all, unresolved and “open and unassigned” incidents.

+- **Filter:** Allows users to filter incidents based on various criteria such as status, severity, model name and assignee.

+- **Time frame:** Filter incidents which were open in a certain timeframe.

+

+

+

+

+## Interacting with Incidents

+

+An incident has a status, assignee and severity.

+These can be set in the Incidents page, or from an alert in integrations that support alert actions.

+

+- **Incident status**: Will be set to `open` by default, and can be changed to `Acknowledged` and back to `Open`. When an alert is manually or automatically set as `Resolved`, it will close and will no longer be modified.

+- **Incident assignee**: An incident can be assigned to any user on the team, and they will be notified.

+ - If you assign an incident to a user, it is recommended to leave the incident `Open` until the user changes status to `Acknowledged`.

+- **Incident severity**: Severity of an incident can be Low, Normal, High or Critical. By default, model errors are set to Critical, test failures set to High and warnings are marked as Normal, but the severity can be changed manually. _Coming soon_ : Severity will be automated by an analysis of the impacted assets.

+

+ +

+ +

+ +

+ +

+  +

+  +

+  +

+  +

+ +

+

+## How to set up a data content metric?

+

+The monitored metrics are set up in the code, in a way similar to dbt tests.

+

+

+

+

+No mandatory configuration, however it is highly recommended to configure a `timestamp_column`.

+

+{/* prettier-ignore */}

+

+

+

+## How to set up a data content metric?

+

+The monitored metrics are set up in the code, in a way similar to dbt tests.

+

+

+

+

+No mandatory configuration, however it is highly recommended to configure a `timestamp_column`.

+

+{/* prettier-ignore */}

+

+

+ data_tests:

+ -- elementary.collect_metrics:

+ arguments:

+ timestamp_column: column name

+ time_bucket:

+ period: [hour | day]

+ count: int

+ dimensions: sql expression

+ metrics: monitors list

+ name: string

+ type: monitor type

+ columns: list

+ where_expression: sql expression

+

+

+

+ +

+

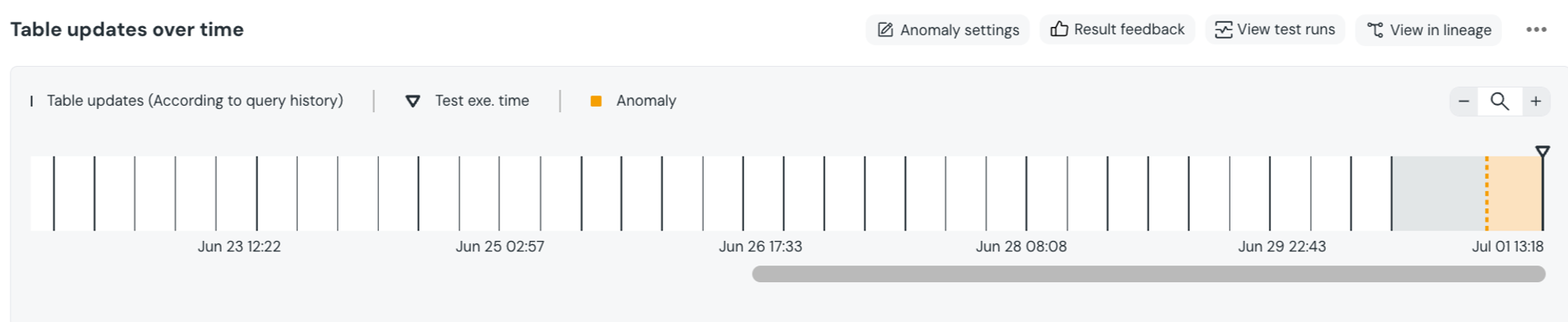

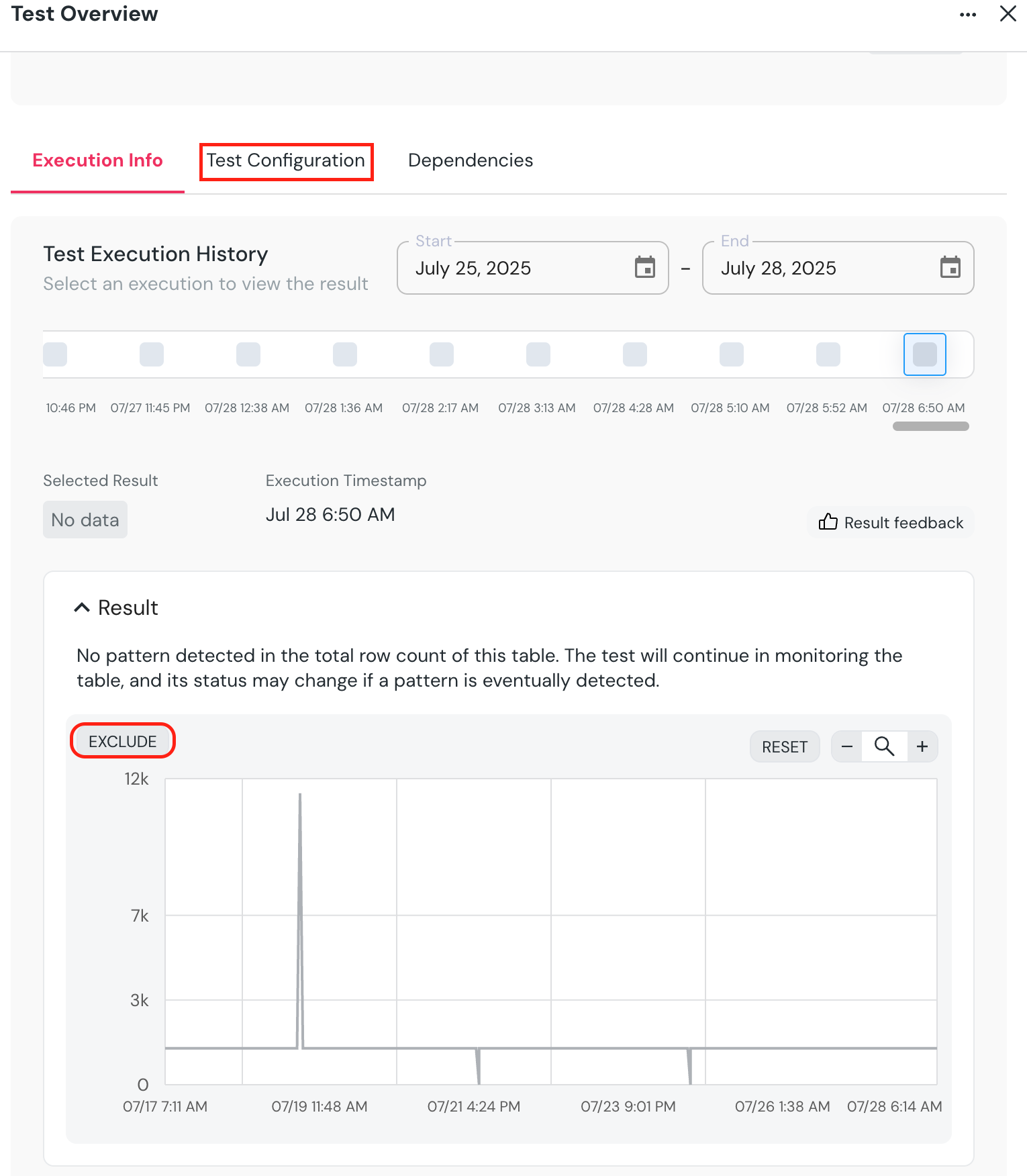

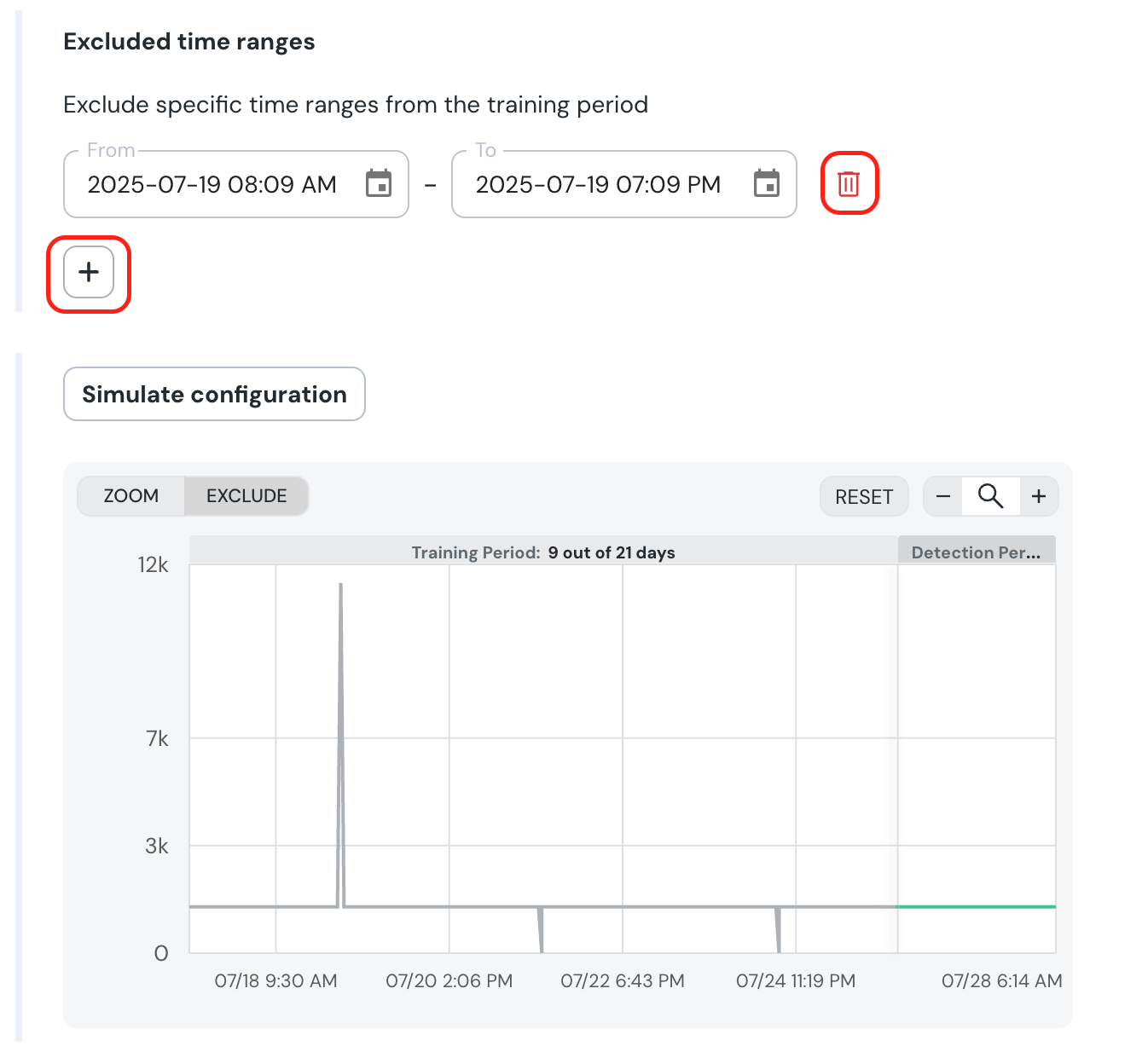

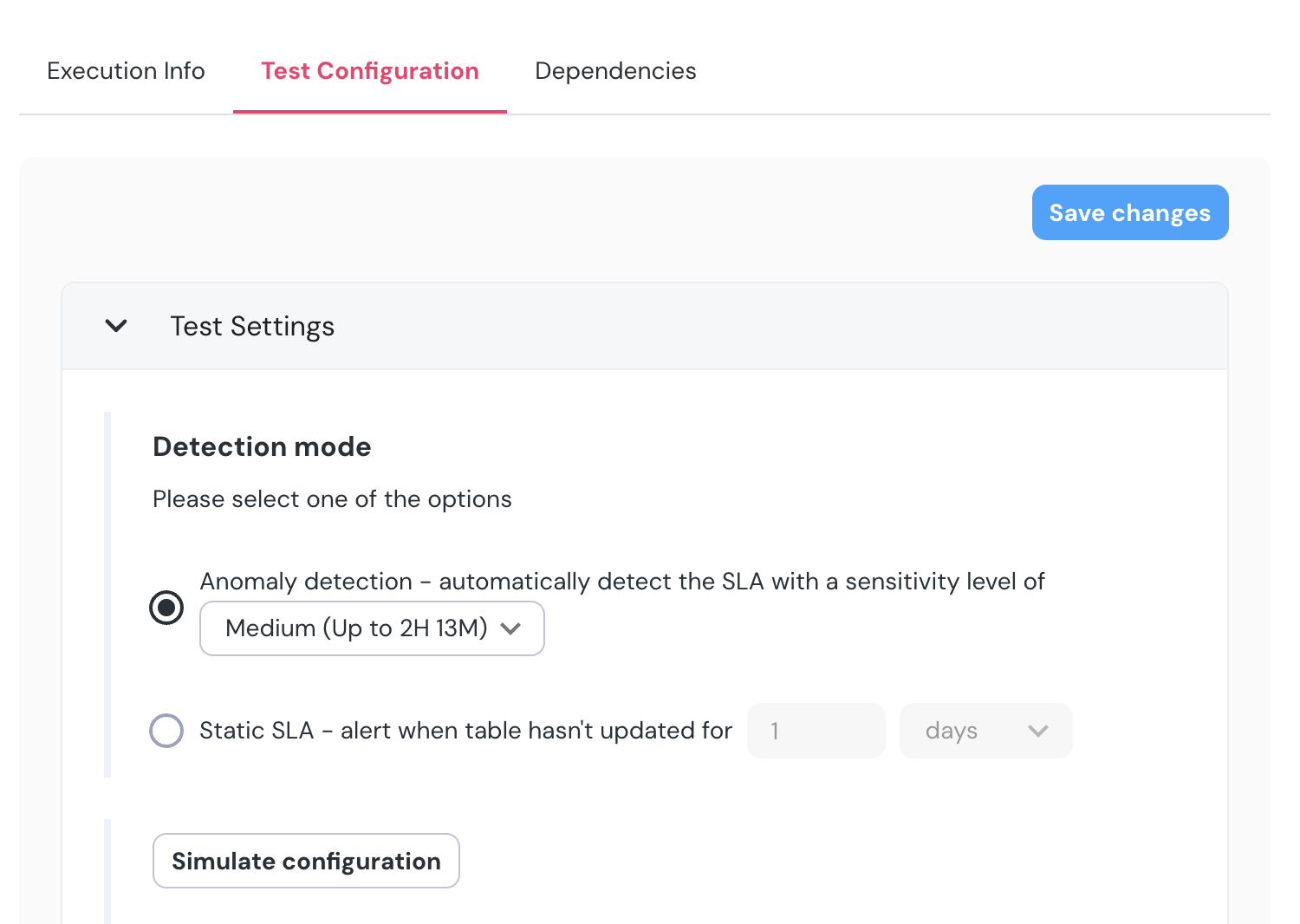

+- Go down the chart, click `EXCLUDE`, and mark the section you want to exclude.

+ - A confirmation dialog will appear, showing the exact samples which will be excluded.

+- On confirmation, the new excluded time range will appear under **`Excluded time ranges`.** You can modify or remove it, and you can also add new ranges using the + sign if you prefer.

+- To test the configuration, click “Simulate configuration”.

+

+

+

+

+- Go down the chart, click `EXCLUDE`, and mark the section you want to exclude.

+ - A confirmation dialog will appear, showing the exact samples which will be excluded.

+- On confirmation, the new excluded time range will appear under **`Excluded time ranges`.** You can modify or remove it, and you can also add new ranges using the + sign if you prefer.

+- To test the configuration, click “Simulate configuration”.

+

+ +

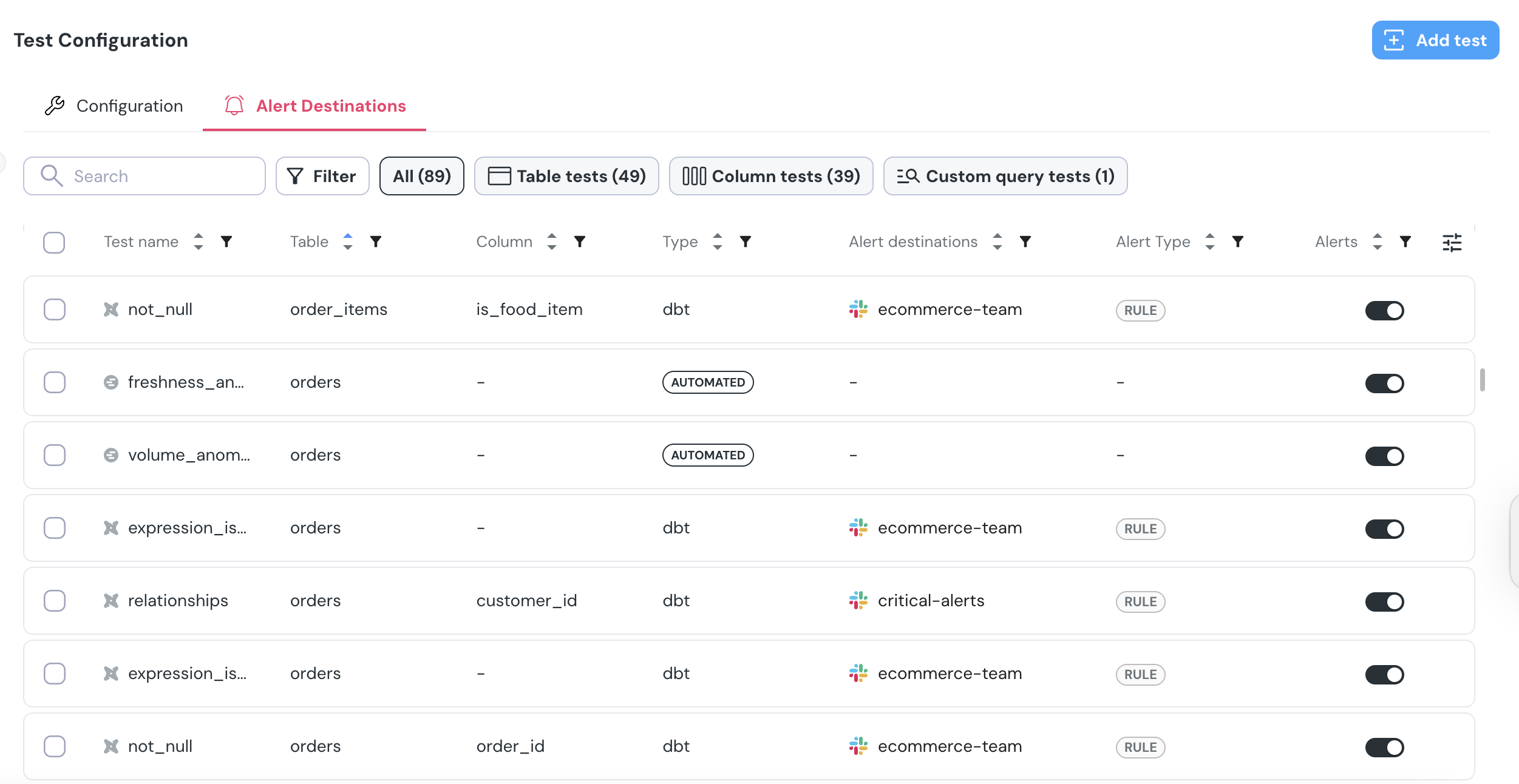

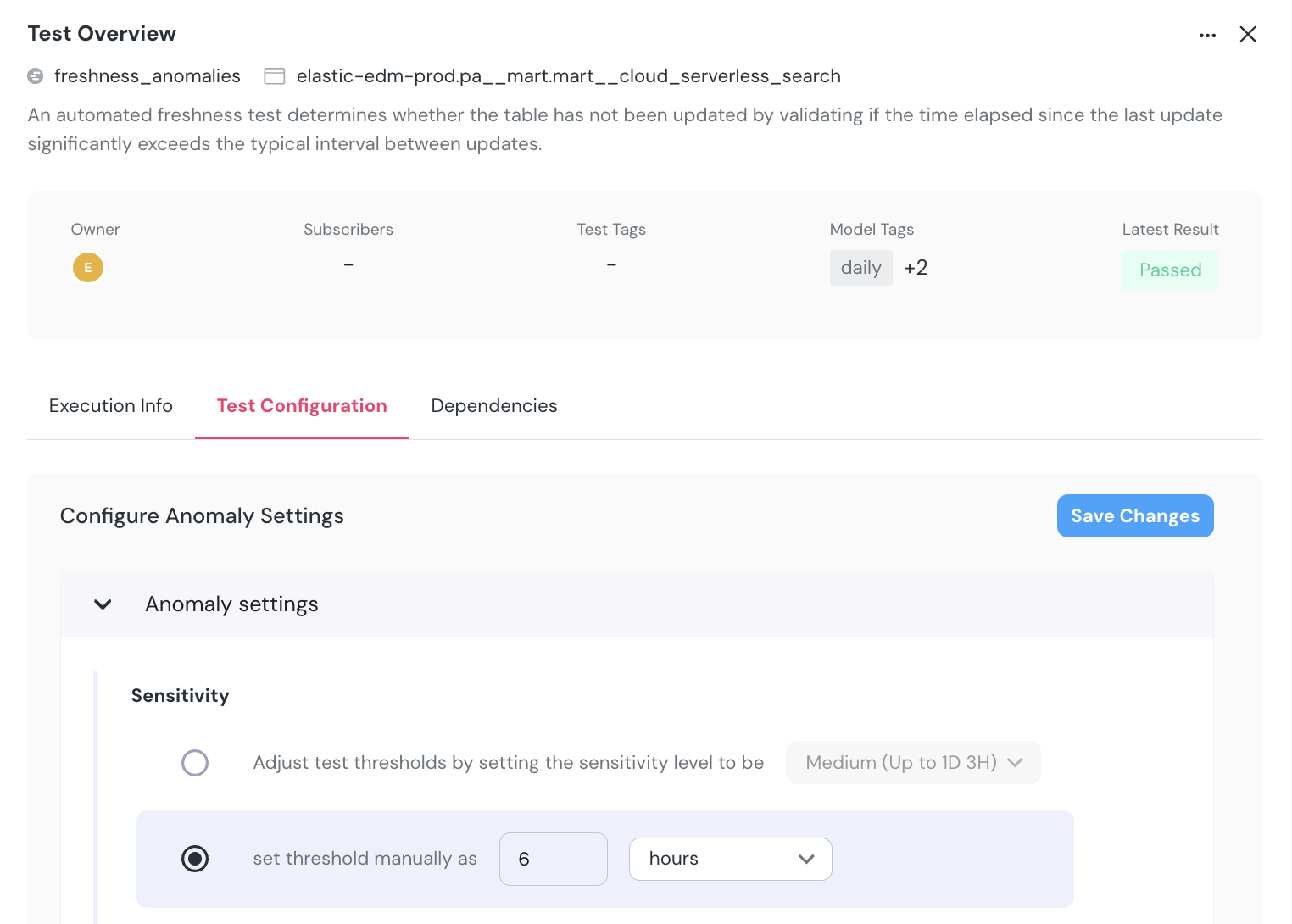

+## Remove anomaly detection monitors

+

+There are two ways to delete monitors from the UI.

+- Test configuration page - Choose one or more tests, and an option to delete them will be available at the bottom of the page.

+- Test results page - Press the `...` button on the top right of the test result and then `Delete test`.

+

+

+

+## Supported settings

+

+#### All monitors

+

+

+

+## Remove anomaly detection monitors

+

+There are two ways to delete monitors from the UI.

+- Test configuration page - Choose one or more tests, and an option to delete them will be available at the bottom of the page.

+- Test results page - Press the `...` button on the top right of the test result and then `Delete test`.

+

+

+

+## Supported settings

+

+#### All monitors

+

+ +

+  +

+  +

+  +

+  +

+  +

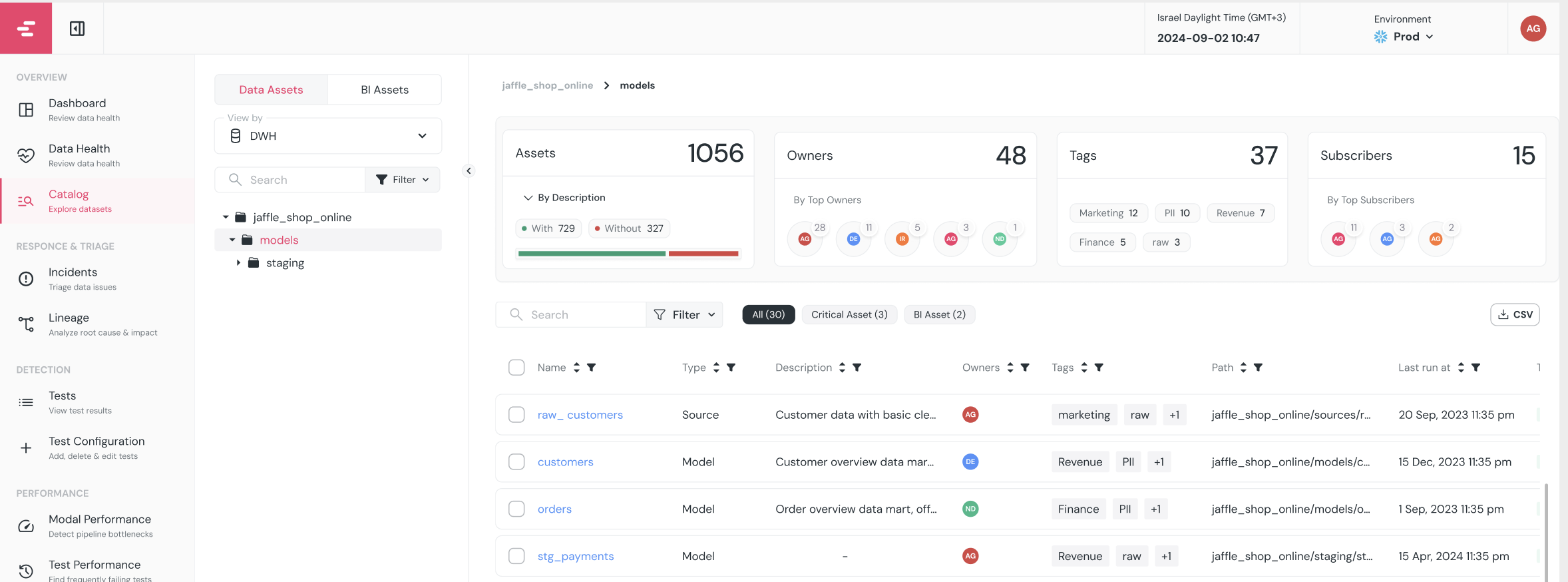

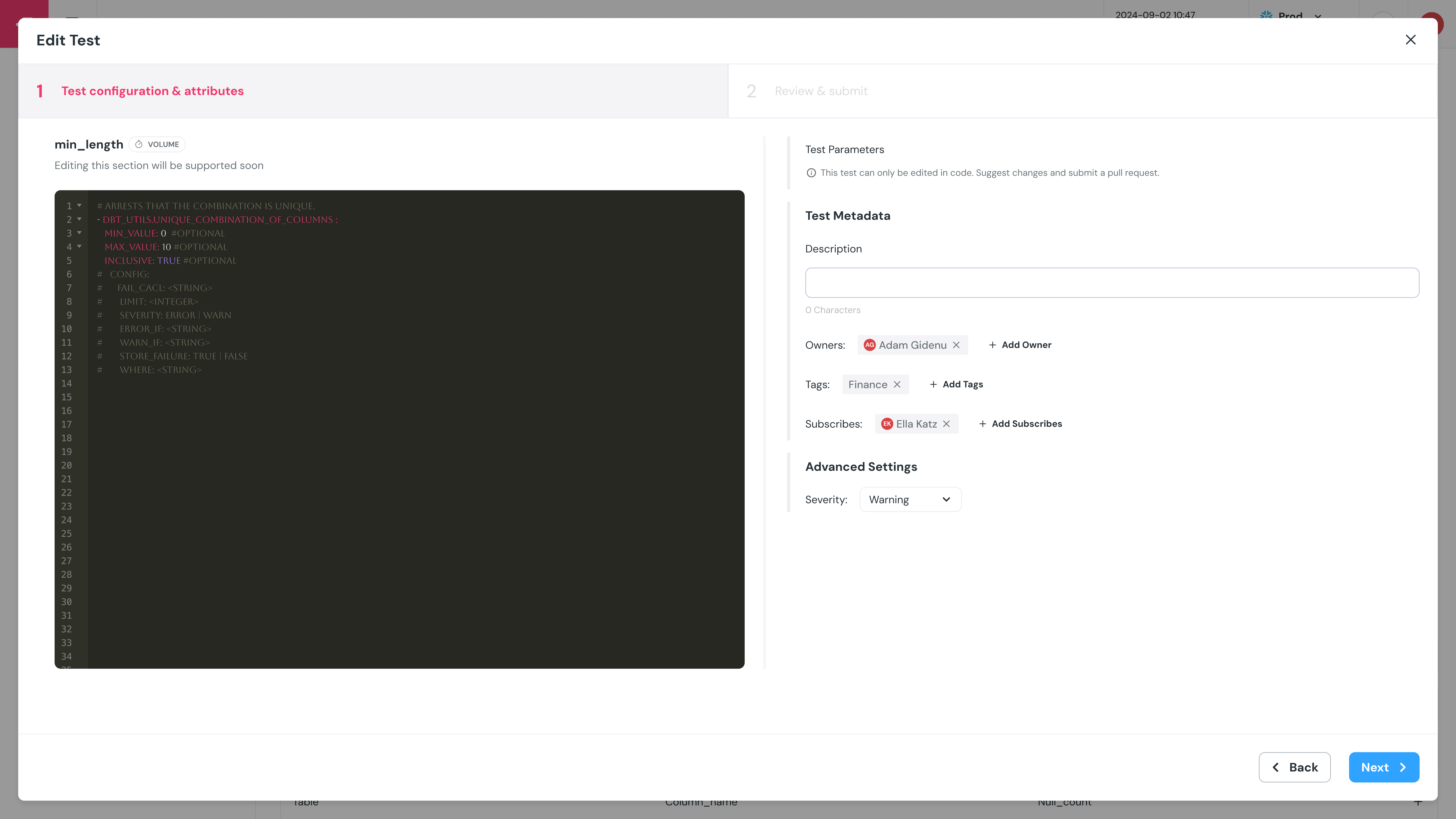

+### Editing Metadata

+You can edit metadata directly in the catalog. This includes updating model and column descriptions, assigning owners, and applying tags.

+To make updates more efficient, the catalog supports bulk editing. For example, you can assign an owner to multiple models at once or apply a shared tag to a group of assets.

+All metadata edits are synced back into your dbt project as a pull request, so documentation stays version-controlled and part of your workflow.

+

+Need to display additional metadata fields from your dbt `meta` config? Reach out to our team to customize the catalog with fields that best fit your data workflows.

+

+### Marking Critical Assets

+You can mark assets as critical directly in the catalog to highlight their importance. Critical assets are prioritized in monitoring and surfaced in governance views to help teams focus on what matters most. Read more [here](/cloud/features/data-governance/critical_assets).

+

+

+### Editing Metadata

+You can edit metadata directly in the catalog. This includes updating model and column descriptions, assigning owners, and applying tags.

+To make updates more efficient, the catalog supports bulk editing. For example, you can assign an owner to multiple models at once or apply a shared tag to a group of assets.

+All metadata edits are synced back into your dbt project as a pull request, so documentation stays version-controlled and part of your workflow.

+

+Need to display additional metadata fields from your dbt `meta` config? Reach out to our team to customize the catalog with fields that best fit your data workflows.

+

+### Marking Critical Assets

+You can mark assets as critical directly in the catalog to highlight their importance. Critical assets are prioritized in monitoring and surfaced in governance views to help teams focus on what matters most. Read more [here](/cloud/features/data-governance/critical_assets).

+ +

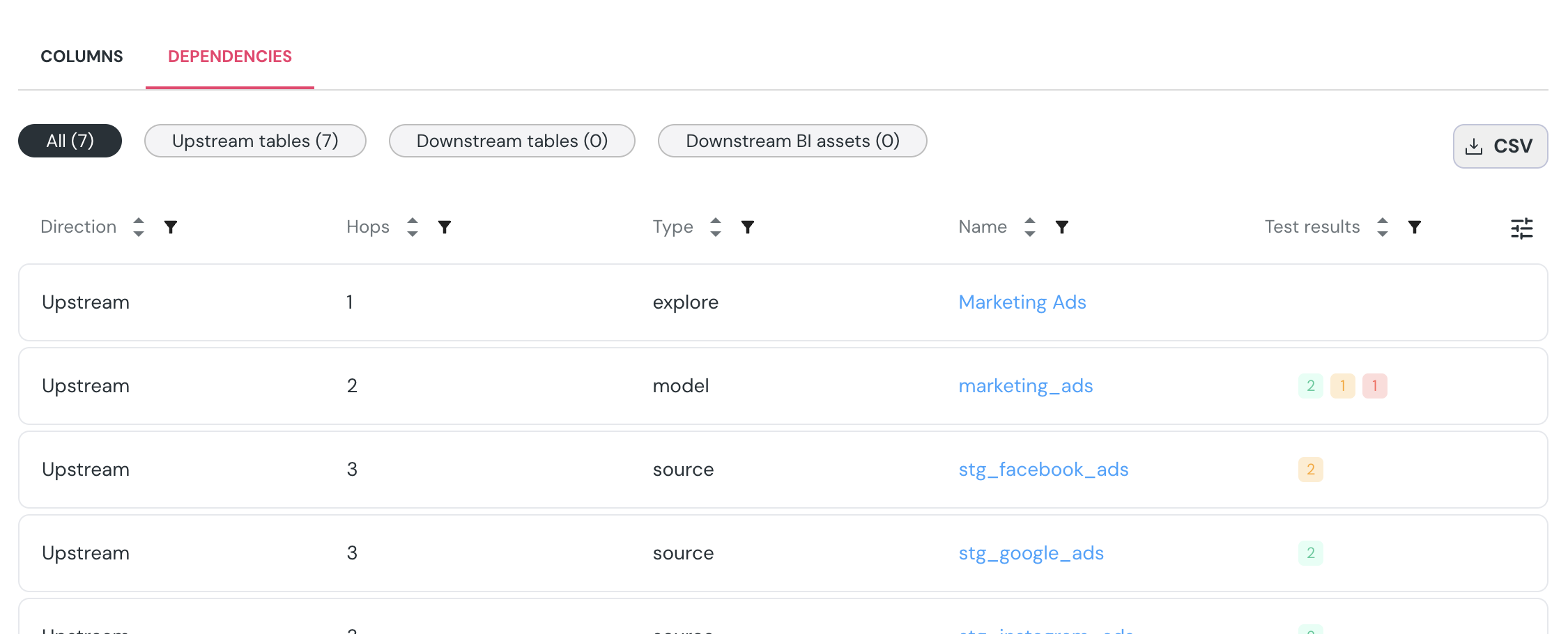

+### Lineage & Dependency Export

+View upstream and downstream dependencies for each dataset, and export the full dependency list as a CSV for further analysis or documentation.

+

+

+### Lineage & Dependency Export

+View upstream and downstream dependencies for each dataset, and export the full dependency list as a CSV for further analysis or documentation.

+ +

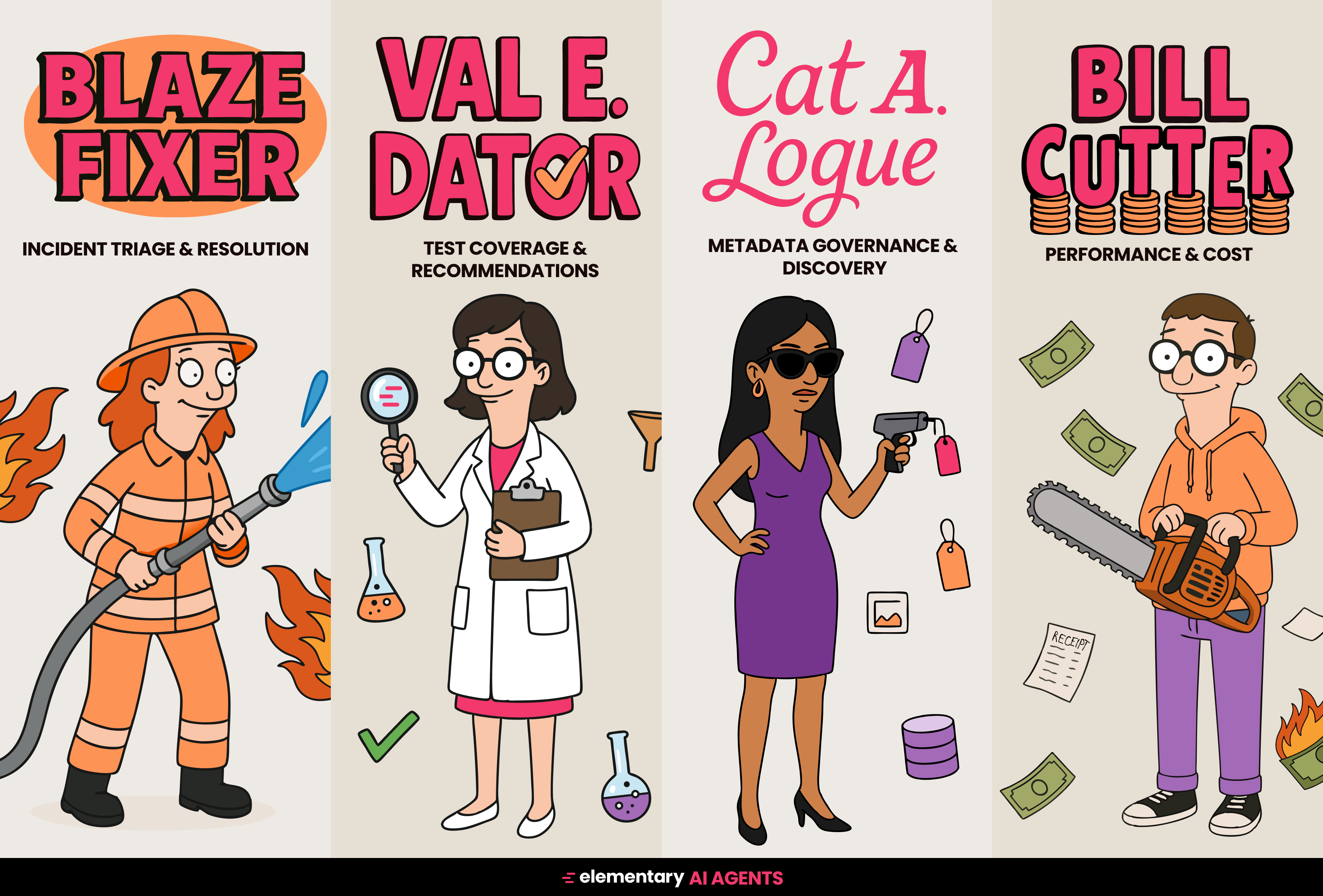

+### AI Agents for Discovery and Governance

+Elementary includes two agents that use the catalog to support your team:

+- The [Catalog agent](/cloud/ai-agents/catalog-agent) helps users find relevant models, columns, or metrics using natural language. This is especially helpful for new team members or business users who are less familiar with your dbt structure.

+- The [Governance agent](/cloud/ai-agents/governance-agent) identifies missing documentation, unowned assets, or inconsistent tags. It surfaces these issues automatically and can suggest actions to improve coverage.

+These agents run continuously and help keep the catalog useful and complete without requiring constant manual work.

diff --git a/docs/cloud/features/collaboration-and-communication/data-health.mdx b/docs/cloud/features/collaboration-and-communication/data-health.mdx

new file mode 100644

index 000000000..30b044304

--- /dev/null

+++ b/docs/cloud/features/collaboration-and-communication/data-health.mdx

@@ -0,0 +1,74 @@

+---

+title: Data Health Dashboard

+sidebarTitle: Data Health Dashboard

+---

+

+import DataHealthIntro from '/snippets/cloud/features/data-health/data-health-intro.mdx';

+import DataQualityDimensions from '/snippets/cloud/features/data-health/data-quality-dimensions.mdx';

+

+

+

+### AI Agents for Discovery and Governance

+Elementary includes two agents that use the catalog to support your team:

+- The [Catalog agent](/cloud/ai-agents/catalog-agent) helps users find relevant models, columns, or metrics using natural language. This is especially helpful for new team members or business users who are less familiar with your dbt structure.

+- The [Governance agent](/cloud/ai-agents/governance-agent) identifies missing documentation, unowned assets, or inconsistent tags. It surfaces these issues automatically and can suggest actions to improve coverage.

+These agents run continuously and help keep the catalog useful and complete without requiring constant manual work.

diff --git a/docs/cloud/features/collaboration-and-communication/data-health.mdx b/docs/cloud/features/collaboration-and-communication/data-health.mdx

new file mode 100644

index 000000000..30b044304

--- /dev/null

+++ b/docs/cloud/features/collaboration-and-communication/data-health.mdx

@@ -0,0 +1,74 @@

+---

+title: Data Health Dashboard

+sidebarTitle: Data Health Dashboard

+---

+

+import DataHealthIntro from '/snippets/cloud/features/data-health/data-health-intro.mdx';

+import DataQualityDimensions from '/snippets/cloud/features/data-health/data-quality-dimensions.mdx';

+

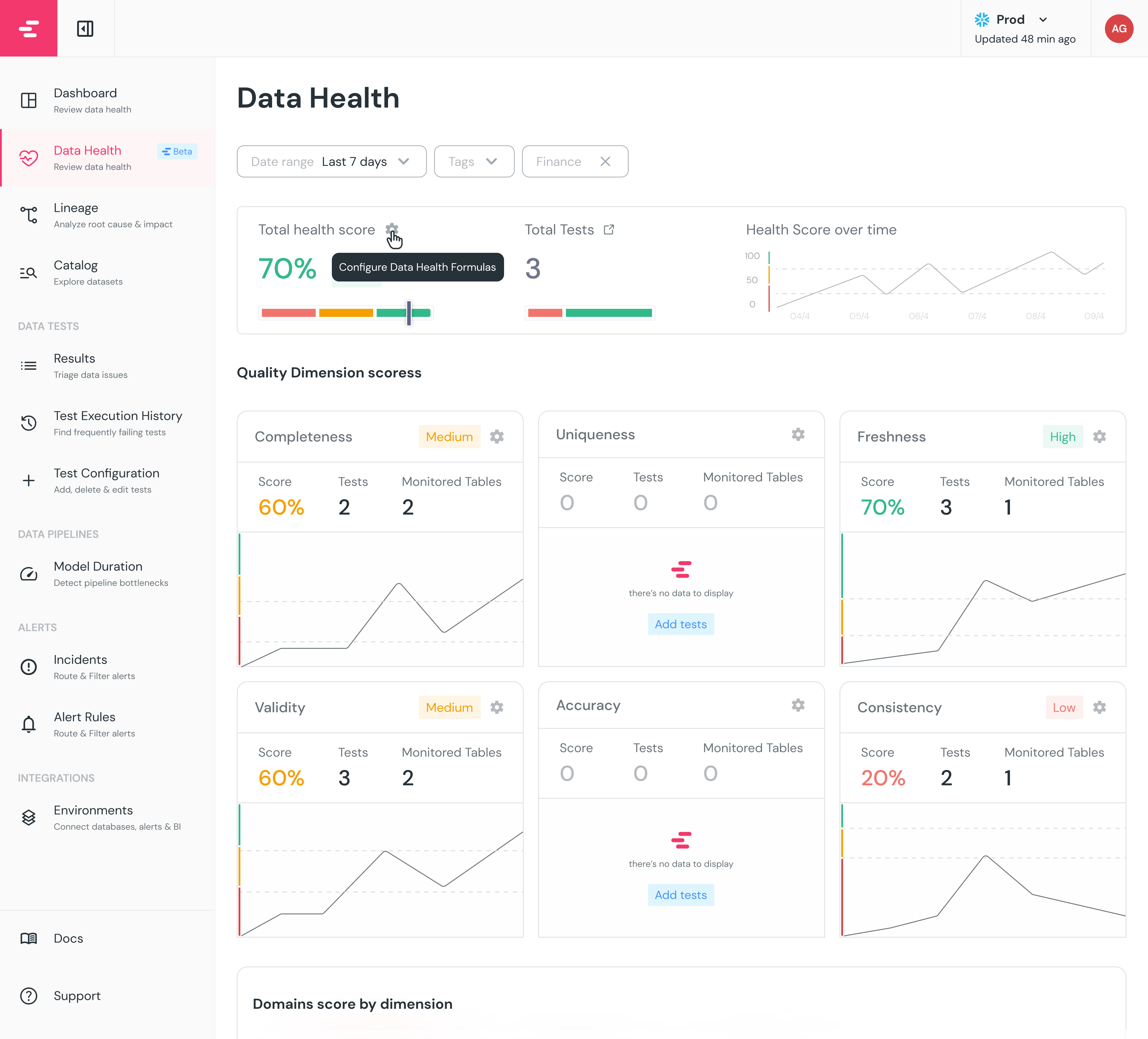

+ +

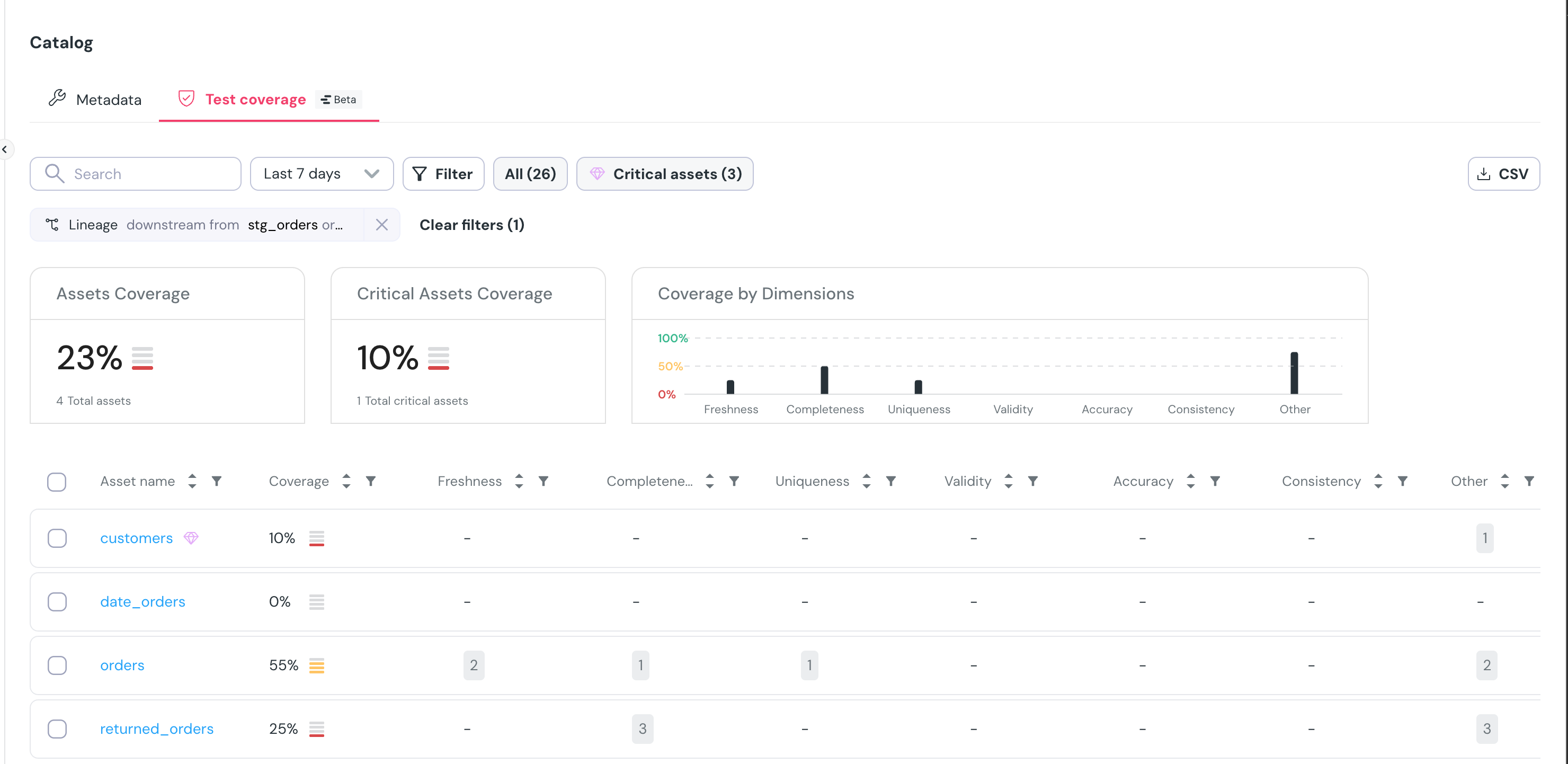

+The dashboard is based on the 6 [Data Quality Dimensions](/cloud/features/collaboration-and-communication/data-quality-dimensions#data-quality-dimensions):

+

+

+

+The dashboard is based on the 6 [Data Quality Dimensions](/cloud/features/collaboration-and-communication/data-quality-dimensions#data-quality-dimensions):

+

+ +

+### Critical assets alerts score

+

+To focus on what matters most, see the top right of the screen to filter on your [critical assets](/cloud/features/data-governance/critical_assets).

+

+### Can I customize the quality dimension mapping of my tests?

+

+Of course!

+Each test you run, whether it's a generic or a custom test, can be mapped to one of the 6 quality dimensions.

+The way to do so is to add `quality_dimension` to the test definition in your dbt project:

+

+

+

+### Critical assets alerts score

+

+To focus on what matters most, see the top right of the screen to filter on your [critical assets](/cloud/features/data-governance/critical_assets).

+

+### Can I customize the quality dimension mapping of my tests?

+

+Of course!

+Each test you run, whether it's a generic or a custom test, can be mapped to one of the 6 quality dimensions.

+The way to do so is to add `quality_dimension` to the test definition in your dbt project:

+

+ +

\ No newline at end of file

diff --git a/docs/cloud/features/collaboration-and-communication/data-quality-dimensions.mdx b/docs/cloud/features/collaboration-and-communication/data-quality-dimensions.mdx

new file mode 100644

index 000000000..c31a723cd

--- /dev/null

+++ b/docs/cloud/features/collaboration-and-communication/data-quality-dimensions.mdx

@@ -0,0 +1,70 @@

+---

+title: Data Quality Dimensions

+sidebarTitle: Data Quality Dimensions

+---

+

+import DataHealthIntro from '/snippets/cloud/features/data-health/data-health-intro.mdx';

+import DataQualityDimensions from '/snippets/cloud/features/data-health/data-quality-dimensions.mdx';

+

+

+

+## Measuring data quality

+

+

+

\ No newline at end of file

diff --git a/docs/cloud/features/collaboration-and-communication/data-quality-dimensions.mdx b/docs/cloud/features/collaboration-and-communication/data-quality-dimensions.mdx

new file mode 100644

index 000000000..c31a723cd

--- /dev/null

+++ b/docs/cloud/features/collaboration-and-communication/data-quality-dimensions.mdx

@@ -0,0 +1,70 @@

+---

+title: Data Quality Dimensions

+sidebarTitle: Data Quality Dimensions

+---

+

+import DataHealthIntro from '/snippets/cloud/features/data-health/data-health-intro.mdx';

+import DataQualityDimensions from '/snippets/cloud/features/data-health/data-quality-dimensions.mdx';

+

+

+

+## Measuring data quality

+

+ +

+  +

+

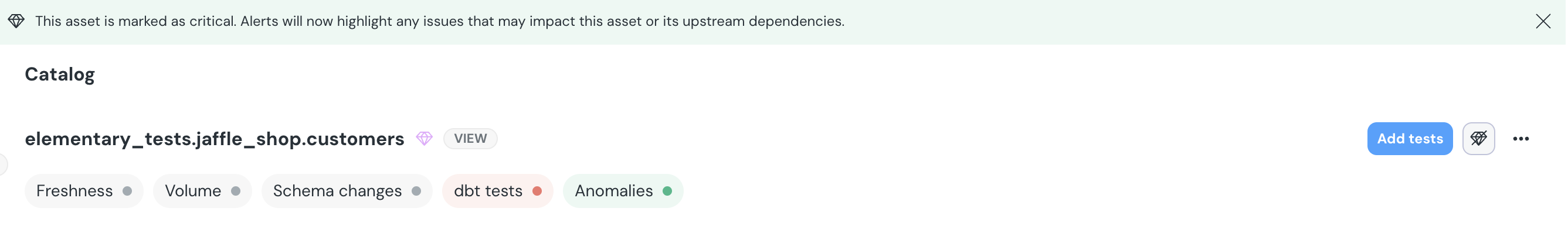

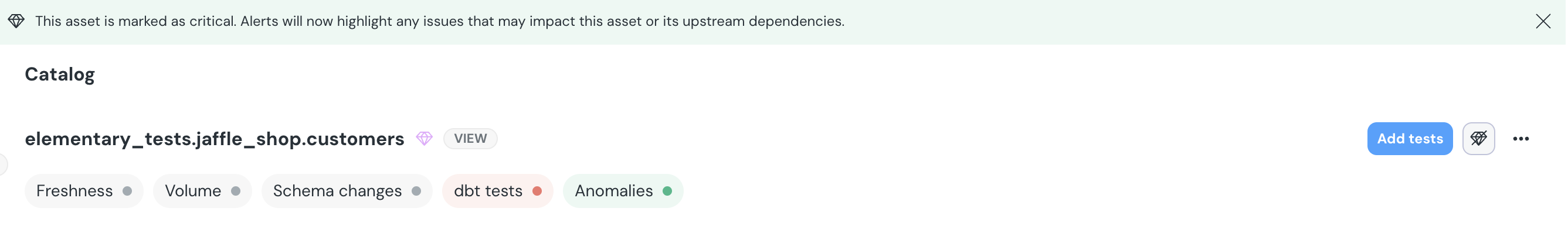

+## What Should Be Set as a Critical Asset?

+

+You should mark an asset as **critical** if:

+

+- It directly impacts key **business reports, dashboards, or decision-making tools**.

+- It serves as an essential **upstream dependency** for other important data models.

+- It is frequently used by **multiple teams or stakeholders**.

+- Its failure or inaccuracy could cause **significant business or operational risks**

+

+## Why Should I Define My Critical Assets?

+

+Defining your **critical assets** helps you:

+

+- **Quickly identify and respond to issues**– Get notified when upstream problems may impact your critical assets, ensuring faster resolution and minimal disruption.

+- **Prioritize issue resolution**– Focus on addressing incidents that have the greatest impact on business operations, dashboards, and decision-making.

+- **Improve data reliability**– Ensure key stakeholders have access to accurate and up-to-date data by monitoring critical assets more effectively.

+- **Enhance observability**– Gain better visibility into the health of your most important assets through prioritized monitoring and alerting.

+

+## How to Set a Critical Asset?

+

+You can mark an asset as **critical** directly in the UI:

+

+- **From the Catalog Page** – Navigate to the asset in the catalog and click the **diamond icon** to **"Set as Critical Asset."**

+- **From the Lineage View** – Right-click on the node representing the asset and select **"Set as Critical Asset"** from the list.

+

+Once an asset is marked as critical, **alerts will now highlight any issues that may impact this asset or its upstream dependencies, ensuring prioritization.**

+

+

+

+## Where Can You See Critical Assets?

+

+Once an asset is marked as **critical**, you will be able to:

+

+- **Identify it in the UI**, where it will be visually highlighted.

+- **Receive alerts** when upstream issues may impact the critical asset.

+- **Filter incidents** by their impact on critical assets.

+- **Track health scores of critical data assets** over time through dashboard monitoring.

+

+

+

+

+## What Should Be Set as a Critical Asset?

+

+You should mark an asset as **critical** if:

+

+- It directly impacts key **business reports, dashboards, or decision-making tools**.

+- It serves as an essential **upstream dependency** for other important data models.

+- It is frequently used by **multiple teams or stakeholders**.

+- Its failure or inaccuracy could cause **significant business or operational risks**

+

+## Why Should I Define My Critical Assets?

+

+Defining your **critical assets** helps you:

+

+- **Quickly identify and respond to issues**– Get notified when upstream problems may impact your critical assets, ensuring faster resolution and minimal disruption.

+- **Prioritize issue resolution**– Focus on addressing incidents that have the greatest impact on business operations, dashboards, and decision-making.

+- **Improve data reliability**– Ensure key stakeholders have access to accurate and up-to-date data by monitoring critical assets more effectively.

+- **Enhance observability**– Gain better visibility into the health of your most important assets through prioritized monitoring and alerting.

+

+## How to Set a Critical Asset?

+

+You can mark an asset as **critical** directly in the UI:

+

+- **From the Catalog Page** – Navigate to the asset in the catalog and click the **diamond icon** to **"Set as Critical Asset."**

+- **From the Lineage View** – Right-click on the node representing the asset and select **"Set as Critical Asset"** from the list.

+

+Once an asset is marked as critical, **alerts will now highlight any issues that may impact this asset or its upstream dependencies, ensuring prioritization.**

+

+

+

+## Where Can You See Critical Assets?

+

+Once an asset is marked as **critical**, you will be able to:

+

+- **Identify it in the UI**, where it will be visually highlighted.

+- **Receive alerts** when upstream issues may impact the critical asset.

+- **Filter incidents** by their impact on critical assets.

+- **Track health scores of critical data assets** over time through dashboard monitoring.

+

+ +

+By carefully selecting which assets to mark as critical, you can quickly detect and prioritize issues that impact your most important data, reducing disruptions, improving reliability, and keeping key stakeholders informed.

diff --git a/docs/cloud/features/data-governance/manage-metadata.mdx b/docs/cloud/features/data-governance/manage-metadata.mdx

new file mode 100644

index 000000000..e5160de80

--- /dev/null

+++ b/docs/cloud/features/data-governance/manage-metadata.mdx

@@ -0,0 +1,78 @@

+---

+title: "Manage metadata"

+---

+

+Manage your metadata directly from the [Elementary Catalog](/cloud/features/collaboration-and-communication/catalog), with no need to update code manually. This streamlines governance workflows, reduces manual effort, and improves visibility and consistency across your assets.

+

+From a single interface, you can view and filter assets, edit metadata fields individually or in bulk, edit metadata for related assets and even use [AI to generate high-quality descriptions](/cloud/features/data-governance/ai-descriptions)—saving time and enhancing data discoverability. All changes are version-controlled via pull requests to keep your dbt project in sync.

+

+To get the most out of this feature, check out our [Data Governance Best Practices](/cloud/best-practices/governance-for-observability) guide.

+

+

+

+## Overview

+

+Managing metadata such as owners, tags, descriptions, and critical asset status is essential for effective data governance. With Elementary you can:

+

+- View all your assets and their metadata in a centralized table

+- Identify governance gaps and inconsistencies

+- Edit metadata fields individually or in bulk

+- Generate high-quality asset descriptions using AI

+- Create pull requests for metadata changes directly from the UI

+- Track pending changes awaiting approval

+

+### Supported Metadata Fields

+

+Elementary supports editing the following metadata fields:

+

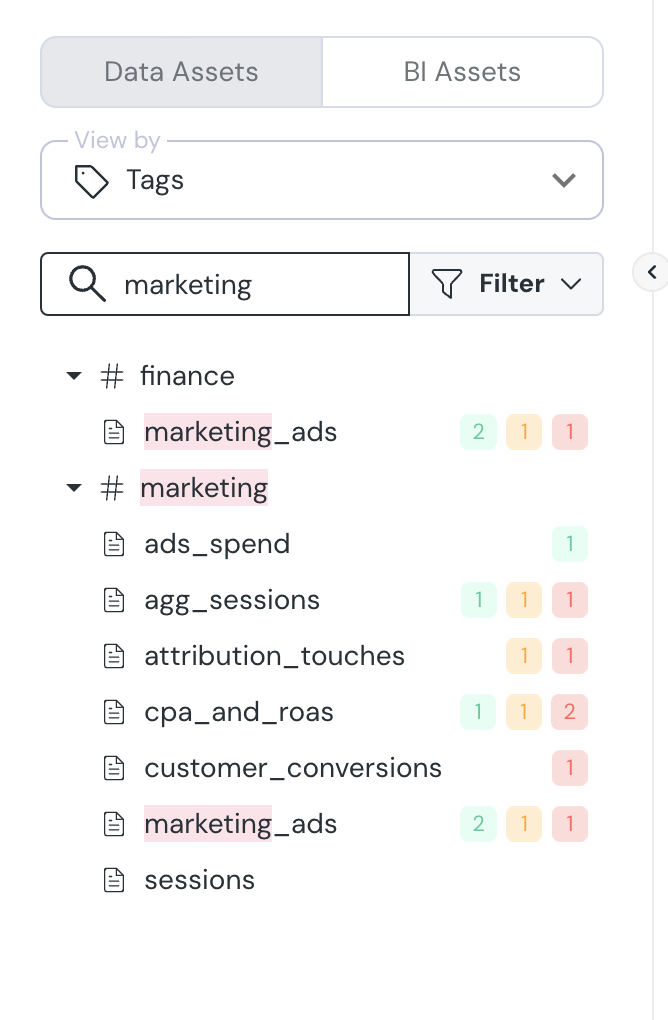

+- **Tags** - Categorize and organize assets

+- **Owners** - Assign responsibility for assets

+- **Descriptions** - Provide context about asset purpose and usage

+- **Critical Assets** - Mark high-priority assets

+

+Coming soon:

+

+- **Subscribers** - Add users who should be notified about changes

+- **Custom Metadata Fields** - Edit organization-specific metadata

+

+## Editing Metadata

+

+Elementary allows you to edit metadata for individual assets or in bulk, with changes reflected in your dbt project through pull requests.

+

+### How to Edit Metadata

+

+To edit metadata:

+

+1. In the Catalog page select any folder for getting into the assets Table

+2. Select the assets you want to modify

+3. Choose which metadata field to edit and click on the right field (Tags, Owners, Description, etc.)

+4. Make your changes in the editing wizard

+5. Review the changes summary

+6. Click "Submit Pull Request" to submit your changes

+

+

+### Editing Metadata for Related Assets

+From the Dependencies tab in the Catalog, you can efficiently apply metadata to all upstream and downstream assets of a selected asset in a single action.

+

+

+

+By carefully selecting which assets to mark as critical, you can quickly detect and prioritize issues that impact your most important data, reducing disruptions, improving reliability, and keeping key stakeholders informed.

diff --git a/docs/cloud/features/data-governance/manage-metadata.mdx b/docs/cloud/features/data-governance/manage-metadata.mdx

new file mode 100644

index 000000000..e5160de80

--- /dev/null

+++ b/docs/cloud/features/data-governance/manage-metadata.mdx

@@ -0,0 +1,78 @@

+---

+title: "Manage metadata"

+---

+

+Manage your metadata directly from the [Elementary Catalog](/cloud/features/collaboration-and-communication/catalog), with no need to update code manually. This streamlines governance workflows, reduces manual effort, and improves visibility and consistency across your assets.

+

+From a single interface, you can view and filter assets, edit metadata fields individually or in bulk, edit metadata for related assets and even use [AI to generate high-quality descriptions](/cloud/features/data-governance/ai-descriptions)—saving time and enhancing data discoverability. All changes are version-controlled via pull requests to keep your dbt project in sync.

+

+To get the most out of this feature, check out our [Data Governance Best Practices](/cloud/best-practices/governance-for-observability) guide.

+

+

+

+## Overview

+

+Managing metadata such as owners, tags, descriptions, and critical asset status is essential for effective data governance. With Elementary you can:

+

+- View all your assets and their metadata in a centralized table

+- Identify governance gaps and inconsistencies

+- Edit metadata fields individually or in bulk

+- Generate high-quality asset descriptions using AI

+- Create pull requests for metadata changes directly from the UI

+- Track pending changes awaiting approval

+

+### Supported Metadata Fields

+

+Elementary supports editing the following metadata fields:

+

+- **Tags** - Categorize and organize assets

+- **Owners** - Assign responsibility for assets

+- **Descriptions** - Provide context about asset purpose and usage

+- **Critical Assets** - Mark high-priority assets

+

+Coming soon:

+

+- **Subscribers** - Add users who should be notified about changes

+- **Custom Metadata Fields** - Edit organization-specific metadata

+

+## Editing Metadata

+

+Elementary allows you to edit metadata for individual assets or in bulk, with changes reflected in your dbt project through pull requests.

+

+### How to Edit Metadata

+

+To edit metadata:

+

+1. In the Catalog page select any folder for getting into the assets Table

+2. Select the assets you want to modify

+3. Choose which metadata field to edit and click on the right field (Tags, Owners, Description, etc.)

+4. Make your changes in the editing wizard

+5. Review the changes summary

+6. Click "Submit Pull Request" to submit your changes

+

+

+### Editing Metadata for Related Assets

+From the Dependencies tab in the Catalog, you can efficiently apply metadata to all upstream and downstream assets of a selected asset in a single action.

+

+ +

+## Governance Dashboard (Coming soon)

+

+The Governance Dashboard provides insights into your data governance structure, helping you identify gaps and inconsistencies at a glance.

+

+

+

+## Governance Dashboard (Coming soon)

+

+The Governance Dashboard provides insights into your data governance structure, helping you identify gaps and inconsistencies at a glance.

+

+ +

+### Dashboard Features

+

+- **Tag Distribution Analysis** - Visualize tag coverage across assets and identify areas needing better categorization

+- **Ownership Coverage** - Track asset ownership distribution and highlight resources requiring owner assignment

+- **Description Status** - Monitor assets with missing or incomplete descriptions to improve documentation

+- **Critical Asset Tracking** - Get an overview of your business-critical data assets and their governance status

+- **Dynamic Filtering** - Filter the Assets Table in real-time based on dashboard selections and metrics

diff --git a/docs/cloud/features/data-lineage/column-level-lineage.mdx b/docs/cloud/features/data-lineage/column-level-lineage.mdx

new file mode 100644

index 000000000..3b6479e6e

--- /dev/null

+++ b/docs/cloud/features/data-lineage/column-level-lineage.mdx

@@ -0,0 +1,46 @@

+---

+title: Column-Level Lineage

+sidebarTitle: Column level lineage

+---

+

+The table nodes in Elementary lineage can be expanded to show the columns. When you

+select a column, the lineage of that specific column will be highlighted.

+

+Column-level lineage is useful for answering questions such as:

+

+* Which downstream columns are actually impacted by a data quality issue?

+

+* Can we deprecate or rename a column?

+

+* Will changing this column impact a dashboard?

+

+### Filter and highlight columns path

+

+To help navigate graphs with large amount of columns per table, use the `...` menu to the right of the column:

+

+* **Filter**: Will show a graph of only the selected column and its dependencies.

+

+* **Highlight**: Will highlight only the selected column and its dependencies.

+

+

+

+### Column-level lineage generation

+

+Elementary parses SQL queries to determine the dependencies between columns.

+Note that the lineage is only of the columns that directly contribute data to the column.

+

+For example, for the query:

+

+```sql

+create or replace table db.schema.users as

+select

+ user_name,

+ count(distinct login_time) as total_logins

+from db.schema.login_events

+where user_type != 'test_user'

+```

+

+The direct dependency of `total_logins` is `login_events.login_time`.

+The column `login_events.user_type` filter the data of `total_logins`, but it is an indirect dependency and will not show in lineage.

+

+If you want a different approach in your Elementary Cloud instance - contact us.

\ No newline at end of file

diff --git a/docs/cloud/features/data-lineage/exposures-lineage.mdx b/docs/cloud/features/data-lineage/exposures-lineage.mdx

new file mode 100644

index 000000000..74c887b3a

--- /dev/null

+++ b/docs/cloud/features/data-lineage/exposures-lineage.mdx

@@ -0,0 +1,66 @@

+---

+title: Lineage to Downstream Dashboards and Tools

+sidebarTitle: Lineage to BI

+---

+

+import BiCards from '/snippets/cloud/integrations/cards-groups/bi-cards.mdx';

+

+

+

+Some of your data is used downstream in dashboards, applications, data science pipelines, reverse ETLs, etc.

+These downstream data consumers are called _exposures_.

+

+The Elementary lineage graph presents downstream exposures of two origins:

+

+1. Elementary automated BI integrations

+2. Exposures configured in your dbt project. Read about [how to configure exposures](https://docs.getdbt.com/docs/build/exposures) in code.

+

+

+

+### Dashboard Features

+

+- **Tag Distribution Analysis** - Visualize tag coverage across assets and identify areas needing better categorization

+- **Ownership Coverage** - Track asset ownership distribution and highlight resources requiring owner assignment

+- **Description Status** - Monitor assets with missing or incomplete descriptions to improve documentation

+- **Critical Asset Tracking** - Get an overview of your business-critical data assets and their governance status

+- **Dynamic Filtering** - Filter the Assets Table in real-time based on dashboard selections and metrics

diff --git a/docs/cloud/features/data-lineage/column-level-lineage.mdx b/docs/cloud/features/data-lineage/column-level-lineage.mdx

new file mode 100644

index 000000000..3b6479e6e

--- /dev/null

+++ b/docs/cloud/features/data-lineage/column-level-lineage.mdx

@@ -0,0 +1,46 @@

+---

+title: Column-Level Lineage

+sidebarTitle: Column level lineage

+---

+

+The table nodes in Elementary lineage can be expanded to show the columns. When you

+select a column, the lineage of that specific column will be highlighted.

+

+Column-level lineage is useful for answering questions such as:

+

+* Which downstream columns are actually impacted by a data quality issue?

+

+* Can we deprecate or rename a column?

+

+* Will changing this column impact a dashboard?

+

+### Filter and highlight columns path

+

+To help navigate graphs with large amount of columns per table, use the `...` menu to the right of the column:

+

+* **Filter**: Will show a graph of only the selected column and its dependencies.

+

+* **Highlight**: Will highlight only the selected column and its dependencies.

+

+

+

+### Column-level lineage generation

+

+Elementary parses SQL queries to determine the dependencies between columns.

+Note that the lineage is only of the columns that directly contribute data to the column.

+

+For example, for the query:

+

+```sql

+create or replace table db.schema.users as

+select

+ user_name,

+ count(distinct login_time) as total_logins

+from db.schema.login_events

+where user_type != 'test_user'

+```

+

+The direct dependency of `total_logins` is `login_events.login_time`.

+The column `login_events.user_type` filter the data of `total_logins`, but it is an indirect dependency and will not show in lineage.

+

+If you want a different approach in your Elementary Cloud instance - contact us.

\ No newline at end of file

diff --git a/docs/cloud/features/data-lineage/exposures-lineage.mdx b/docs/cloud/features/data-lineage/exposures-lineage.mdx

new file mode 100644

index 000000000..74c887b3a

--- /dev/null

+++ b/docs/cloud/features/data-lineage/exposures-lineage.mdx

@@ -0,0 +1,66 @@

+---

+title: Lineage to Downstream Dashboards and Tools

+sidebarTitle: Lineage to BI

+---

+

+import BiCards from '/snippets/cloud/integrations/cards-groups/bi-cards.mdx';

+

+

+

+Some of your data is used downstream in dashboards, applications, data science pipelines, reverse ETLs, etc.

+These downstream data consumers are called _exposures_.

+

+The Elementary lineage graph presents downstream exposures of two origins:

+

+1. Elementary automated BI integrations

+2. Exposures configured in your dbt project. Read about [how to configure exposures](https://docs.getdbt.com/docs/build/exposures) in code.

+

+ +

+  +

+  +

+ +

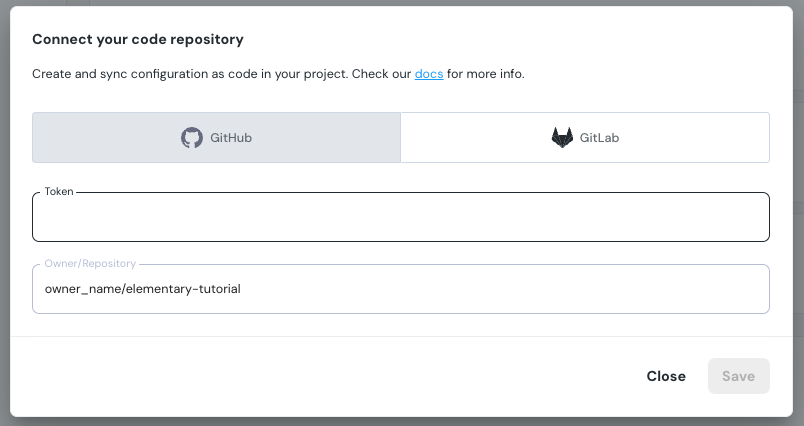

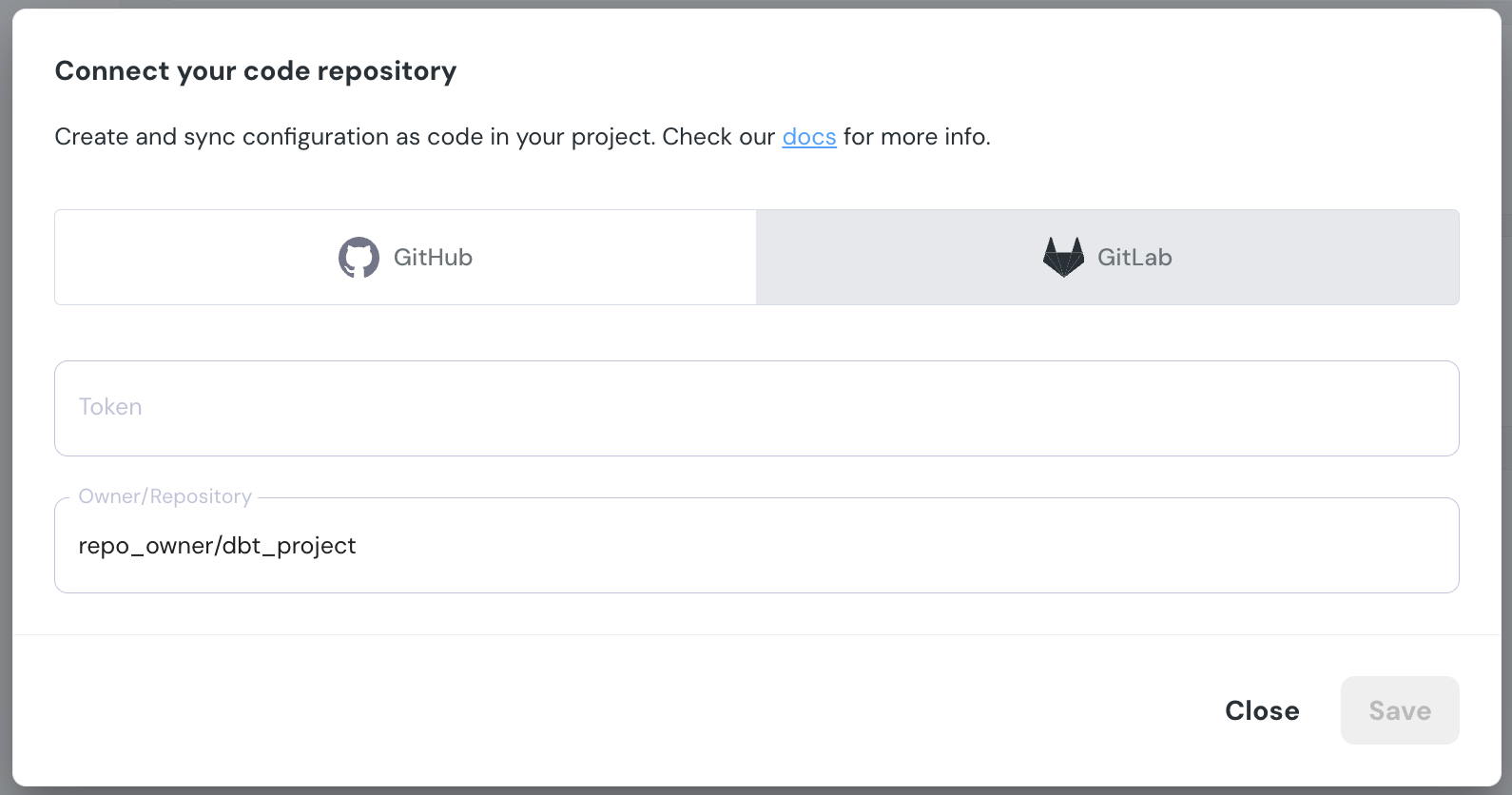

+A pull request will be opened in the code repository, and a link to the PR will be provided in the UI.

+

+

+A pull request will be opened in the code repository, and a link to the PR will be provided in the UI.

+ diff --git a/docs/cloud/features/data-tests/dbt-tests.mdx b/docs/cloud/features/data-tests/dbt-tests.mdx

new file mode 100644

index 000000000..00bcc258c

--- /dev/null

+++ b/docs/cloud/features/data-tests/dbt-tests.mdx

@@ -0,0 +1,36 @@

+---

+title: dbt, Packages and Elementary Tests

+sidebarTitle: dbt tests

+---

+

+import BenefitsDbtTests from '/snippets/cloud/features/data-tests/benefits-dbt-tests.mdx';

+import DbtTestHub from '/snippets/cloud/features/data-tests/dbt-test-hub.mdx';

+import TestsCards from '/snippets/data-tests/tests-cards.mdx';

+

+

+

+

diff --git a/docs/cloud/features/data-tests/dbt-tests.mdx b/docs/cloud/features/data-tests/dbt-tests.mdx

new file mode 100644

index 000000000..00bcc258c

--- /dev/null

+++ b/docs/cloud/features/data-tests/dbt-tests.mdx

@@ -0,0 +1,36 @@

+---

+title: dbt, Packages and Elementary Tests

+sidebarTitle: dbt tests

+---

+

+import BenefitsDbtTests from '/snippets/cloud/features/data-tests/benefits-dbt-tests.mdx';

+import DbtTestHub from '/snippets/cloud/features/data-tests/dbt-test-hub.mdx';

+import TestsCards from '/snippets/data-tests/tests-cards.mdx';

+

+

+

+ +

+  +

+  +

+  +

+  +

+  -

+

-

+ +

+  +

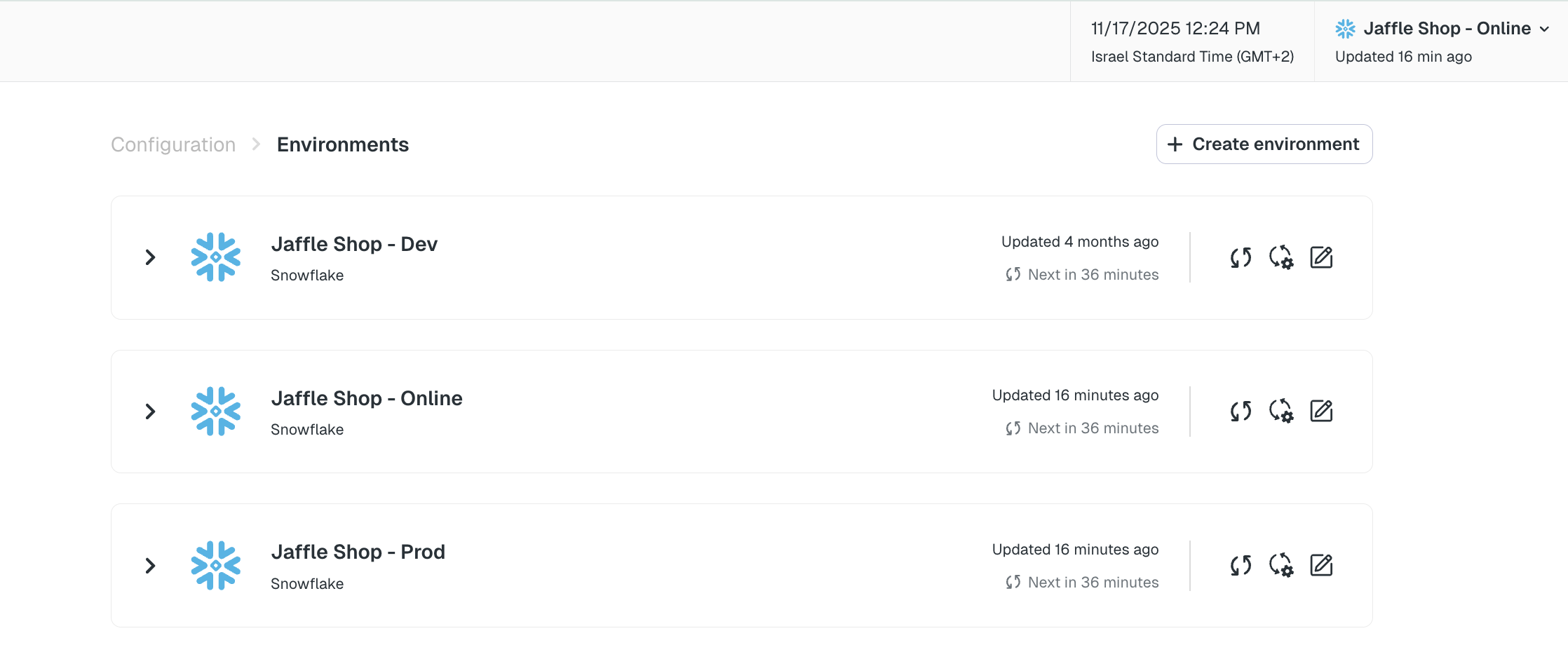

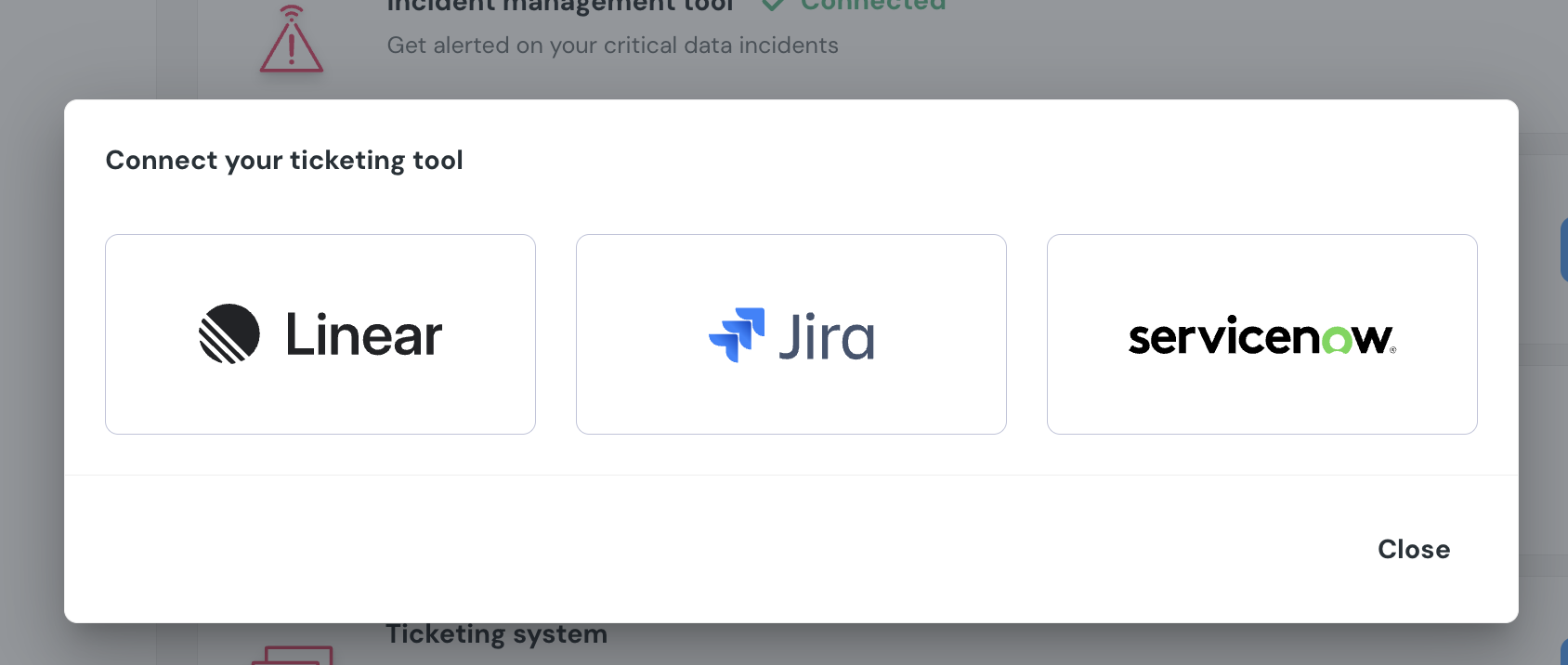

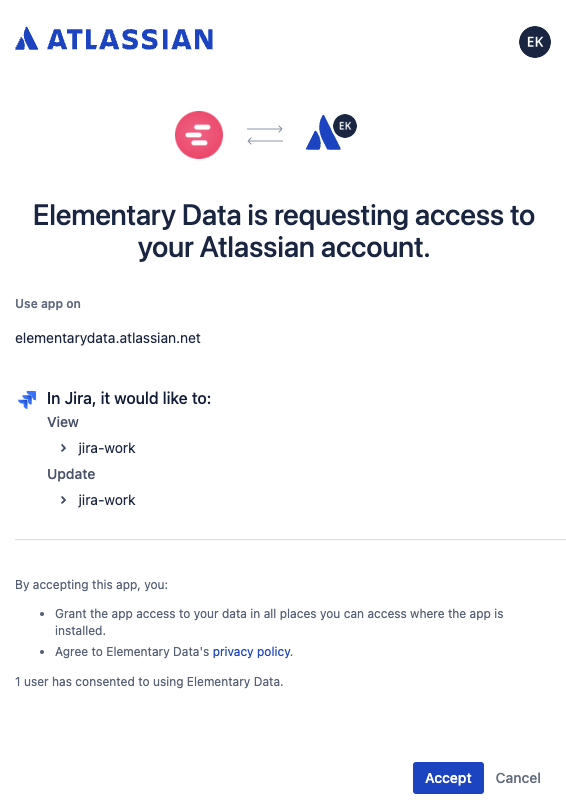

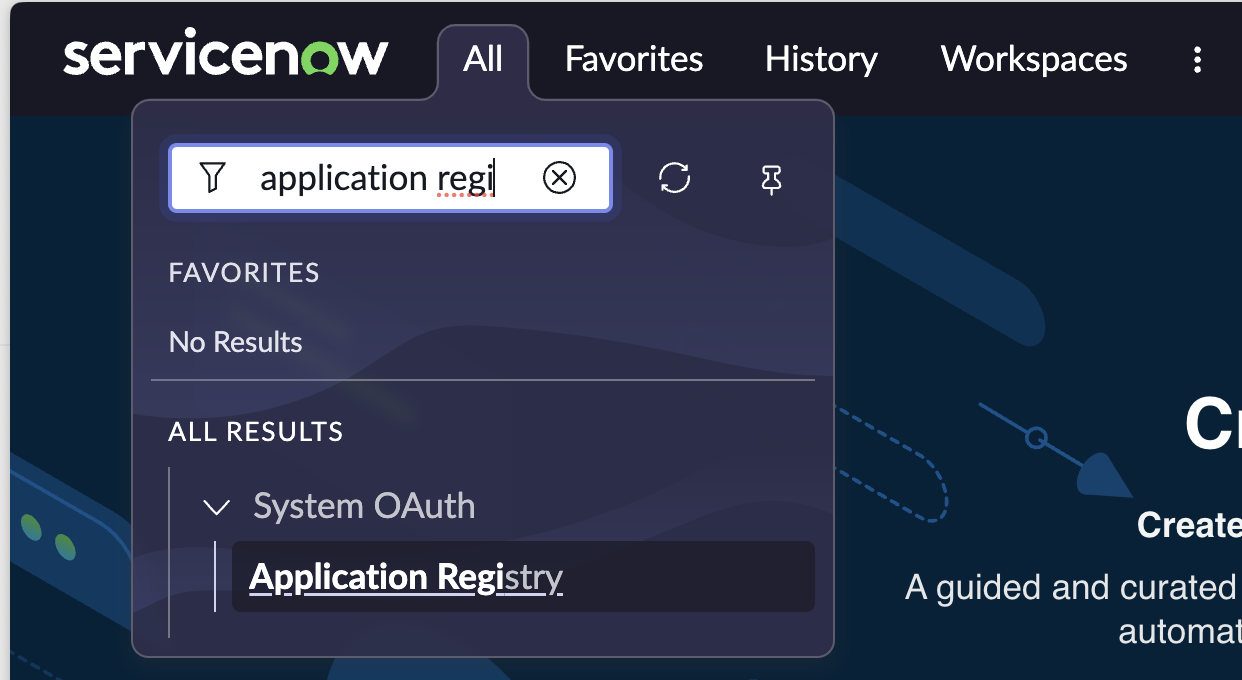

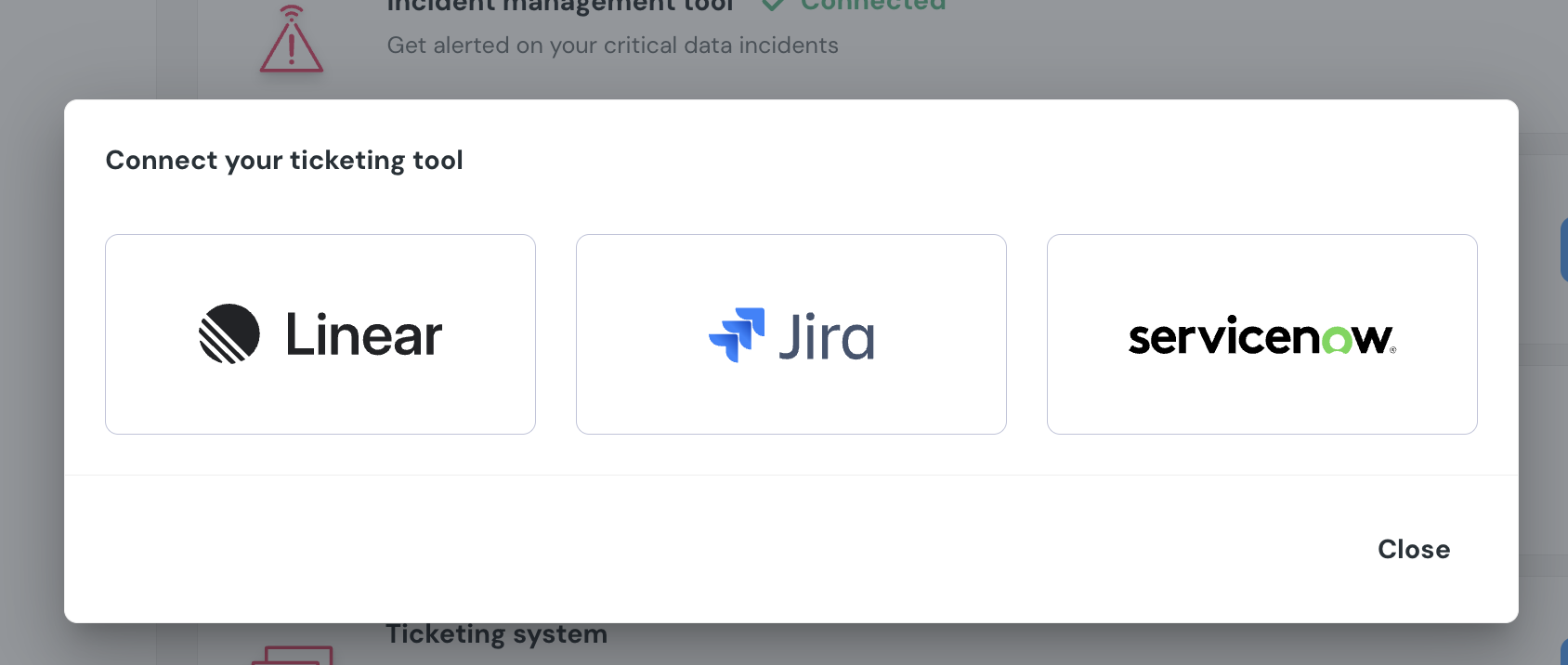

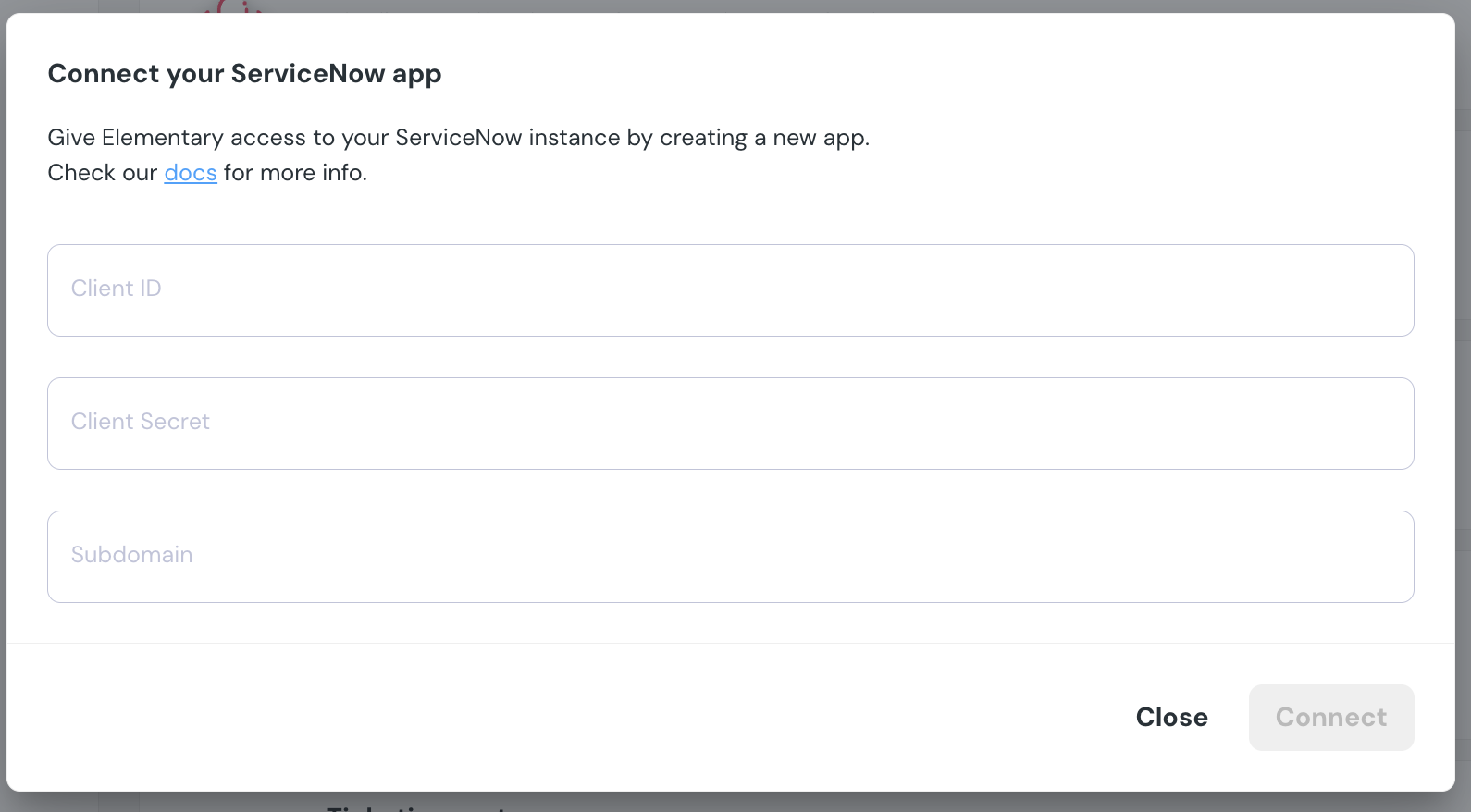

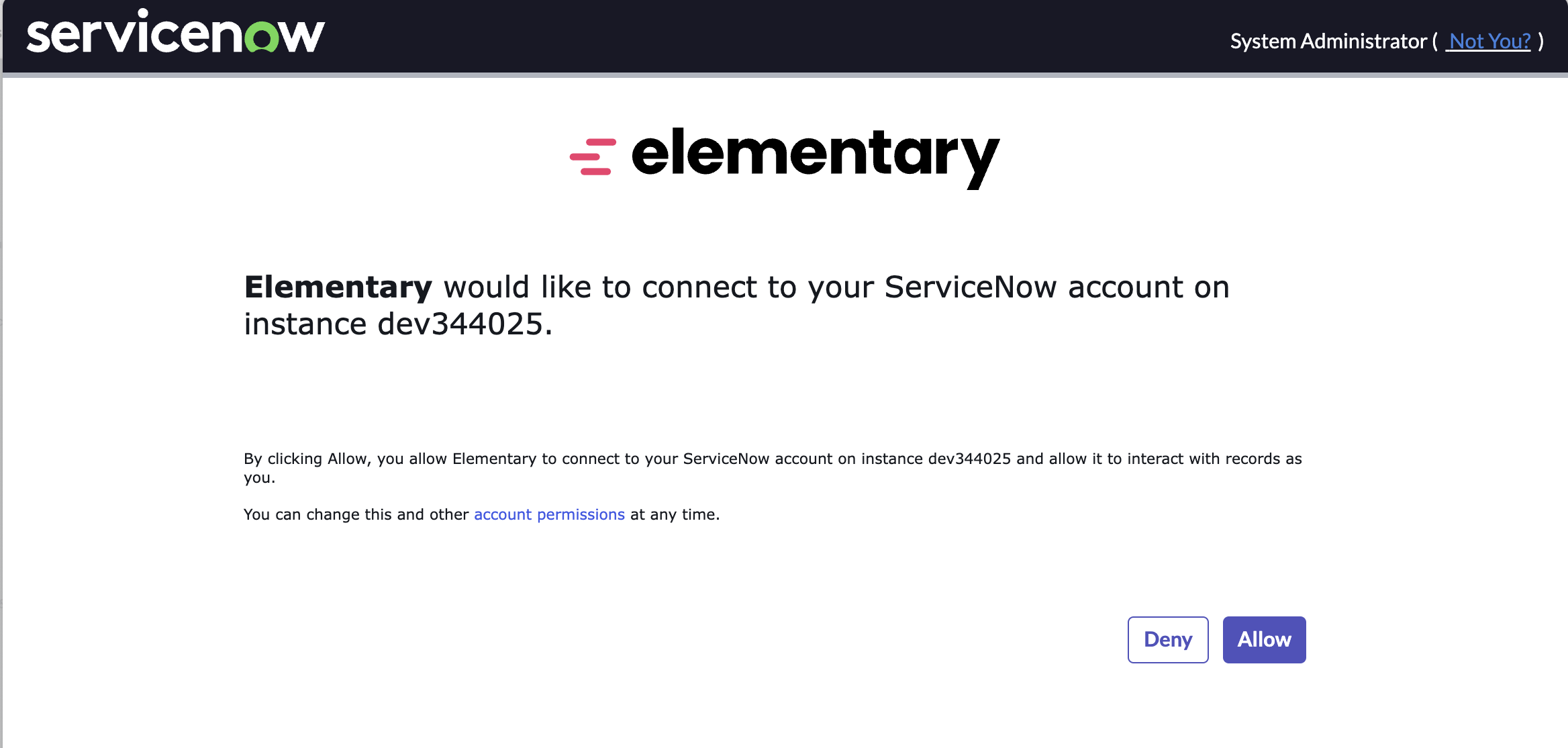

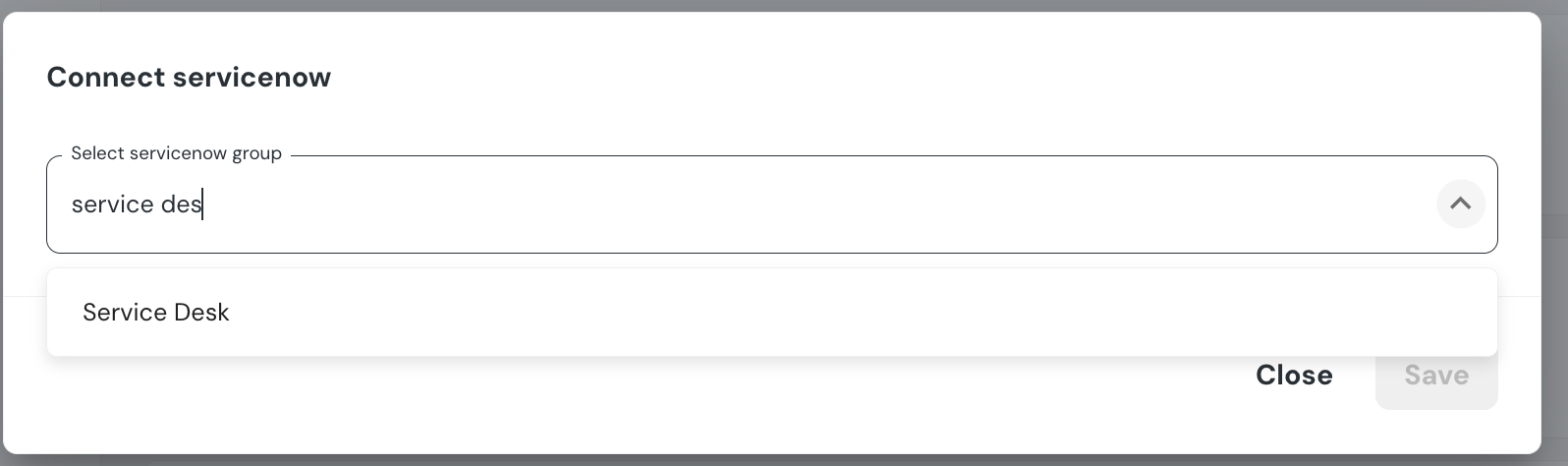

+## How to connect Jira

+1. Go to the `Environments` page on the sidebar.

+2. Select an environment and click connect on the `Connect ticketing system` card, and select `Jira`.

+3. Authorize the Elementary app for your workspace. **This step may require a workspace admin approval.**

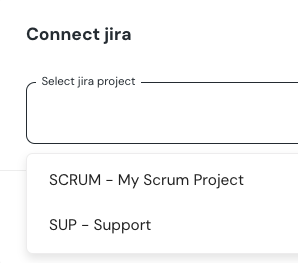

+4. Select a default project for tickets

+5. Click `Save` to finish the setup

+

+

+

+## How to connect Jira

+1. Go to the `Environments` page on the sidebar.

+2. Select an environment and click connect on the `Connect ticketing system` card, and select `Jira`.

+3. Authorize the Elementary app for your workspace. **This step may require a workspace admin approval.**

+4. Select a default project for tickets

+5. Click `Save` to finish the setup

+

+ +

+ +

+ +

+ +

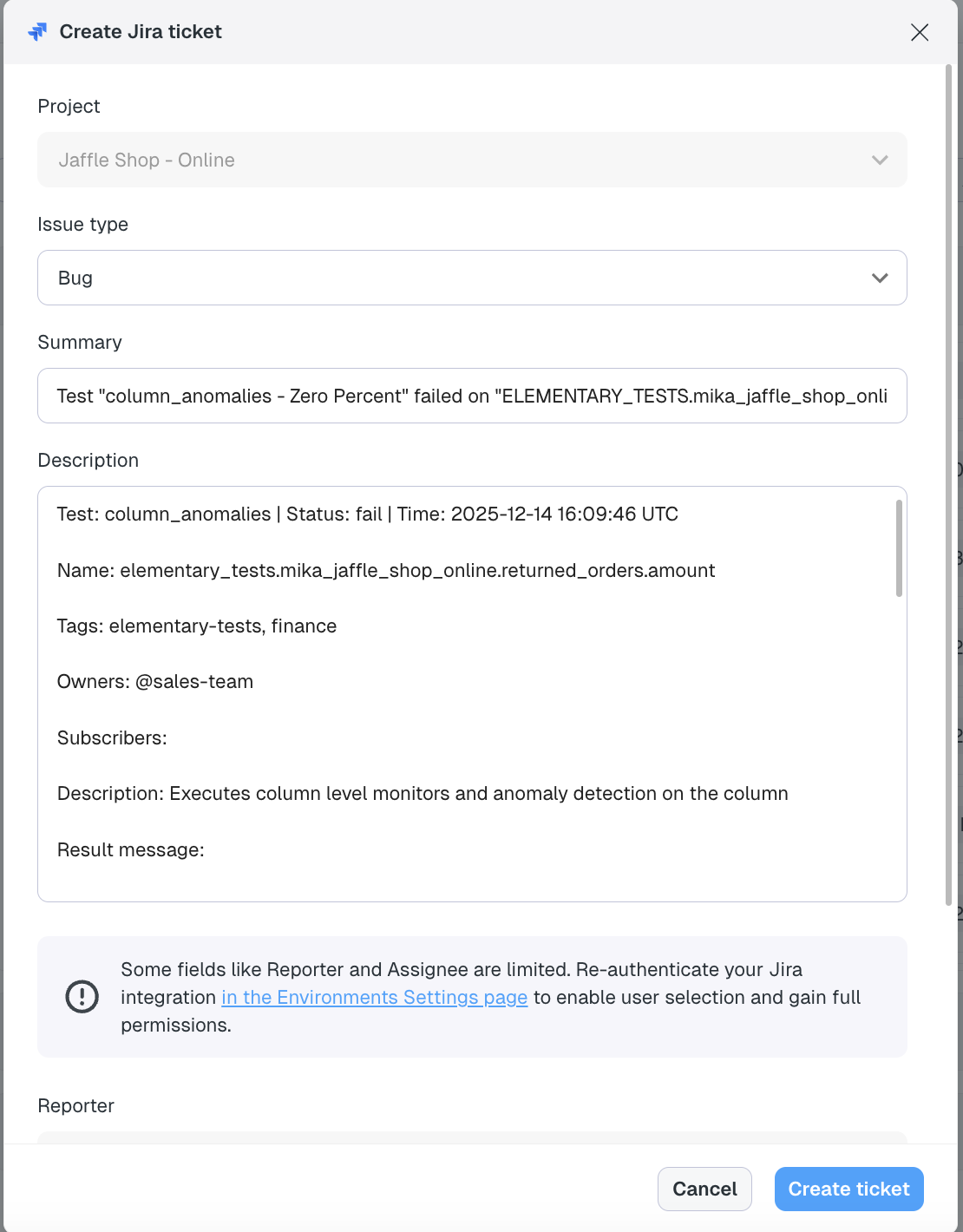

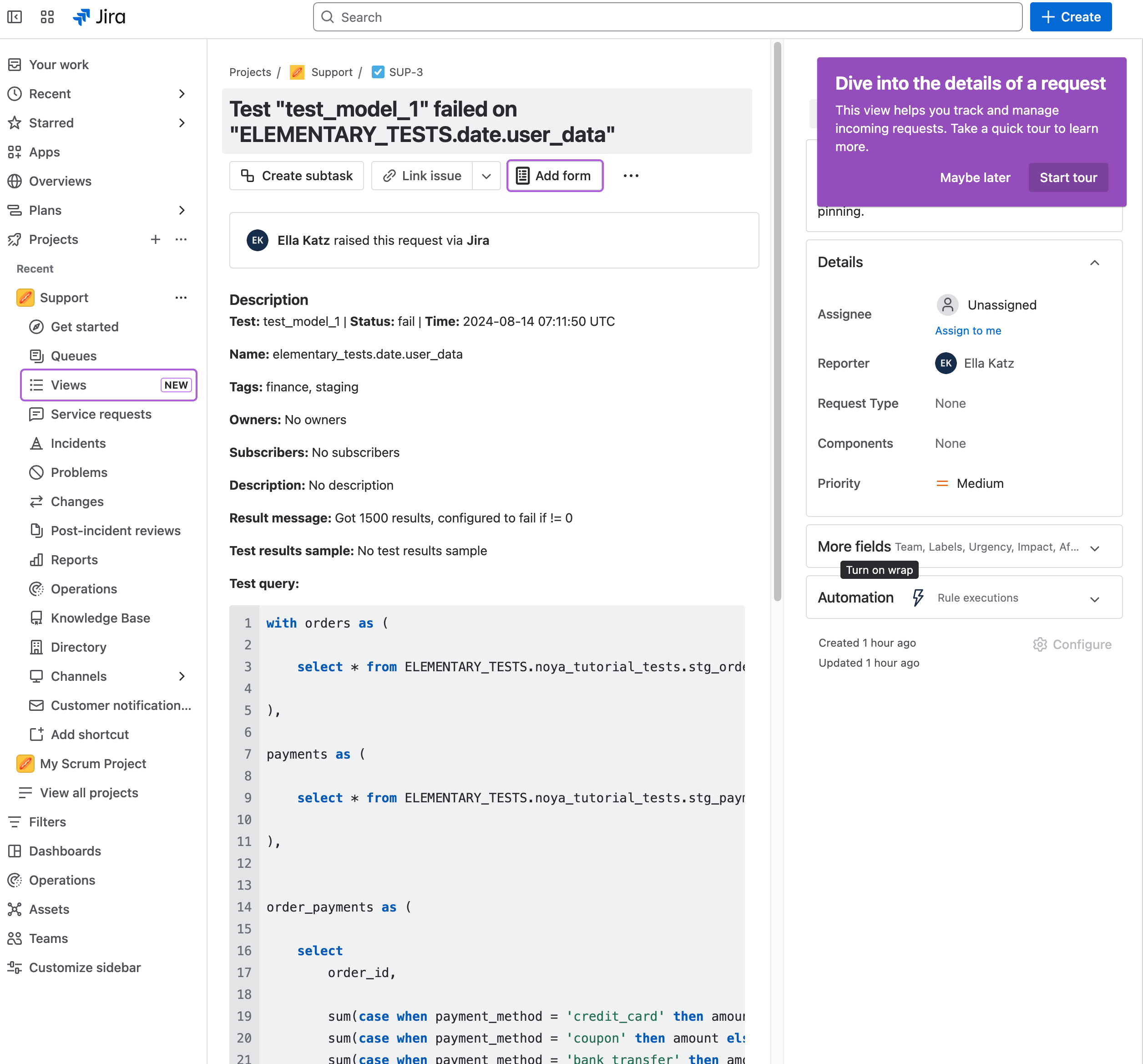

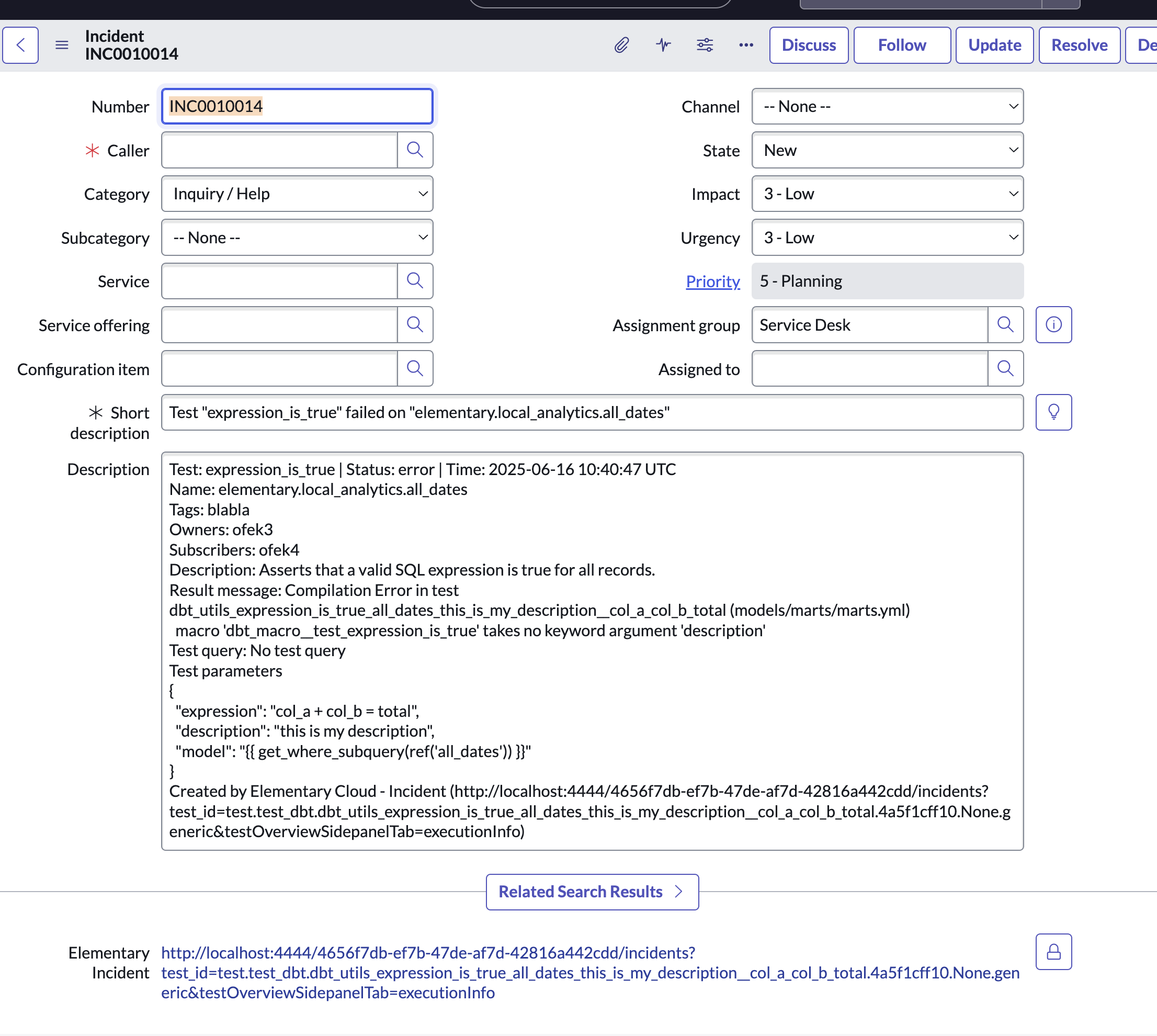

+## Creating Jira issues from incidents

+When an incident is created, you can generate a Jira issue directly from the incident page by clicking **Create Jira Ticket**. This opens a pre-filled form where you can review, update, or add additional fields before submitting. Once created, the issue is automatically added to the Jira team you selected when connecting Jira.

+

+After the ticket is created, a link to the Jira issue appears on the incident page. The Jira ticket itself also includes a link back to the incident in Elementary for easy cross-referencing.

+

+

+

+## Creating Jira issues from incidents

+When an incident is created, you can generate a Jira issue directly from the incident page by clicking **Create Jira Ticket**. This opens a pre-filled form where you can review, update, or add additional fields before submitting. Once created, the issue is automatically added to the Jira team you selected when connecting Jira.

+

+After the ticket is created, a link to the Jira issue appears on the incident page. The Jira ticket itself also includes a link back to the incident in Elementary for easy cross-referencing.

+

+ +

+

+

+ +

diff --git a/docs/cloud/integrations/alerts/linear.mdx b/docs/cloud/integrations/alerts/linear.mdx

index 5837a8c8a..35902c444 100644

--- a/docs/cloud/integrations/alerts/linear.mdx

+++ b/docs/cloud/integrations/alerts/linear.mdx

@@ -2,14 +2,30 @@

title: "Linear"

---

-

+

diff --git a/docs/cloud/integrations/alerts/linear.mdx b/docs/cloud/integrations/alerts/linear.mdx

index 5837a8c8a..35902c444 100644

--- a/docs/cloud/integrations/alerts/linear.mdx

+++ b/docs/cloud/integrations/alerts/linear.mdx

@@ -2,14 +2,30 @@

title: "Linear"

---

- +

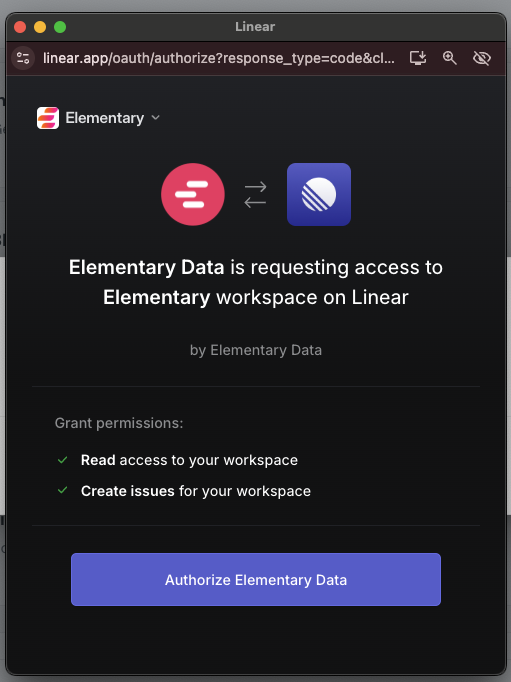

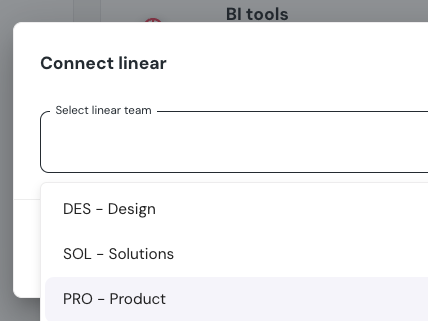

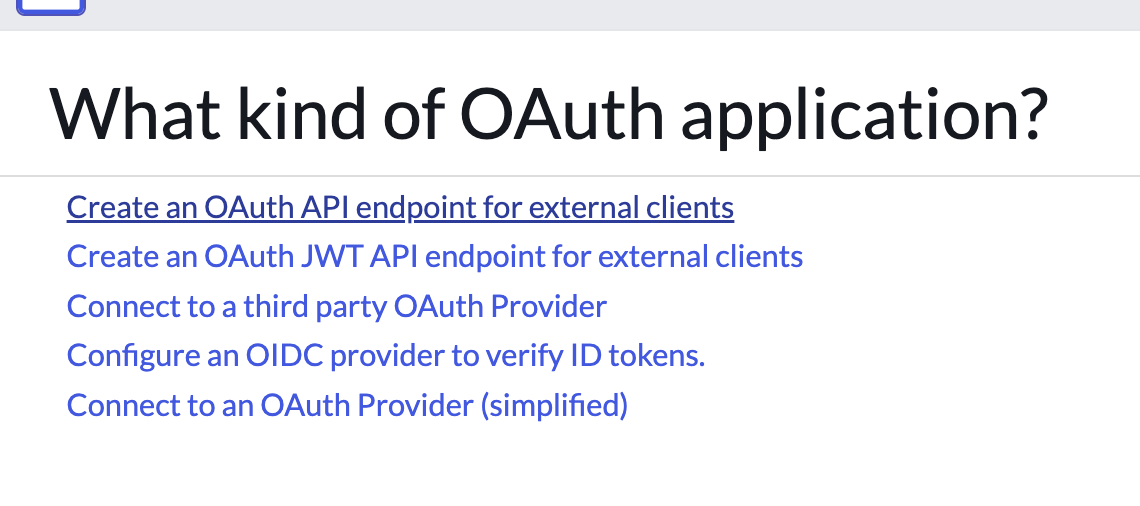

+## How to connect Linear

+1. Go to the `Environments` page on the sidebar.

+2. Select an environment and click connect on the `Connect ticketing system` card, and select `Linear`.

+3. Authorize the Elementary app for your workspace. **This step may require a workspace admin approval.**

+4. Select a default team for new tickets

+5. Click `Save` to finish the setup

+

+

+

+## How to connect Linear

+1. Go to the `Environments` page on the sidebar.

+2. Select an environment and click connect on the `Connect ticketing system` card, and select `Linear`.

+3. Authorize the Elementary app for your workspace. **This step may require a workspace admin approval.**

+4. Select a default team for new tickets

+5. Click `Save` to finish the setup

+

+ +

+ +

+ +

+ +

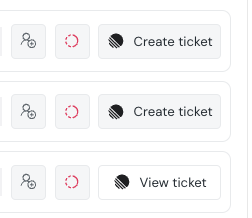

+## Creating Linear ticket from incidents

+When an incident is created, you can create a Linear ticket from the incident page by simply clicking on "Create Linear Ticket".

+The ticket will automatically be created in Linear, in the team you chose upon connecting Linear.

+

+After the ticket is created you can see the Linear ticket link in the incident page.

+The ticket will also contain a link to the incident in Elementary.

+

+

+## Creating Linear ticket from incidents

+When an incident is created, you can create a Linear ticket from the incident page by simply clicking on "Create Linear Ticket".

+The ticket will automatically be created in Linear, in the team you chose upon connecting Linear.

+

+After the ticket is created you can see the Linear ticket link in the incident page.

+The ticket will also contain a link to the incident in Elementary.

+ +

+

+

+ diff --git a/docs/cloud/integrations/alerts/ms-teams.mdx b/docs/cloud/integrations/alerts/ms-teams.mdx

index d7ea82311..387567b89 100644

--- a/docs/cloud/integrations/alerts/ms-teams.mdx

+++ b/docs/cloud/integrations/alerts/ms-teams.mdx

@@ -5,12 +5,12 @@ title: "Microsoft Teams"

Elementary's Microsoft Teams integration enables sending alerts when data issues happen.

The alerts are sent using Adaptive Cards format, which provides rich formatting and interactive capabilities.

-The alerts include rich context, and you can create [alert rules](/features/alerts-and-incidents/alert-rules) to distribute alerts to different channels and destinations.

+The alerts include rich context, and you can create [alert rules](cloud/features/alerts-and-incidents/alert-rules) to distribute alerts to different channels and destinations.

diff --git a/docs/cloud/integrations/alerts/ms-teams.mdx b/docs/cloud/integrations/alerts/ms-teams.mdx

index d7ea82311..387567b89 100644

--- a/docs/cloud/integrations/alerts/ms-teams.mdx

+++ b/docs/cloud/integrations/alerts/ms-teams.mdx

@@ -5,12 +5,12 @@ title: "Microsoft Teams"

Elementary's Microsoft Teams integration enables sending alerts when data issues happen.

The alerts are sent using Adaptive Cards format, which provides rich formatting and interactive capabilities.

-The alerts include rich context, and you can create [alert rules](/features/alerts-and-incidents/alert-rules) to distribute alerts to different channels and destinations.

+The alerts include rich context, and you can create [alert rules](cloud/features/alerts-and-incidents/alert-rules) to distribute alerts to different channels and destinations.

+

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

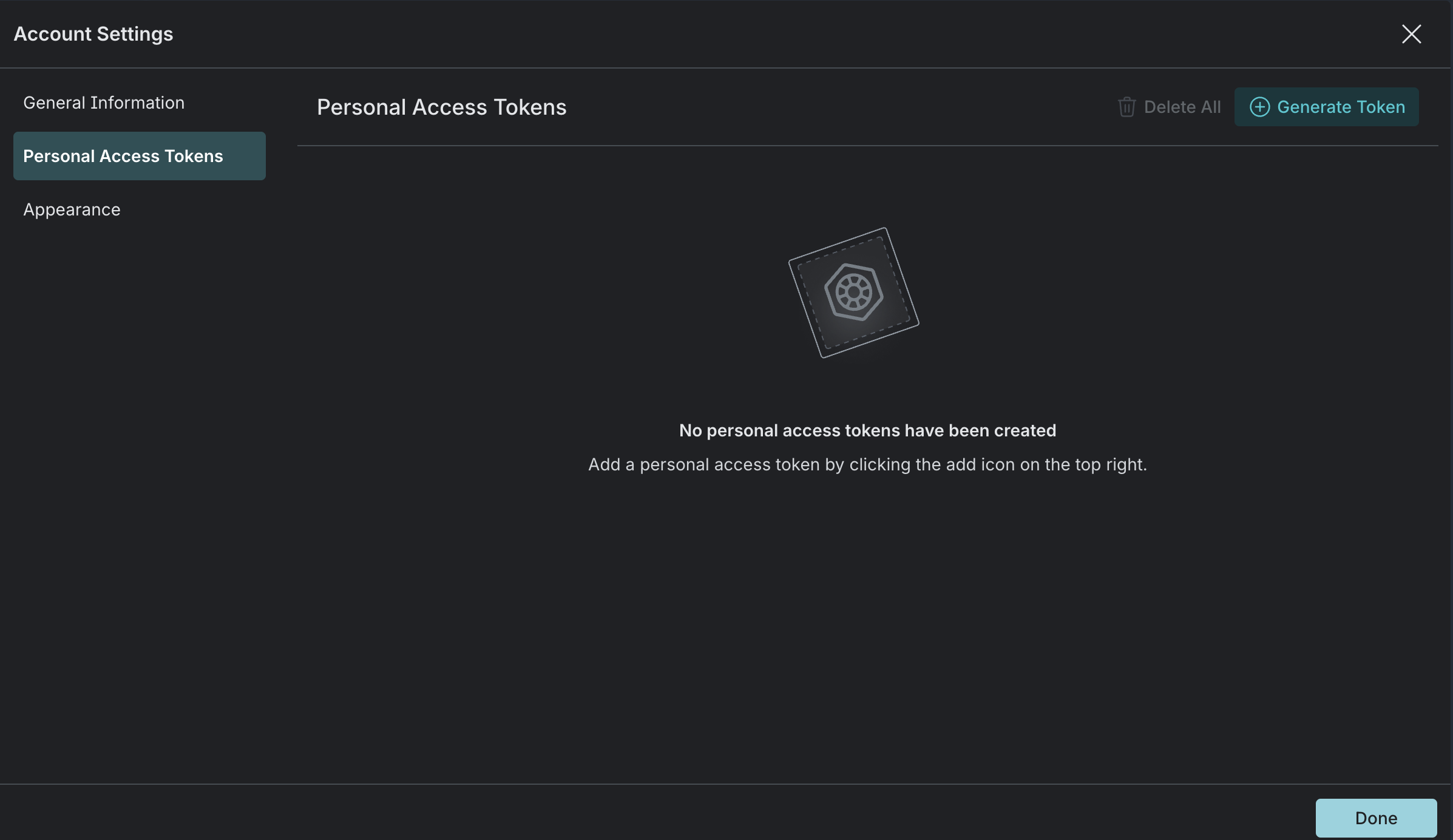

+ +To create one, please follow the [official Hex documentation](https://learn.hex.tech/docs/api/api-overview#workspace-tokens). +Make sure you create a Hex Workspace token with read access for all categories and "read project queried tables". + +### Connecting Hex to Elementary + +Navigate to the **Account settings > Environments** and choose the environment to which you would like to connect Hex. +Choose the Hex connection and provide the following details to validate and complete the integration. + +- **Base URL**: Your Hex workspace URL. For example: `https://app.hex.tech/my-workspace/` +- **Workspace Token**: The Hex workspace token you've created on the previous step. + + +### Limitations + +- **Hex assets will only be visible if their project has run within the last 14 days.** Elementary tracks Hex query history to determine asset visibility, and only projects with recent execution activity will appear in the lineage graph. diff --git a/docs/cloud/integrations/bi/lightdash.mdx b/docs/cloud/integrations/bi/lightdash.mdx new file mode 100644 index 000000000..e902055d4 --- /dev/null +++ b/docs/cloud/integrations/bi/lightdash.mdx @@ -0,0 +1,31 @@ +--- +title: "Lightdash" +--- + +After you connect Lightdash, Elementary will automatically and continuously extend the column-level-lineage to the dashboard and chart level. +This will provide you end-to-end data lineage to understand your downstream dependencies, called exposures. + +### Create a Personal Access Token + +Elementary needs a Personal Access Token (PAT) to access the Lightdash API on your behalf. + +1. In Lightdash, go to **Settings > Personal Access Tokens**. +2. Click **Generate Token**. +3. Give the token a descriptive name (e.g. "Elementary integration"). +4. Copy and save the generated token securely — you will need it when connecting Lightdash to Elementary. + +For more details, refer to the [official Lightdash documentation](https://docs.lightdash.com/references/workspace/personal-tokens). + +

+To create those, Please follow the [official Sigma documentation](https://help.sigmacomputing.com/reference/generate-client-credentials#generate-api-client-credentials). +Make sure you enable 'REST API' privileges for that client. + +### Connecting Sigma to Elementary + +Navigate to the **Account settings > Environments** and choose the environment to which you would like to connect Sigma. +Choose the Sigma connection and provide the following details to validate and complete the integration. + +- **Cloud Provider:** To determine your Sigma cloud provider, Navigate to **Account -> General Settings** under Sigma's **Administration** menu and look for **'Cloud: ...'**.

Should be one of the following: + - `AWS US` + - `AWS Canada` + - `AWS Europe` + - `AWS UK` + - `Azure US` + - `GCP` +- **Client ID**: The Sigma client ID you've created on the previous step. +- **Client Secret:** The new Sigma client secret you've created on the previous step. + + +### Limitations + +`Datasets` or `Data Models` are currently excluded from computed lineage graph - which will point from DWH directly to your Workbook Elements.

\ No newline at end of file diff --git a/docs/cloud/integrations/bi/thoughtspot.mdx b/docs/cloud/integrations/bi/thoughtspot.mdx index 52b31d8e9..160c8bf81 100644 --- a/docs/cloud/integrations/bi/thoughtspot.mdx +++ b/docs/cloud/integrations/bi/thoughtspot.mdx @@ -1,15 +1,32 @@ --- -title: "ThoughtSpot" +title: "Thoughtspot" --- -

+To enable Trusted Authentication on a user, please follow the [official Thoughtspot documentation](https://developers.thoughtspot.com/docs/trusted-auth-secret-key).

+Make sure you copy the generated token (`Secret Key`) as you will need it to connect Thoughtspot to Elementary. + +### User Privileges + +For an easy integration, it's recommended for the connected user to be an administrator (`ADMINISTRATION` privilege), this will ensure Elementary can access all of your Liveboards and Answers.

+It is also possible though to integrate with a regular user, just make sure it can download data (has `DATADOWNLOADING` privilege) for all the relvant ThoughtSpot entities you want Elementary to discover and show lineage for. + +### Connecting Thoughtspot to Elementary + +Navigate to the **Account settings > Environments** and choose the environment to which you would like to connect ThoughtSpot. +Choose the Thoughtspot connection and provide the following details to validate and complete the integration. + +- **User Name:** The username of the user you want to use to connect to Thoughtspot. +- **Secret Key:** The token generated for the user you want to use to connect to Thoughtspot (from the previous step). +- **Base URL:** The URL of your Thoughtspot instance. This would be `'https://

\ No newline at end of file diff --git a/docs/cloud/integrations/code-repo/azure-devops.mdx b/docs/cloud/integrations/code-repo/azure-devops.mdx new file mode 100644 index 000000000..0784986ac --- /dev/null +++ b/docs/cloud/integrations/code-repo/azure-devops.mdx @@ -0,0 +1,43 @@ +--- +title: "Azure DevOps Integration" +sidebarTitle: "Azure DevOps" +--- + +Elementary can integrate with Azure DevOps to connect to the code repository where your **dbt project code** is managed, and it opens pull requests with configuration changes. + +## Connecting Through the Azure DevOps App + +1. Navigate to **Environments** in Elementary Cloud, open your environment, then go to **Code repository**. +2. Click on **Connect** and select **Azure DevOps**. +3. Enter your Azure DevOps organization URL \ +(e.g., `https://dev.azure.com/your-organization`). +4. Click **Save**. +5. Connect through OAuth to authenticate between Azure DevOps and Elementary Cloud. During this process, a temporary token is issued, which can be used to make API calls. Along with the temporary token, a refresh token is also provided. The refresh token is used when Azure DevOps indicates that the temporary token has expired. For Microsoft services, OAuth is managed by Microsoft Entra ID (formerly known as Active Directory). + +## Updating tokens when they expire + +When your Azure DevOps token expires, update the connection with new tokens: + +1. Go to **Environments**, open your environment, and go to **Code repository**. +2. Click **Edit** on the code repository connection. +3. Paste the new generated tokens (from Azure DevOps) and save. + +--- + +## Required Permissions + +Elementary requires the following permissions in your Azure DevOps **dbt repository**: + +- **Read and write** access to the repository +- Access to **file contents** +- Permission to **open and read pull requests** + +--- + +## Troubleshooting + +If you encounter issues with the Azure DevOps integration, ensure the following: + +1. Your **organization URL** is correct. +2. You have **sufficient permissions** in Azure DevOps. +3. Elementary is properly **authorized** in your Azure DevOps organization. diff --git a/docs/cloud/integrations/code-repo/bitbucket.mdx b/docs/cloud/integrations/code-repo/bitbucket.mdx new file mode 100644 index 000000000..d44d7d0fa --- /dev/null +++ b/docs/cloud/integrations/code-repo/bitbucket.mdx @@ -0,0 +1,26 @@ +--- +title: "Bitbucket" +--- + +import RepoConnectionSettings from '/snippets/cloud/integrations/repo-connection-settings.mdx'; + + + +Elementary connects to the code repository where your dbt project code is managed, and opens PRs with configuration changes. + +## Recommended: Connect using Elementary Bitbucket App + +Navigate to the **Account settings > Environments** and choose the environment to which you would like to connect the dbt project code repository. + +Simply Click the blue button that says "Connect with Elementary Bitbucket App" and follow the instructions. +In the menu that opens up later on, select the repository where your dbt project is stored, and if needed the branch and path to the dbt project. + +

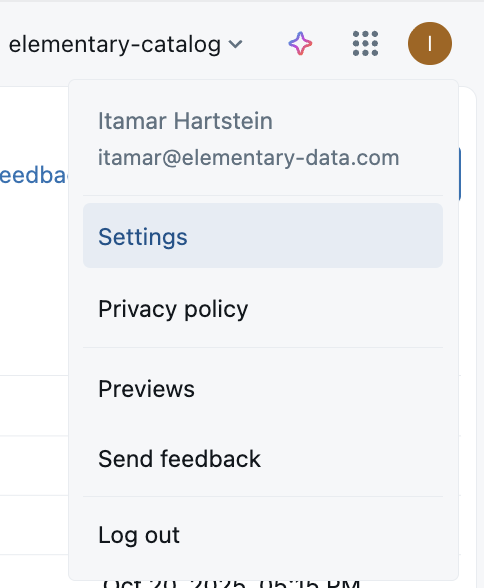

-

+

-

+ -

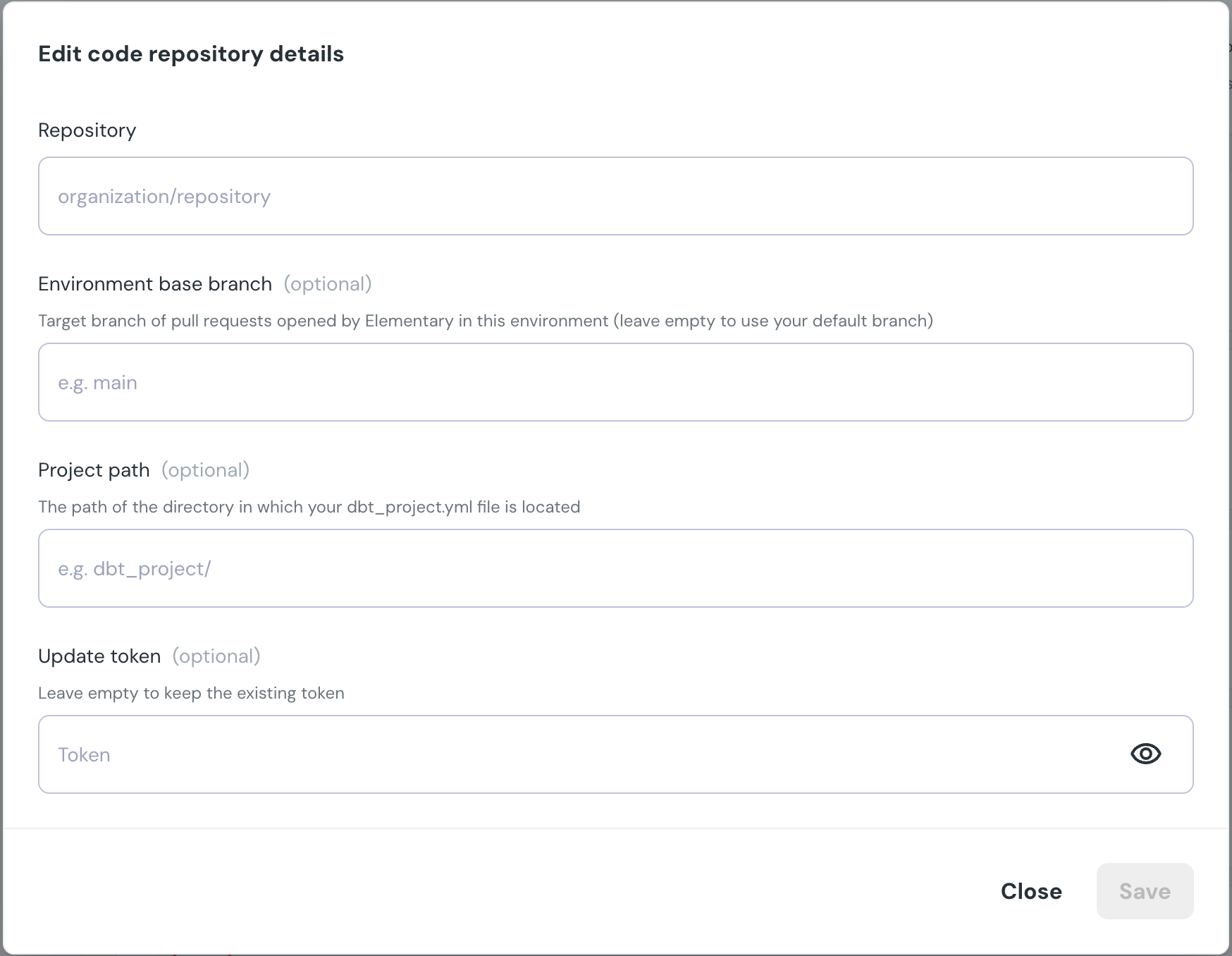

+## Repository connection settings

+

+

-

+## Repository connection settings

+

+ +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

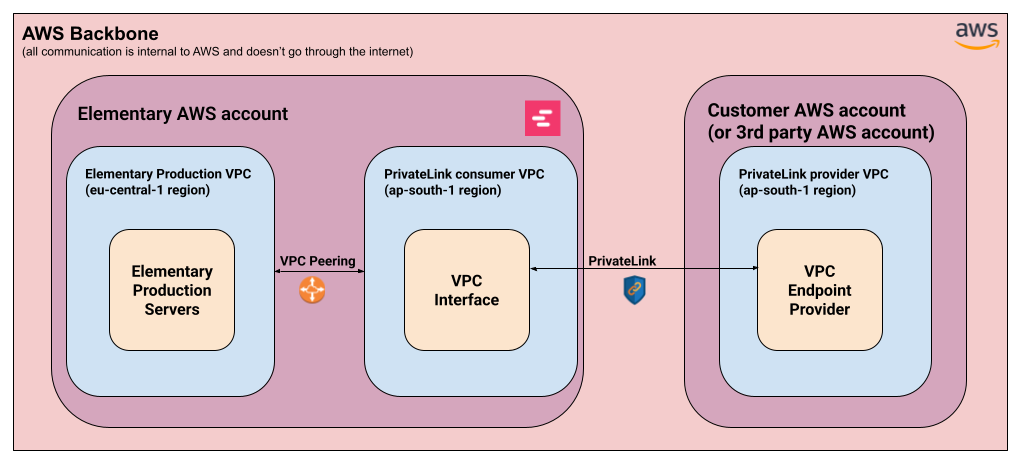

+Elementary’s PrivateLink setup consists generally from two parts:

+

+1. **AWS PrivateLink connection** -

+ 1. Provider side (Customer / 3rd party) - **A VPC endpoint service** is set up at the customer’s AWS account (or a 3rd party AWS account in the case of Snowflake). This provides access to a particular service in that account.

+ 2. Consumer side (Elementary) - Elementary sets up a dedicated VPC interface that will connect to the integrated service, in the same AWS region as the service.

+ This is done through a dedicated regional VPC created for this purpose.

+2. **AWS VPC Peering:**

+ 1. Elementary’s production servers are located in the **eu-central-1** (Frankfurt) region. For us to be able to access the service exposed through PrivateLink, we connect our main production VPC with the regional VPC mentioned above.

+

+## Supported integrations

+

+### Snowflake

+

+Snowflake has support for connecting to AWS-hosted Snowflake accounts via PrivateLink. This setup is entirely managed by Snowflake, so Elementary connects with an endpoint service hosted on Snowflake’s AWS account for this purpose.

+

+In order to set up a PrivateLink connection with Snowflake, please follow the steps below:

+

+1. **Open a support case to Snowflake Support**

+ 1. Ask to authorize Elementary’s AWS account for PrivateLink access.

+ 2. Provide Elementary’s account ID in the request - `743289191656`

+2. **Obtain the PrivateLink configuration**

+ 1. Once Snowflake’s support team approves the request, obtain the PrivateLink configuration by invoking the following commands (admin access is required):

+

+ ```sql

+ USE ROLE ACCOUNTADMIN;

+ SELECT SYSTEM$GET_PRIVATELINK_CONFIG();

+ ```

+

+3. **Provide Elementary with the configuration obtained in the previous step.**

+ 1. Elementary will then setup the required infrastructure to connect to Snowflake via PrivateLink.

+4. **Add a Snowflake environment in Elementary**

+ 1. Follow the instructions [here](/cloud/integrations/dwh/snowflake) to set up a Snowflake environment in Elementary.

+ 1. When supplying the account, use `

+

+Elementary’s PrivateLink setup consists generally from two parts:

+

+1. **AWS PrivateLink connection** -

+ 1. Provider side (Customer / 3rd party) - **A VPC endpoint service** is set up at the customer’s AWS account (or a 3rd party AWS account in the case of Snowflake). This provides access to a particular service in that account.

+ 2. Consumer side (Elementary) - Elementary sets up a dedicated VPC interface that will connect to the integrated service, in the same AWS region as the service.

+ This is done through a dedicated regional VPC created for this purpose.

+2. **AWS VPC Peering:**

+ 1. Elementary’s production servers are located in the **eu-central-1** (Frankfurt) region. For us to be able to access the service exposed through PrivateLink, we connect our main production VPC with the regional VPC mentioned above.

+

+## Supported integrations

+

+### Snowflake

+

+Snowflake has support for connecting to AWS-hosted Snowflake accounts via PrivateLink. This setup is entirely managed by Snowflake, so Elementary connects with an endpoint service hosted on Snowflake’s AWS account for this purpose.

+

+In order to set up a PrivateLink connection with Snowflake, please follow the steps below:

+

+1. **Open a support case to Snowflake Support**

+ 1. Ask to authorize Elementary’s AWS account for PrivateLink access.

+ 2. Provide Elementary’s account ID in the request - `743289191656`

+2. **Obtain the PrivateLink configuration**

+ 1. Once Snowflake’s support team approves the request, obtain the PrivateLink configuration by invoking the following commands (admin access is required):

+

+ ```sql

+ USE ROLE ACCOUNTADMIN;

+ SELECT SYSTEM$GET_PRIVATELINK_CONFIG();

+ ```

+

+3. **Provide Elementary with the configuration obtained in the previous step.**

+ 1. Elementary will then setup the required infrastructure to connect to Snowflake via PrivateLink.

+4. **Add a Snowflake environment in Elementary**

+ 1. Follow the instructions [here](/cloud/integrations/dwh/snowflake) to set up a Snowflake environment in Elementary.

+ 1. When supplying the account, use ` +

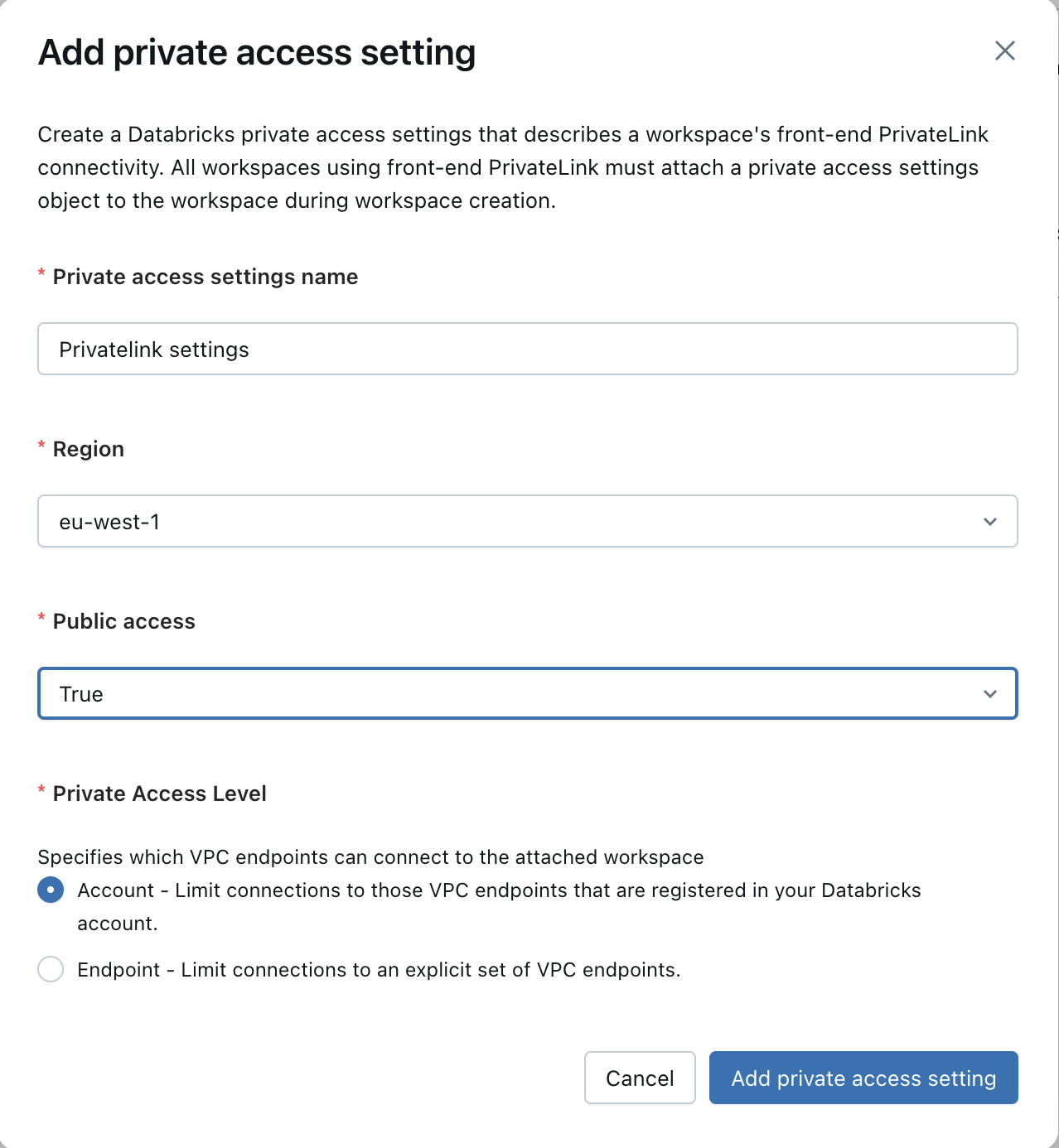

+3. **Configure a private access setting**

+

+ Go to Security -> Private Access Settings.

+

+ * If you've set up private link with your Databricks instance before, you should already have a private access setting configured.

+In that case, please ensure that the endpoint allows access to the VPC endpoint created in step (2).

+

+ * If this is the first time you are setting PrivateLink for your databricks workspace:

+ * Click on "Add private access config".

+ * Please fill in the following details:

+ * A name for your setting: e.g. "Privatelink settings"

+ * Your AWS region

+ * Whether or not to allow public access - only set this as False if all your systems and users access your Databricks workspace through privatelink.

+ * Private access level - either leave as "Account", or allow-list specific VPCs including the Elementary VPC created in the previous step.

+

+

+

+3. **Configure a private access setting**

+

+ Go to Security -> Private Access Settings.

+

+ * If you've set up private link with your Databricks instance before, you should already have a private access setting configured.

+In that case, please ensure that the endpoint allows access to the VPC endpoint created in step (2).

+

+ * If this is the first time you are setting PrivateLink for your databricks workspace:

+ * Click on "Add private access config".

+ * Please fill in the following details:

+ * A name for your setting: e.g. "Privatelink settings"

+ * Your AWS region

+ * Whether or not to allow public access - only set this as False if all your systems and users access your Databricks workspace through privatelink.

+ * Private access level - either leave as "Account", or allow-list specific VPCs including the Elementary VPC created in the previous step.

+

+  +

+4. **Add the private access setting to your Databricks workspace**

+

+ __Note__: If you have already set up Privatelink with Databricks in the past, you can skip this step.

+

+ Under the Databricks account management portal, go to Workspaces, click on your workspace and then on "Update Workspace".

+Then go to "Advanced Configurations", and under "Private Link", please attach the setting created in the previous step.

+

+5. **Add a Databricks environment in Elementary**

+

+ After all the previous steps are completed, please reach out to the Elementary team to verify that your Databricks cluster is accessible via PrivateLink.

+

+ Once verified, please add a Databricks environment to Elementary by following [this guide](/cloud/integrations/dwh/databricks).

+

+ Under the *Add the Elementary IP to allowlist* section, please add the following private subnets *instead* of the IP mentioned there:

+ * 10.0.1.x

+ * 10.0.2.x

+ * 10.0.3.x

+

+

+### Github Enterprise Server

+

+Github Enterprise Server can be connected to Elementary Cloud via AWS PrivateLink. This setup requires creating a VPC endpoint service in your AWS account that exposes your GitHub Enterprise Server instance.

+

+**Prerequisites:**

+- Your Github Enterprise Server instance must be accessible from within your AWS VPC

+- You must have administrative access to your AWS account

+- Ensure you are working in the correct AWS region where your Github Enterprise Server is deployed

+

+In order to set up a PrivateLink connection with Github Enterprise Server, please follow the steps below:

+

+1. **Create a VPC Endpoint Service**

+ - Follow the detailed instructions in the [Creating a VPC Endpoint Service](#creating-a-vpc-endpoint-service) section below to set up the endpoint service for your GitHub Enterprise Server instance.

+

+2. **Add a Github integration in Elementary** - Once the VPC endpoint service setup is completed, please proceed to adding a [GitHub integration](https://docs.elementary-data.com/cloud/integrations/code-repo/github). Note:

+ * OAuth is not currently supported for Github Enterprise Server, so you should generate a fine-grained token.

+ * You should use the same Github hostname as you would internally (Elementary will resolve that host to the privatelink endpoint)

+

+## Creating a VPC Endpoint Service

+

+

+

+4. **Add the private access setting to your Databricks workspace**

+

+ __Note__: If you have already set up Privatelink with Databricks in the past, you can skip this step.

+

+ Under the Databricks account management portal, go to Workspaces, click on your workspace and then on "Update Workspace".

+Then go to "Advanced Configurations", and under "Private Link", please attach the setting created in the previous step.

+

+5. **Add a Databricks environment in Elementary**

+

+ After all the previous steps are completed, please reach out to the Elementary team to verify that your Databricks cluster is accessible via PrivateLink.

+

+ Once verified, please add a Databricks environment to Elementary by following [this guide](/cloud/integrations/dwh/databricks).

+

+ Under the *Add the Elementary IP to allowlist* section, please add the following private subnets *instead* of the IP mentioned there:

+ * 10.0.1.x

+ * 10.0.2.x

+ * 10.0.3.x

+

+

+### Github Enterprise Server

+

+Github Enterprise Server can be connected to Elementary Cloud via AWS PrivateLink. This setup requires creating a VPC endpoint service in your AWS account that exposes your GitHub Enterprise Server instance.

+

+**Prerequisites:**

+- Your Github Enterprise Server instance must be accessible from within your AWS VPC

+- You must have administrative access to your AWS account

+- Ensure you are working in the correct AWS region where your Github Enterprise Server is deployed

+

+In order to set up a PrivateLink connection with Github Enterprise Server, please follow the steps below:

+

+1. **Create a VPC Endpoint Service**

+ - Follow the detailed instructions in the [Creating a VPC Endpoint Service](#creating-a-vpc-endpoint-service) section below to set up the endpoint service for your GitHub Enterprise Server instance.

+

+2. **Add a Github integration in Elementary** - Once the VPC endpoint service setup is completed, please proceed to adding a [GitHub integration](https://docs.elementary-data.com/cloud/integrations/code-repo/github). Note:

+ * OAuth is not currently supported for Github Enterprise Server, so you should generate a fine-grained token.

+ * You should use the same Github hostname as you would internally (Elementary will resolve that host to the privatelink endpoint)

+

+## Creating a VPC Endpoint Service

+

+ +

+ +

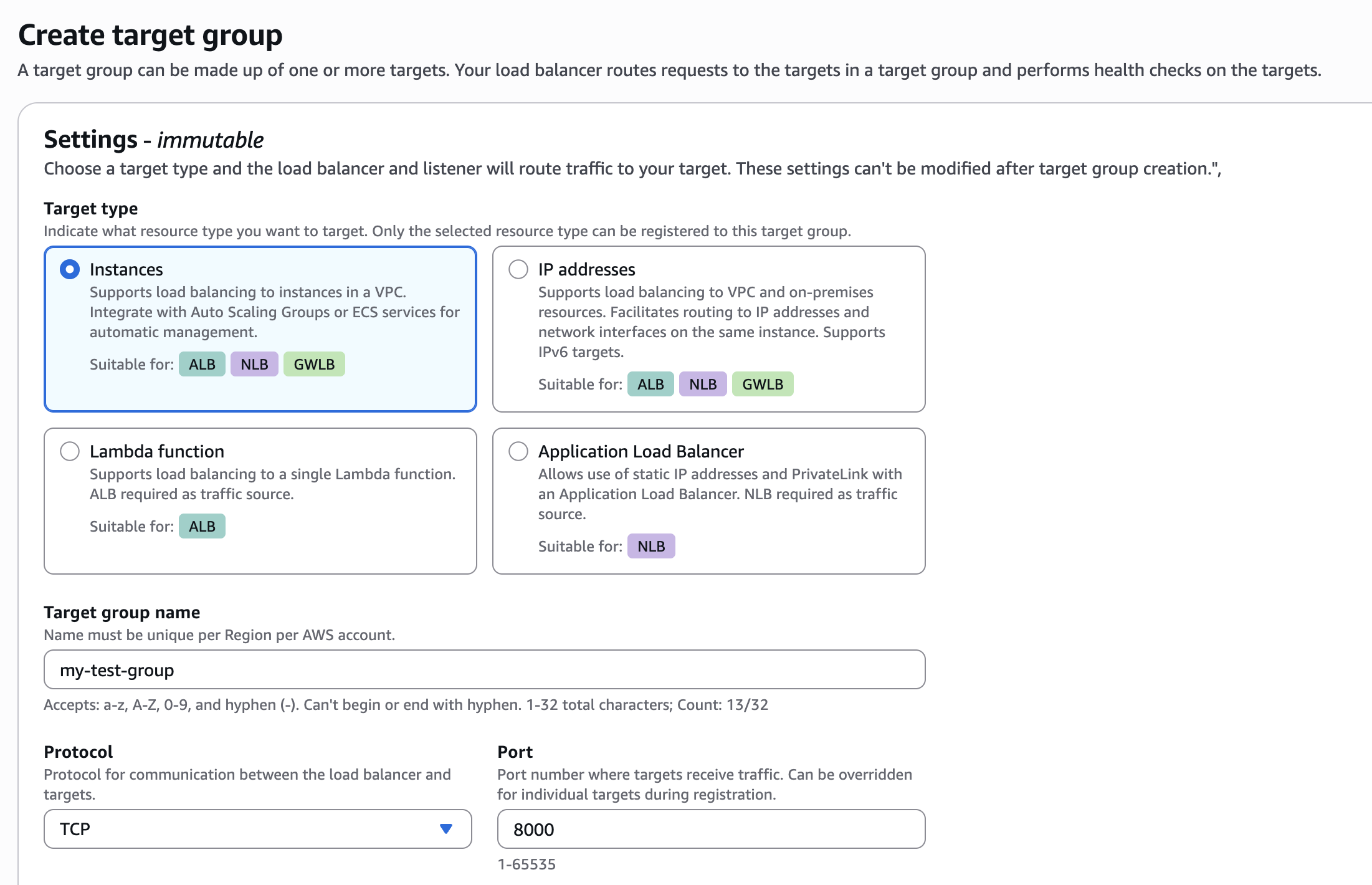

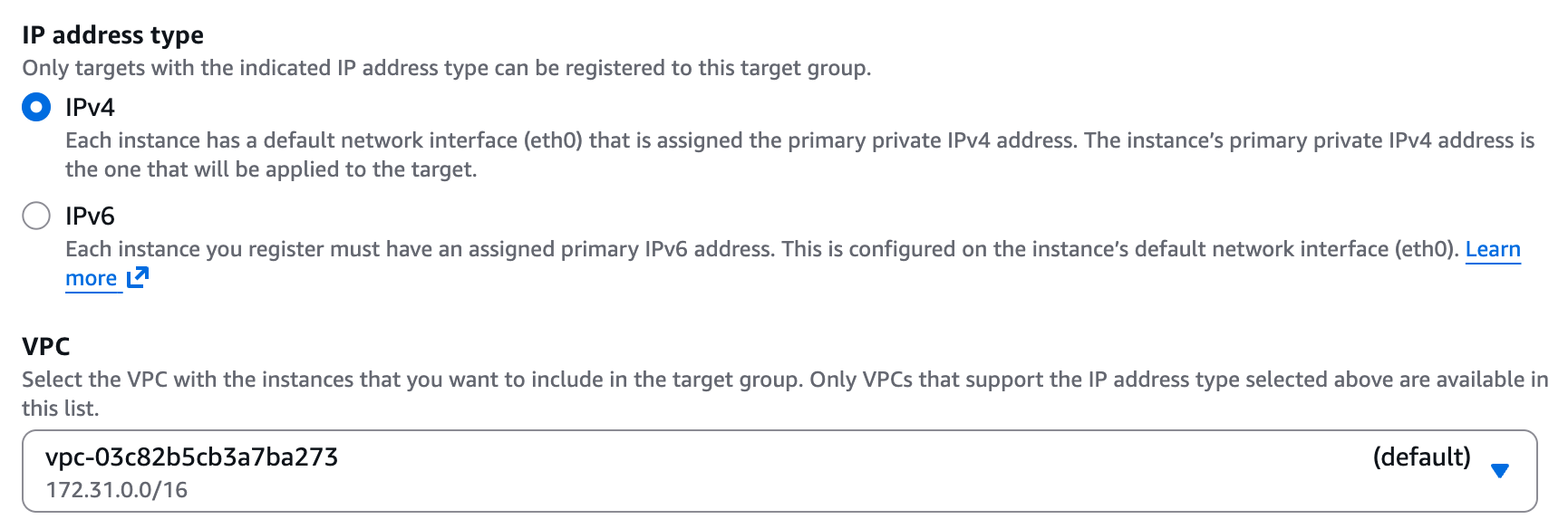

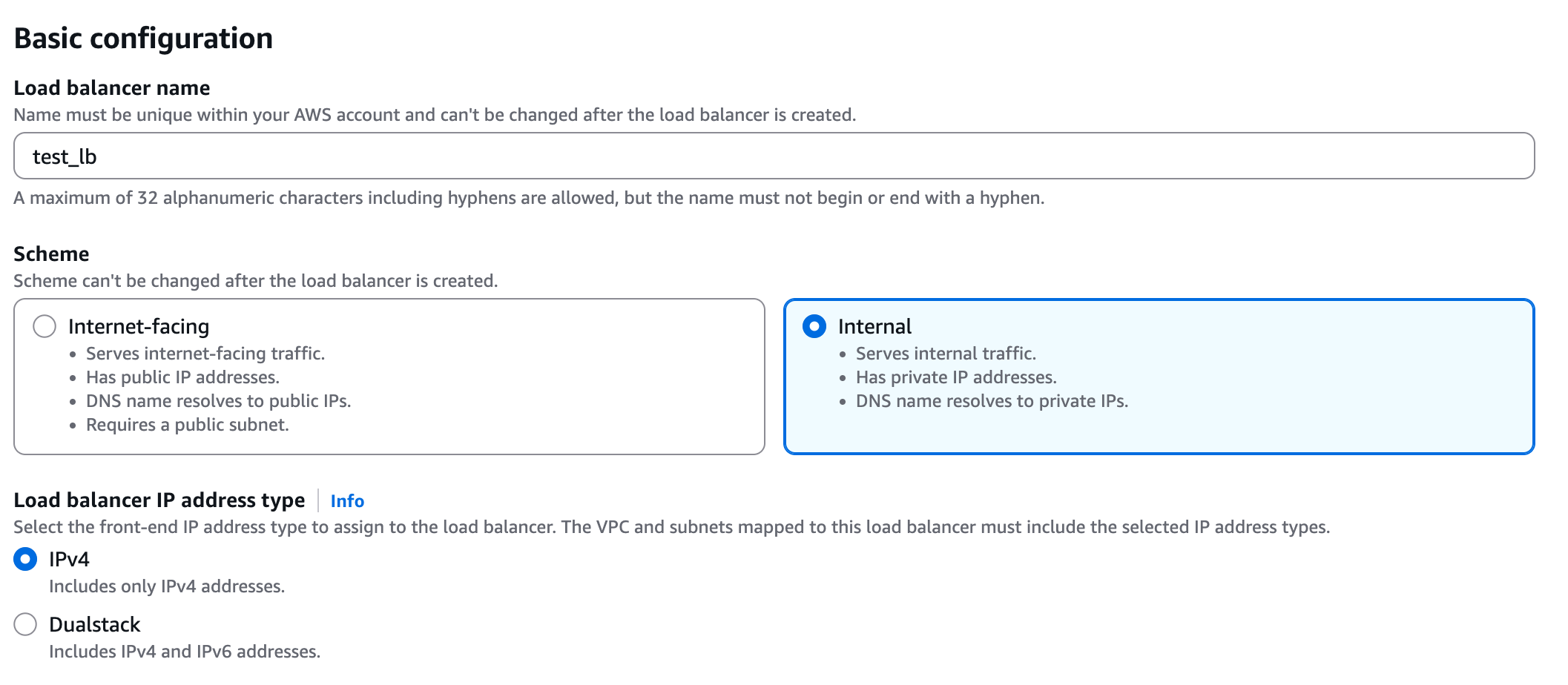

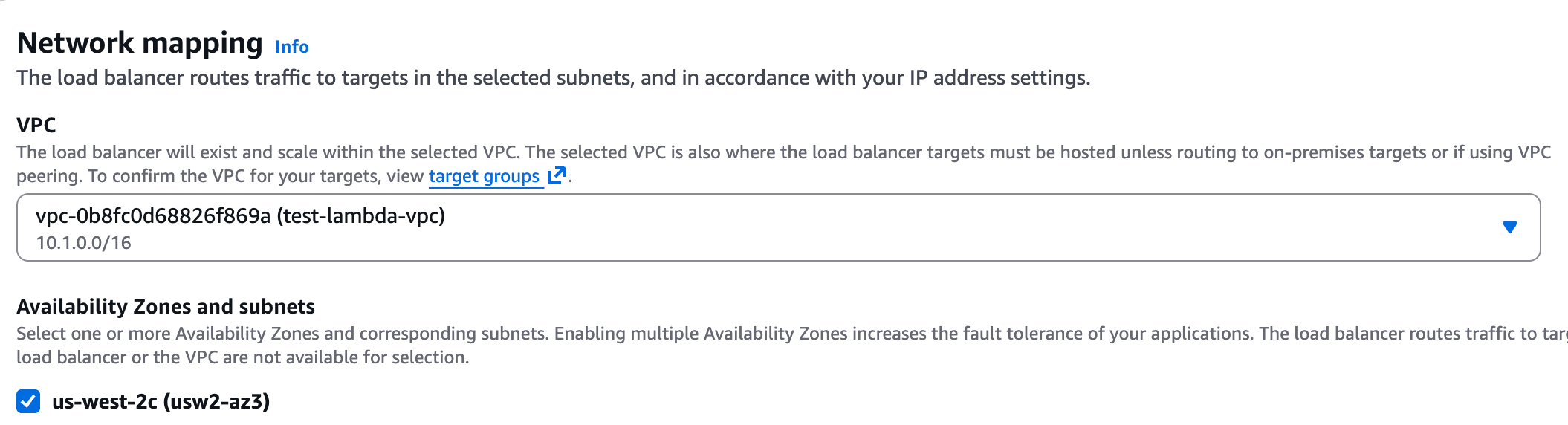

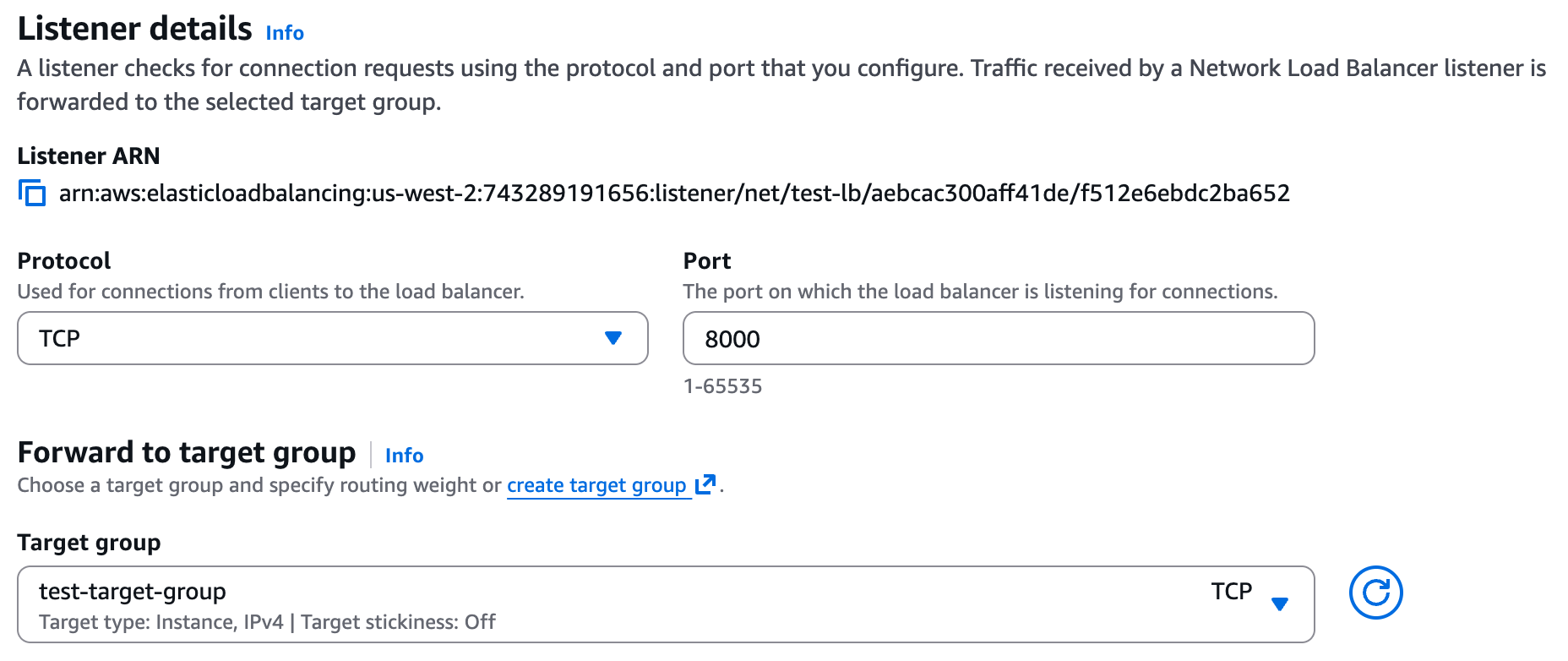

+**2. Create a Network Load Balancer (NLB)**

+

+Under the EC2 page in AWS, navigate to "Load Balancers" and click on "Create load balancer". Choose **Network Load Balancer** and proceed with the creation. Please follow the wizard and fill the following details:

+1. **Load balancer name** - Choose a name for the load balanver (e.g. "github-lb")

+2. **Scheme** - Internal.

+3. **Load balancer IP address type** - IPv4.

+4. **VPC** - Choose same VPC as the one you used for the target group above.

+5. **Mappings** - Select one or more private subnets.

+6. **Security groups** - Select a security group with access to your service. Please grant access to the relevant ports for your service, to the following IP ranges (these are the internal IPs Elementary may connect to your service from):

+ * 10.0.1.x

+ * 10.0.2.x

+ * 10.0.3.x

+7. **Listeners** - Select the target group, protocol, and port from Step 1.

+

+

+

+**2. Create a Network Load Balancer (NLB)**

+

+Under the EC2 page in AWS, navigate to "Load Balancers" and click on "Create load balancer". Choose **Network Load Balancer** and proceed with the creation. Please follow the wizard and fill the following details:

+1. **Load balancer name** - Choose a name for the load balanver (e.g. "github-lb")

+2. **Scheme** - Internal.

+3. **Load balancer IP address type** - IPv4.

+4. **VPC** - Choose same VPC as the one you used for the target group above.

+5. **Mappings** - Select one or more private subnets.

+6. **Security groups** - Select a security group with access to your service. Please grant access to the relevant ports for your service, to the following IP ranges (these are the internal IPs Elementary may connect to your service from):

+ * 10.0.1.x

+ * 10.0.2.x

+ * 10.0.3.x

+7. **Listeners** - Select the target group, protocol, and port from Step 1.

+

+ +

+ +

+ +

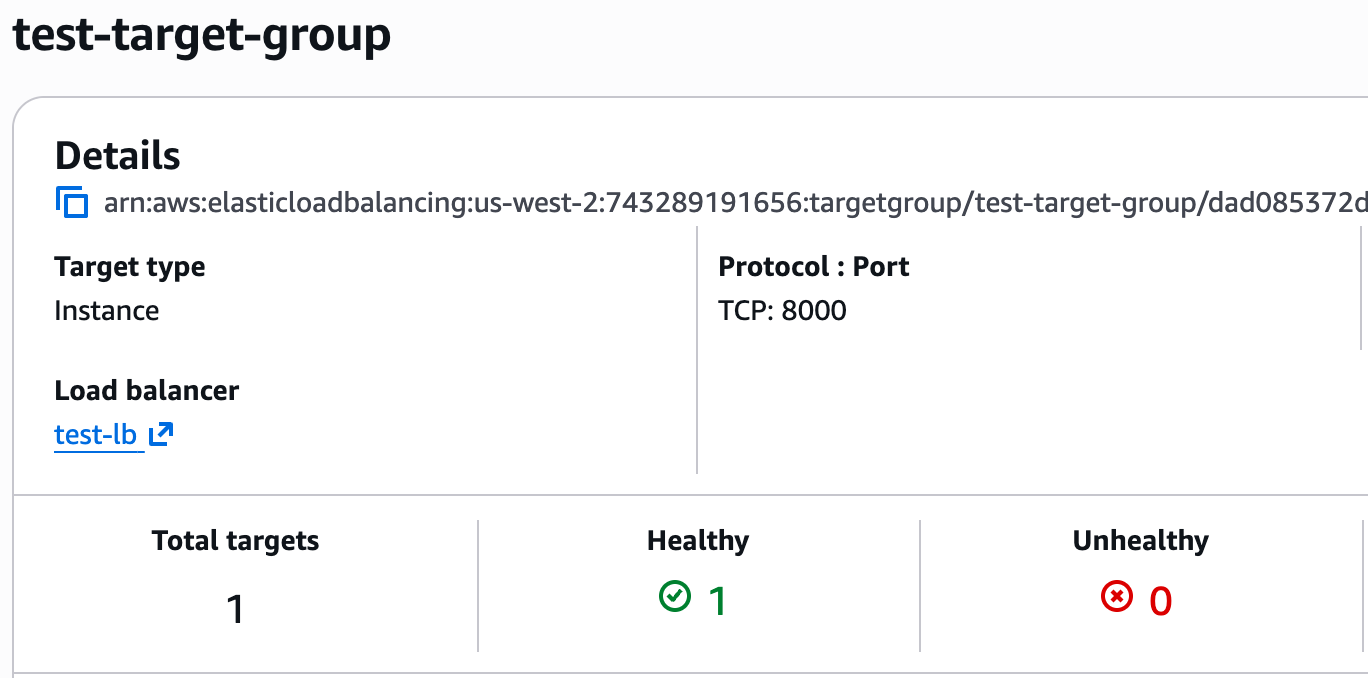

+**3. Verify the Target Group is Healthy**

+

+Once the load balancer from the previous step is ready, please navigate to the target group you created above. It should be listed as "Healthy".

+

+

+

+**3. Verify the Target Group is Healthy**

+

+Once the load balancer from the previous step is ready, please navigate to the target group you created above. It should be listed as "Healthy".

+

+ +

+If it appears as "Unhealthy" for some reason, please ensure the security group provides access to the service, that the health check is configured correctly, and of course that the service itself is available.

+

+**4. Enable Cross-Zone Load Balancing**

+

+If you selected more than one subnet for the load balancer above:

+* Navigate back to the "Load Balancer" screen

+* Choose the load balancer you created.

+* Click on Actions -> Edit load balancer attributes.

+* Enable the setting "Enable cross-zone load balancing".

+

+If you selected more than one subnet (availability zone) when creating the NLB in Step 2, navigate back to the Load Balancer, then select Actions → Edit load balancer attributes. From this page, select "Enable cross-zone load balancing" and save your changes.

+

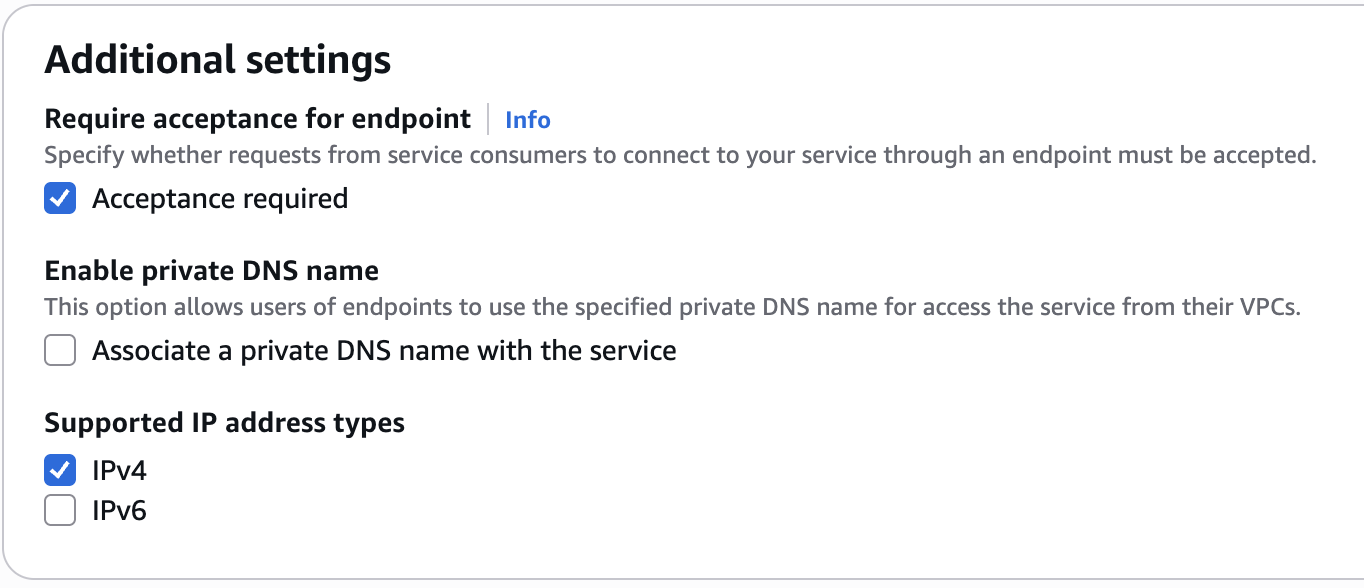

+### Create a VPC Endpoint Service and approve access for Elementary

+

+**1. Create a VPC Endpoint Service**

+

+Navigate to the VPC page in AWS, go to "Endpoint services," and select "Create endpoint service." Please follow the wizard and fill in the following details:

+

+1. **Name** - Choose a name for your VPC endpoint service.

+2. **Load balancer type** - Network.

+3. **Available load balancers** - Select the network load balancer (NLB) created above.

+4. **Require acceptance for endpoint** - Yes (so new connections will require approval, see below)

+5. **Enable private DNS name** - No.

+6. **Supported IP address types** - IPv4.

+

+

+

+If it appears as "Unhealthy" for some reason, please ensure the security group provides access to the service, that the health check is configured correctly, and of course that the service itself is available.

+

+**4. Enable Cross-Zone Load Balancing**

+

+If you selected more than one subnet for the load balancer above:

+* Navigate back to the "Load Balancer" screen

+* Choose the load balancer you created.

+* Click on Actions -> Edit load balancer attributes.

+* Enable the setting "Enable cross-zone load balancing".

+

+If you selected more than one subnet (availability zone) when creating the NLB in Step 2, navigate back to the Load Balancer, then select Actions → Edit load balancer attributes. From this page, select "Enable cross-zone load balancing" and save your changes.

+

+### Create a VPC Endpoint Service and approve access for Elementary

+

+**1. Create a VPC Endpoint Service**

+

+Navigate to the VPC page in AWS, go to "Endpoint services," and select "Create endpoint service." Please follow the wizard and fill in the following details:

+

+1. **Name** - Choose a name for your VPC endpoint service.

+2. **Load balancer type** - Network.

+3. **Available load balancers** - Select the network load balancer (NLB) created above.

+4. **Require acceptance for endpoint** - Yes (so new connections will require approval, see below)

+5. **Enable private DNS name** - No.

+6. **Supported IP address types** - IPv4.

+

+ +

+ +

+Once the service is created, please go to the "Details" tab and save the "Service name" attribute, you will need later to provide it to the Elementary team.

+

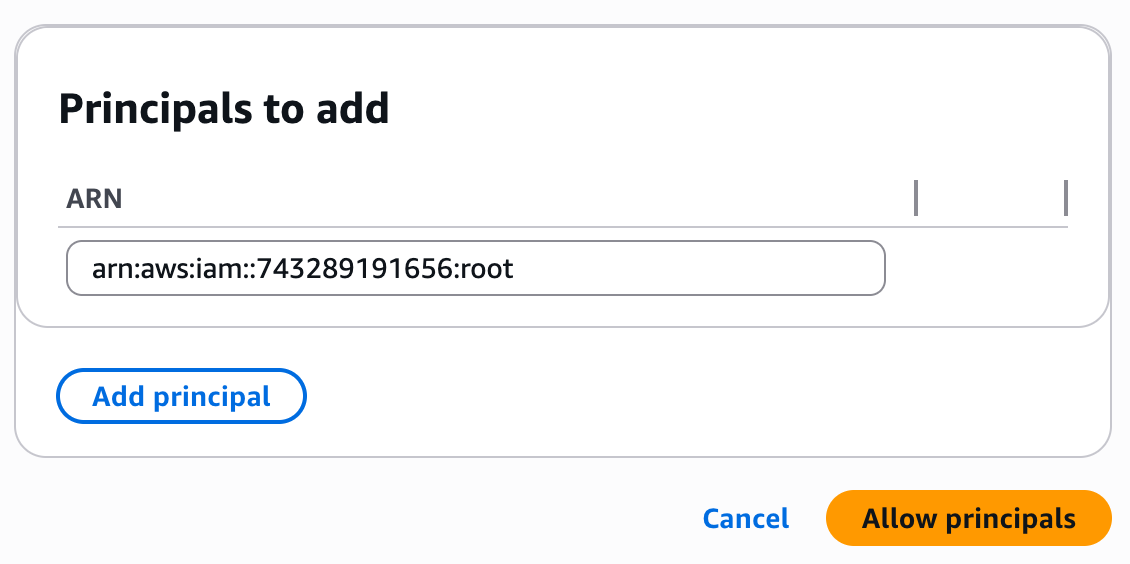

+**2. Allow the Elementary Principal**

+

+Once the VPC endpoint service is successfully created, navigate to the "Allow principals tab" and click on "Allow Principals". Then add the following principal:

+```

+arn:aws:iam::743289191656:root

+```

+

+After the endpoint service finishes creating, navigate to the "Allow principals" section and select "Allow principals." Add Elementary's AWS account ID: `743289191656`.

+

+

+

+Once the service is created, please go to the "Details" tab and save the "Service name" attribute, you will need later to provide it to the Elementary team.

+

+**2. Allow the Elementary Principal**

+

+Once the VPC endpoint service is successfully created, navigate to the "Allow principals tab" and click on "Allow Principals". Then add the following principal:

+```

+arn:aws:iam::743289191656:root

+```

+

+After the endpoint service finishes creating, navigate to the "Allow principals" section and select "Allow principals." Add Elementary's AWS account ID: `743289191656`.

+

+ +

+**3. Contact the Elementary team to configure the PrivateLink connection**

+

+Please provide the Elementary team with the following details:

+1. Your AWS account ID.

+2. Your AWS region.

+3. The VPC endpoint service name (from step 1).

+4. The relevant service / integration (e.g. Github).

+5. The hostname you use internally to connect to your service.

+

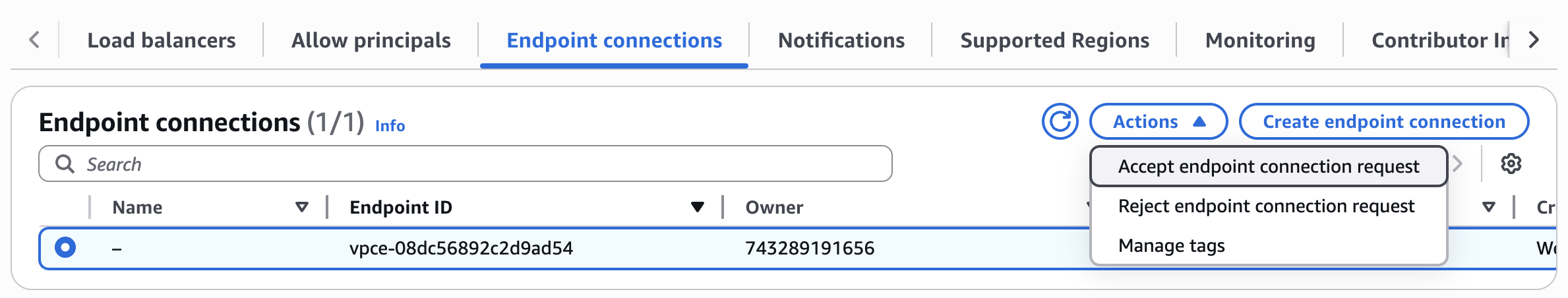

+**4. Accept the endpoint connection request**

+

+Once you got confirmation from the Elementary team that the private link connection is set up, you need to approve

+the VPC endpoint connection from Elementary. You can do so with the following steps:

+* In the VPC page in your AWS console, go to "Endpoint Services", and then choose the endpoint service that you created in step 1.

+* Under the "Endpoint Connections" tab, you should see a pending connection, select it.

+* Click on Actions->Accept endpoint connection request to accept the connection.

+

+After a couple of minutes the connection should change from "Pending" to "Available".

+

+

+

+**3. Contact the Elementary team to configure the PrivateLink connection**

+

+Please provide the Elementary team with the following details:

+1. Your AWS account ID.

+2. Your AWS region.

+3. The VPC endpoint service name (from step 1).

+4. The relevant service / integration (e.g. Github).

+5. The hostname you use internally to connect to your service.

+

+**4. Accept the endpoint connection request**

+

+Once you got confirmation from the Elementary team that the private link connection is set up, you need to approve

+the VPC endpoint connection from Elementary. You can do so with the following steps:

+* In the VPC page in your AWS console, go to "Endpoint Services", and then choose the endpoint service that you created in step 1.

+* Under the "Endpoint Connections" tab, you should see a pending connection, select it.

+* Click on Actions->Accept endpoint connection request to accept the connection.

+

+After a couple of minutes the connection should change from "Pending" to "Available".

+

+ +

+**5. Notify the elementary team**

+

+Once the endpoint connection is approved and shows as "Available", please reach out to the Elementary team, so we will ensure the connection is ready and working.

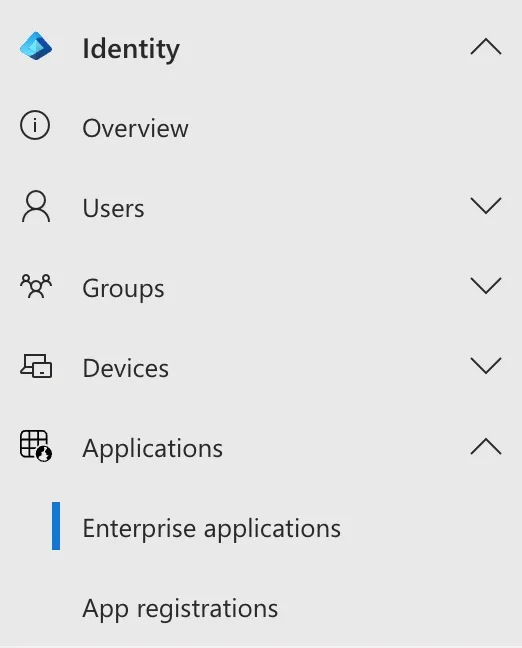

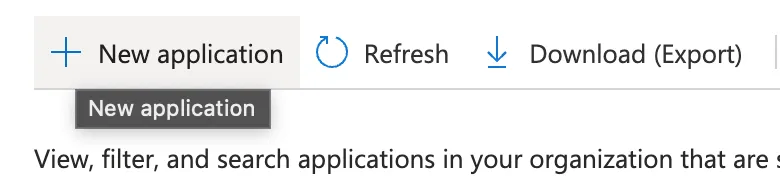

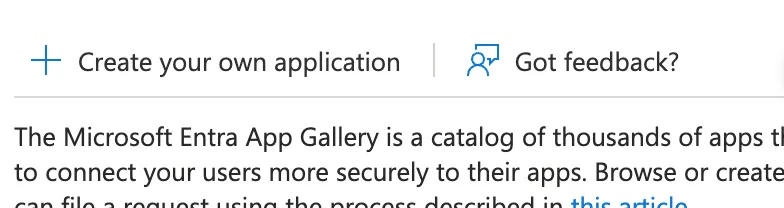

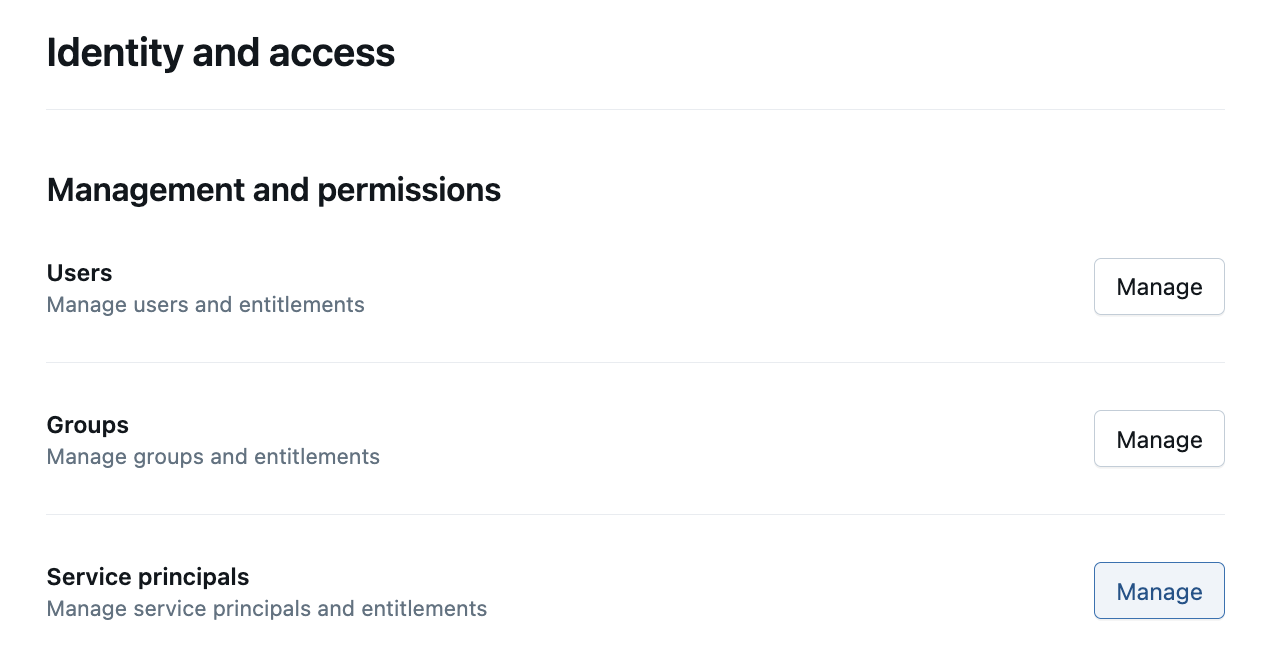

diff --git a/docs/cloud/integrations/security-and-connectivity/ms-entra.mdx b/docs/cloud/integrations/security-and-connectivity/ms-entra.mdx

new file mode 100644

index 000000000..121631cf4

--- /dev/null

+++ b/docs/cloud/integrations/security-and-connectivity/ms-entra.mdx

@@ -0,0 +1,82 @@

+---

+title: "Microsoft Entra ID"